Boumediene Hamzi: Toward an Algorithmic Theory of Machine Learning via Kernel Methods скачать в хорошем качестве

Повторяем попытку...

Скачать видео с ютуб по ссылке или смотреть без блокировок на сайте: Boumediene Hamzi: Toward an Algorithmic Theory of Machine Learning via Kernel Methods в качестве 4k

У нас вы можете посмотреть бесплатно Boumediene Hamzi: Toward an Algorithmic Theory of Machine Learning via Kernel Methods или скачать в максимальном доступном качестве, видео которое было загружено на ютуб. Для загрузки выберите вариант из формы ниже:

-

Информация по загрузке:

Скачать mp3 с ютуба отдельным файлом. Бесплатный рингтон Boumediene Hamzi: Toward an Algorithmic Theory of Machine Learning via Kernel Methods в формате MP3:

Если кнопки скачивания не

загрузились

НАЖМИТЕ ЗДЕСЬ или обновите страницу

Если возникают проблемы со скачиванием видео, пожалуйста напишите в поддержку по адресу внизу

страницы.

Спасибо за использование сервиса ClipSaver.ru

Boumediene Hamzi: Toward an Algorithmic Theory of Machine Learning via Kernel Methods

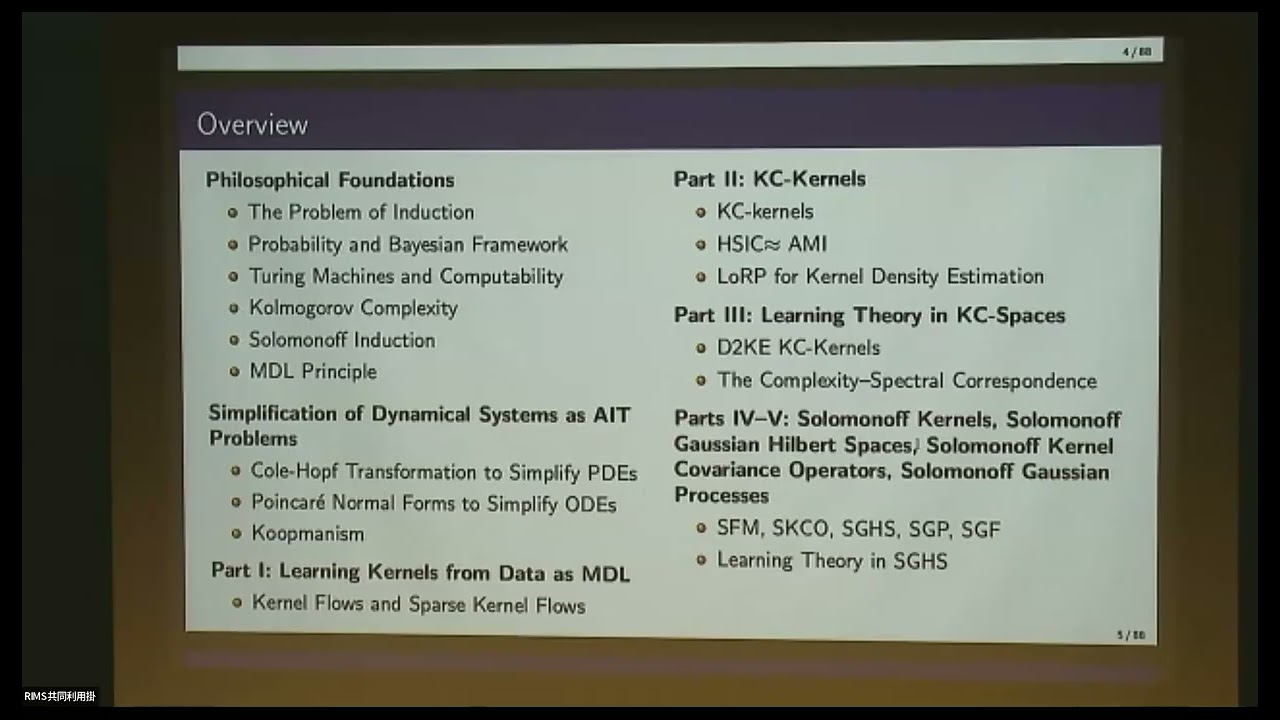

Title: Toward an Algorithmic Theory of Machine Learning: Why Compression and Learning Are Two Sides of the Same Coin Speaker: Boumediene Hamzi (Caltech and The Alan Turing Institute) Short video summary (generated by NotebookLM): • Bridging ML & AIT: Toward an Algorithmic T... Abstract: Algorithmic Information Theory (AIT) and kernel-based machine learning have traditionally evolved as separate disciplines: AIT formalizes universal inductive bias through program-length priors and Kolmogorov complexity, while kernel methods provide the geometric and spectral foundations of modern statistical learning. This talk introduces a unified theory establishing that compression and learning are two sides of the same coin, with reproducing kernels serving as the computational interface between algorithmic priors and statistical inference. Through a series of papers, we develop this programme across complementary layers: 1. Supervised Learning via MDL (Part~I) We show that learning a kernel via Sparse Kernel Flows is a Minimum Description Length (MDL) problem: cross-validation is replaced by code length, and model selection becomes selection of the simplest hypothesis consistent with the data. https://www.sciencedirect.com/science... 2. Unsupervised Learning via KC-Kernels (Part~II). We introduce Kolmogorov complexity-based kernels (KC-kernels) constructed from algorithmic dissimilarities--Normalized Compression Distance (NCD), Conditional Kolmogorov Complexity (CKC), and Algorithmic Mutual Information (AMI). These enable MDL-based clustering and density estimation via the Loss Rank Principle, while demonstrating that kernel quantities like HSIC approximate algorithmic dependence measures--establishing a bidirectional bridge between AIT and kernel methods. https://www.sciencedirect.com/science... 3. The Complexity--Spectral Correspondence (Part~III). We introduce the Kolmogorov $\varepsilon$-complexity $K_\varepsilon(f)$--the length of the shortest prefix-free program outputting a function within $\varepsilon$ of $f$--and prove matching upper and lower bounds relating it to RKHS geometry via Mercer spectra. Remarkably, the three universal regimes of classical learning theory also emerge--polynomial, plateau, and super-smooth--each dictating distinct complexity growth laws. The Distance-to-Kernel Embedding (D2KE) construction transforms KC-kernels into bona fide positive semidefinite kernels, enabling their rigorous integration into kernel machinery. https://www.researchgate.net/publicat... 4. Solomonoff Operators and Gaussian Structures (Part~IV). Parts~I--III remain fundamentally deterministic; Part~IV introduces the missing probabilistic layer, enabling compression-based Bayesian inference. We introduce the Solomonoff Feature Mixture (SFM), a master kernel template encoding Occam-weighted algorithmic similarity. The associated Solomonoff Kernel Covariance Operator (SKCO) satisfies the fundamental law $\lambda_i \asymp 2^{-\ell_i}$: program length dictates spectral weight, which controls learning capacity. This induces Solomonoff Gaussian Processes (SGPs)--computable surrogates to universal induction--and the Solomonoff Gaussian Hilbert Space (SGHS), where regularity corresponds to compressibility rather than smoothness. We further extend the framework to Solomonoff Gaussian Fields (SGFs) for modeling over general index sets, with applications to spatial processes, dynamical systems identification, and graph-structured data. https://www.researchgate.net/publicat... 5. Learning Theory in Solomonoff Spaces (Part~V). We derive minimax-optimal rates for kernel ridge regression with Solomonoff kernels and develop a complete theory of spectral collapse--the phenomenon where feature redundancy causes the fundamental law $\lambda_i \asymp 2^{-\ell_i}$ to fail. We prove that principled feature selection recovers near-optimal rates, with learning guarantees expressed through spectral mass rather than individual eigenvalue decay. The resulting framework provides a ``Rosetta Stone'' translating between algorithmic complexity and the spectral language of kernel learning, together with a practical pipeline for complexity-aware machine learning. More broadly, this programme opens the door to a complexity-based reformulation of machine learning, in which compressibility replaces smoothness as the fundamental organizing principle, inviting further exploration into compression-driven architectures, algorithmic priors for deep learning, and the broader question of whether all successful learning algorithms are, at heart, discovering short descriptions of data.