BitNet (1-bit Transformer) Explained in 3 Minutes!

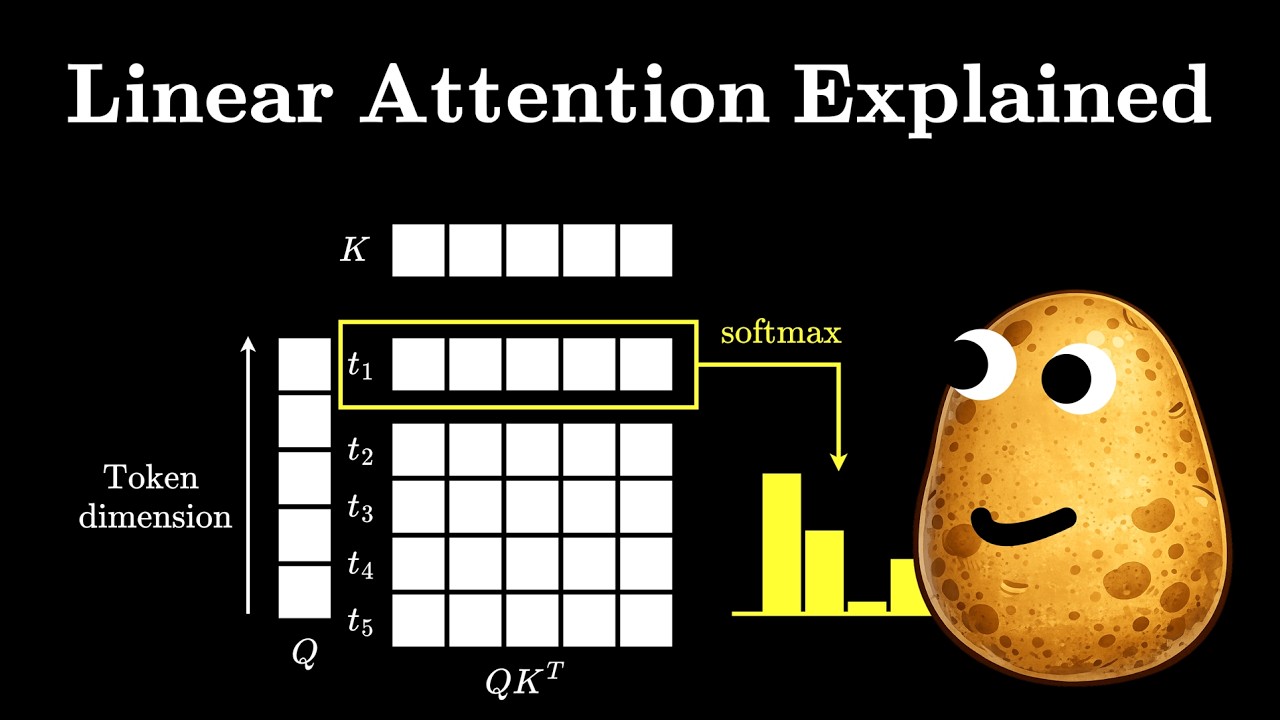

Transformers have hit a wall. As models scale toward trillions of parameters, the bottleneck is no longer just "intelligence"; it is the energy, memory, and compute cost of traditional 16-bit floating-point math. Enter BitNet.

In this video, we explore why 1-bit training (specifically BitNet b1.58) is a fundamental shift in how we build AI. Instead of compressing models after they are trained, BitNet applies quantization-aware training from the start, allowing models to reach performance competitive with full-precision baselines using only ternary weights (-1, 0, 1). Three possible values per weight carry log2(3) ≈ 1.58 bits, which is where the name comes from.

🔍 What We Cover:

- The Scaling Problem: Why FP16/FP32 is becoming a hardware nightmare.
- Post-Training vs. Quantization-Aware Training: Why most post-training compression tricks fail at low bit-widths.
- The BitLinear Layer: How BitNet replaces the multiplications inside a matrix multiply with simple additions and subtractions.
- Stability Secrets: The role of latent (full-precision) weights and Straight-Through Estimators (STE).
- Hardware Efficiency: Why this leads to large energy savings and faster inference.

📄 Referenced Papers:

- BitNet: Scaling 1-bit Transformers for Large Language Models
- The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits (BitNet b1.58)

Enjoyed the breakdown? Subscribe for more deep dives into the architecture of the future.

#BitNet #machinelearning #AI #transformers #llms #quantization #1BitAI #deeplearning #artificialintelligence #BitNet158 #techexplained
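To make the BitLinear and STE ideas concrete, here is a minimal NumPy sketch, not the authors' implementation. It assumes the absmean rounding rule described in the BitNet b1.58 paper (scale by the mean absolute weight, round, clip to [-1, 1]) and leaves out activation quantization and normalization entirely. The function names `absmean_ternary`, `ternary_matvec`, and `ste_sgd_step` are hypothetical, chosen only for illustration.

```python
import numpy as np

def absmean_ternary(w, eps=1e-5):
    """Quantize a weight matrix to {-1, 0, +1} plus one per-tensor scale.

    Assumed rule: w_q = clip(round(w / (mean(|w|) + eps)), -1, 1).
    """
    gamma = np.mean(np.abs(w))
    w_q = np.clip(np.round(w / (gamma + eps)), -1, 1).astype(np.int8)
    return w_q, gamma

def ternary_matvec(w_q, gamma, x):
    """Compute (gamma * w_q) @ x with only adds/subtracts inside each row.

    A +1 weight adds the input, a -1 weight subtracts it, and a 0 weight
    skips it. The single multiply by gamma happens once per output.
    """
    out = np.empty(w_q.shape[0], dtype=x.dtype)
    for i, row in enumerate(w_q):
        out[i] = x[row == 1].sum() - x[row == -1].sum()
    return gamma * out

def ste_sgd_step(w_latent, x, grad_out, lr=0.1):
    """One SGD step on the latent (full-precision) weights.

    For y = W_eff @ x the gradient w.r.t. W_eff is outer(grad_out, x).
    The straight-through estimator treats the rounding step as identity
    in the backward pass, so that gradient is applied directly to w_latent.
    """
    grad_w = np.outer(grad_out, x)
    return w_latent - lr * grad_w

# Illustrative data only: random latent weights and a random input vector.
rng = np.random.default_rng(0)
w_latent = rng.normal(size=(4, 8)).astype(np.float32)
x = rng.normal(size=8).astype(np.float32)

w_q, gamma = absmean_ternary(w_latent)
y_add = ternary_matvec(w_q, gamma, x)              # addition-only path
y_ref = (gamma * w_q.astype(np.float32)) @ x       # ordinary matmul reference
w_next = ste_sgd_step(w_latent, x, np.ones(4, dtype=np.float32))
```

The addition-only result `y_add` agrees with the ordinary matrix product `y_ref` up to floating-point rounding, which is the point of the ternary format: the weights only select, add, or subtract inputs. The latent weights stay in full precision during training and are re-quantized on every forward pass, so small gradient updates can accumulate until a weight crosses a rounding boundary.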