HoV Prompting - Philosophical Foundation and Technical Method for Articulating Values in ACS

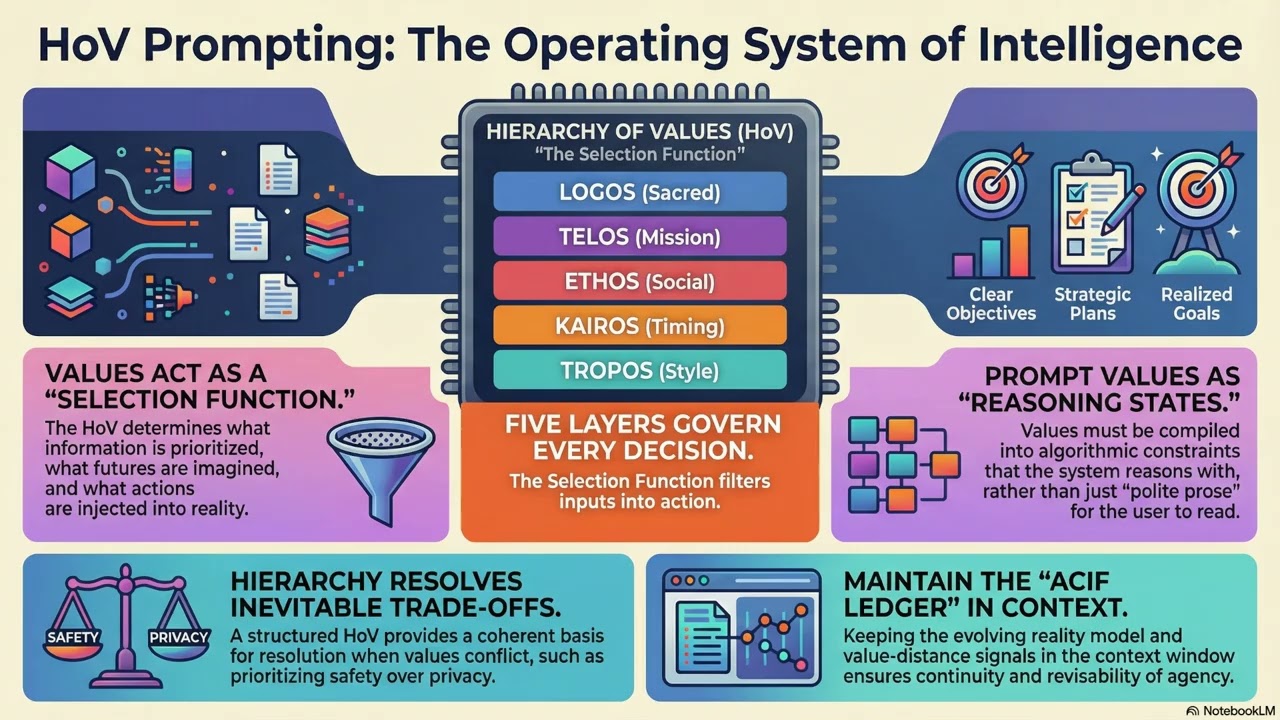

This video summarizes a core idea from my book The Dance of the Geniuses: intelligence and consciousness cannot be meaningfully separated, and what makes them operational is not “more computation” but a hierarchy of values. In this framework, the Hierarchy of Values (HoV) is not ethical decoration. It is the selection function of conscious intelligence: it determines what counts as relevant information, which futures are desirable, and which actions are permitted when trade-offs appear.

The video then moves from philosophy to technique. It explains why most “values prompting” fails in practice: values are often written for the audience to read rather than for the system to use as a control structure. A model can produce excellent value statements and still plan actions that contradict them if those values are not expressed as operational constraints and priorities.

To make this concrete, I present the five-layer HoV model (Logos, Telos, Ethos, Kairos, and Tropos) and show why each layer must be articulated differently. Logos holds the non-negotiable invariants and prohibitions. Telos defines identity and mission. Ethos defines relational and communal constraints. Kairos defines context thresholds and timing. Tropos defines execution style and tactical preferences. The point is not vocabulary; it is that a hierarchy only works when it can resolve conflicts deterministically.

From there, the video focuses on ACIF-style prompting: treating inputs as reality descriptions (often produced by interpretative subsystems) and treating outputs as action plans toward a desirable future. A key operational principle is persistence. If the system is expected to revise its reality model and decision chain on every turn, that evolving state must remain explicitly present in the context window (or be stored in an external persistent memory). Otherwise the system cannot reliably revisit and revise its own past conclusions.
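The requirement that a hierarchy resolve conflicts deterministically can be illustrated with a minimal Python sketch. It is not the method shown in the video. It assumes that the order in which the layers are listed is also their priority order, that Logos acts as a hard veto, and that the remaining layers can be expressed as numeric preference scores compared lexicographically. The names `Layer`, `choose_action`, `prohibited`, and `scorers` are hypothetical and introduced only for illustration.

```python
from enum import IntEnum
from typing import Callable, Dict, List, Optional


class Layer(IntEnum):
    """HoV layers; a lower number means higher priority (assumed ordering)."""
    LOGOS = 0   # non-negotiable invariants and prohibitions
    TELOS = 1   # identity and mission
    ETHOS = 2   # relational and communal constraints
    KAIROS = 3  # context thresholds and timing
    TROPOS = 4  # execution style and tactical preferences


Scorer = Callable[[str], float]


def choose_action(
    candidates: List[str],
    prohibited: Callable[[str], bool],
    scorers: Dict[Layer, Scorer],
) -> Optional[str]:
    """Pick one action, letting higher layers always dominate lower ones."""
    # Logos: a prohibited action is removed outright, whatever its other scores.
    permitted = [a for a in candidates if not prohibited(a)]
    if not permitted:
        return None  # no permitted action; the caller has to escalate

    lower_layers = [Layer.TELOS, Layer.ETHOS, Layer.KAIROS, Layer.TROPOS]

    def key(action: str):
        # Negated scores so that min() prefers the highest score per layer.
        scores = tuple(
            -scorers.get(layer, lambda _: 0.0)(action) for layer in lower_layers
        )
        # The action name is the final tie-breaker, so the result never
        # depends on the order in which candidates were supplied.
        return scores + (action,)

    return min(permitted, key=key)


# Hypothetical example values for a parental-assistance setting.
candidates = ["share_location", "ask_consent", "do_nothing"]
telos = {"share_location": 2.0, "ask_consent": 1.0, "do_nothing": 1.0}
ethos = {"ask_consent": 1.0, "do_nothing": 0.0}
scorers = {
    Layer.TELOS: lambda a: telos.get(a, 0.0),
    Layer.ETHOS: lambda a: ethos.get(a, 0.0),
}


def prohibited(action: str) -> bool:
    return action == "share_location"


print(choose_action(candidates, prohibited, scorers))
```

In this sketch the action with the highest mission score is still rejected because a Logos rule forbids it, and the tie between the two remaining actions at the Telos level is broken one layer down, at Ethos. A real system would need a richer representation than single floats, but the lexicographic comparison captures the idea that a lower layer can never outvote a higher one.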
To keep this persistent state from becoming noisy, the video introduces a “ledger” approach and controlled forgetting: compress older evolutions into a short summary of what is now assumed true, while preserving commitments, constraints, and unresolved risks. It also proposes an experiment for action plans: each step can include a brief “why” (which value or risk constraint it serves) and a brief “expected result” (the observable change the step should produce), making the plan traceable and falsifiable.

Finally, the video stress-tests the framework with three system categories: military-grade systems, caregiving systems, and parental control/assistance systems. These extremes expose hidden assumptions and clarify how different domains require different HoV designs while keeping the same layered structure and persistence discipline.

If you are building agentic systems, experimenting with action-oriented prompting, or thinking seriously about value alignment beyond slogans, this video provides a practical, model-driven way to articulate values so they can actually govern decisions.
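The ledger with controlled forgetting, and the plan steps annotated with a "why" and an "expected result", can be sketched roughly as follows. This is one possible reading of the description, not the author's implementation. The names `Ledger`, `PlanStep`, `compact`, `render`, and `render_plan` are hypothetical, and the `summarize` callable is a stand-in for whatever produces the compressed summary (in practice probably a model call).

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PlanStep:
    action: str
    why: str              # the value or risk constraint this step serves
    expected_result: str  # the observable change the step should produce


@dataclass
class Ledger:
    assumed_true: List[str] = field(default_factory=list)  # compressed history
    commitments: List[str] = field(default_factory=list)   # never compressed
    constraints: List[str] = field(default_factory=list)   # never compressed
    open_risks: List[str] = field(default_factory=list)    # never compressed
    recent: List[str] = field(default_factory=list)        # verbatim updates

    def record(self, update: str) -> None:
        """Append one revision of the reality model."""
        self.recent.append(update)

    def compact(self, keep_last: int, summarize: Callable[[List[str]], str]) -> None:
        """Controlled forgetting: fold all but the newest updates into a summary."""
        cut = len(self.recent) - keep_last
        if cut <= 0:
            return  # nothing old enough to compress
        old, self.recent = self.recent[:cut], self.recent[cut:]
        self.assumed_true.append(summarize(old))

    def render(self) -> str:
        """Text to place in the context window on every turn."""
        sections = [
            ("ASSUMED TRUE", self.assumed_true),
            ("COMMITMENTS", self.commitments),
            ("CONSTRAINTS", self.constraints),
            ("OPEN RISKS", self.open_risks),
            ("RECENT UPDATES", self.recent),
        ]
        lines: List[str] = []
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


def render_plan(steps: List[PlanStep]) -> str:
    """One line per step, so each step can be traced and later checked."""
    return "\n".join(
        f"{i}. {s.action} | why: {s.why} | expected: {s.expected_result}"
        for i, s in enumerate(steps, start=1)
    )


# Hypothetical example values.
ledger = Ledger(
    commitments=["Never share location without consent"],
    open_risks=["Battery level unknown"],
)
for update in ["User is at home", "User left home",
               "User is on the bus", "User arrived at school"]:
    ledger.record(update)

# Stand-in summarizer: keep only the latest of the old updates.
ledger.compact(keep_last=2, summarize=lambda old: old[-1])

plan = [
    PlanStep(
        action="Ask the guardian for consent",
        why="Logos: no location sharing without consent",
        expected_result="Guardian replies yes or no",
    )
]
print(ledger.render())
print(render_plan(plan))
```

Only the `recent` list is ever compressed; commitments, constraints, and open risks are kept verbatim, which mirrors the distinction the description draws. Recording an `expected_result` per step gives a later turn something concrete to compare against observed reality, which is what makes the plan falsifiable.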