Adversarial Attack & Defense Demonstration

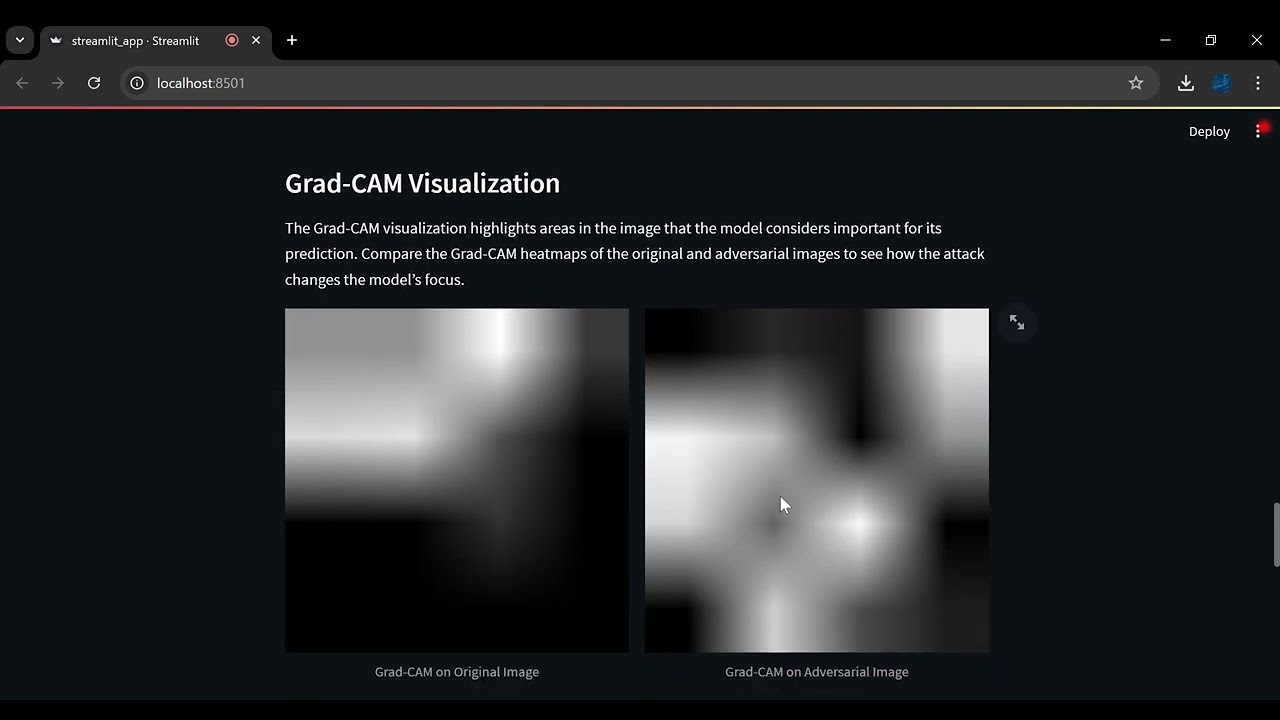

This video showcases a custom-built Streamlit application for exploring adversarial machine learning. The app demonstrates how small, nearly imperceptible perturbations can sharply reduce the accuracy of deep learning models in image classification.

Key Features:

Image Upload and Class Selection: Users can upload an image or pick one of the sample images, then choose its correct class from the CIFAR-100 dataset categories.

Attack Types: The app supports two popular adversarial attack methods.

Fast Gradient Sign Method (FGSM): A single-step attack that perturbs the image in the direction of the sign of the loss gradient with respect to the input, pushing the model toward misclassification.

Projected Gradient Descent (PGD): A stronger, iterative attack that applies FGSM-style steps repeatedly, projecting the result back into the allowed perturbation range after each step.

Interactive Parameter Tuning: Users can adjust epsilon (attack strength) for FGSM, or alpha (step size) and the number of iterations for PGD. These settings let users experiment with the strength and subtlety of the adversarial modifications.

Real-Time Visualization: The app displays the original and adversarial images side by side, along with the model's prediction for each. Grad-CAM heatmaps show which image regions the model focuses on, revealing how an attack shifts that focus.

Educational Insights: The app includes interactive explanations of how adversarial attacks exploit model vulnerabilities, why defenses such as adversarial training or input preprocessing are effective, and which other methods improve model robustness.

Hands-On Exploration of Defense Mechanisms: Users are introduced to multiple adversarial defense techniques, building a deeper understanding of how these approaches can harden models against malicious attacks.
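The FGSM and PGD updates described above can be illustrated with a minimal sketch. This is not the app's code: the description does not show its implementation, so the example swaps the deep CIFAR-100 classifier for a toy logistic-regression "model" whose input gradient can be written by hand. The weights `w`, bias `b`, input `x`, and all function names are hypothetical and chosen only for illustration; `epsilon`, `alpha`, and `iterations` mirror the parameters named in the description.

```python
import math

def predict_prob(w, b, x):
    """Probability of class 1 under a toy logistic-regression model (assumption)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def loss_grad_wrt_input(w, b, x, y):
    """Gradient of binary cross-entropy with respect to the input: (p - y) * w."""
    p = predict_prob(w, b, x)
    return [(p - y) * wi for wi in w]

def sign(v):
    return (v > 0) - (v < 0)

def clip(v, lo, hi):
    return max(lo, min(hi, v))

def fgsm(w, b, x, y, epsilon):
    """Single step of size epsilon along the sign of the loss gradient."""
    grad = loss_grad_wrt_input(w, b, x, y)
    # Clip to [0, 1] so the result is still a valid (normalized) pixel vector.
    return [clip(xi + epsilon * sign(gi), 0.0, 1.0) for xi, gi in zip(x, grad)]

def pgd(w, b, x, y, epsilon, alpha, iterations):
    """Repeated small sign-gradient steps, each projected back into the
    L-infinity ball of radius epsilon around the original input."""
    x_adv = list(x)
    for _ in range(iterations):
        grad = loss_grad_wrt_input(w, b, x_adv, y)
        stepped = [xa + alpha * sign(gi) for xa, gi in zip(x_adv, grad)]
        x_adv = [
            clip(clip(xa, xi - epsilon, xi + epsilon), 0.0, 1.0)
            for xa, xi in zip(stepped, x)
        ]
    return x_adv

# Hypothetical 4-"pixel" input with true label 1.
w = [2.0, -3.0, 1.5, -1.0]
b = 0.1
x = [0.6, 0.3, 0.5, 0.4]
y = 1
epsilon, alpha, iterations = 0.15, 0.05, 5

x_fgsm = fgsm(w, b, x, y, epsilon)
x_pgd = pgd(w, b, x, y, epsilon, alpha, iterations)

print("clean p(class 1):", predict_prob(w, b, x))
print("FGSM  p(class 1):", predict_prob(w, b, x_fgsm))
print("PGD   p(class 1):", predict_prob(w, b, x_pgd))
```

In this linear toy model the gradient sign never changes, so PGD ends at the same point as FGSM once it reaches the epsilon boundary. With a deep network the gradient changes between steps, which is where the iterative attack typically gains its extra strength.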