HT - Testing AI-Generated Code: How BMAD’s Test Engineering Agent Turns “It Runs” into “It’s Ready”

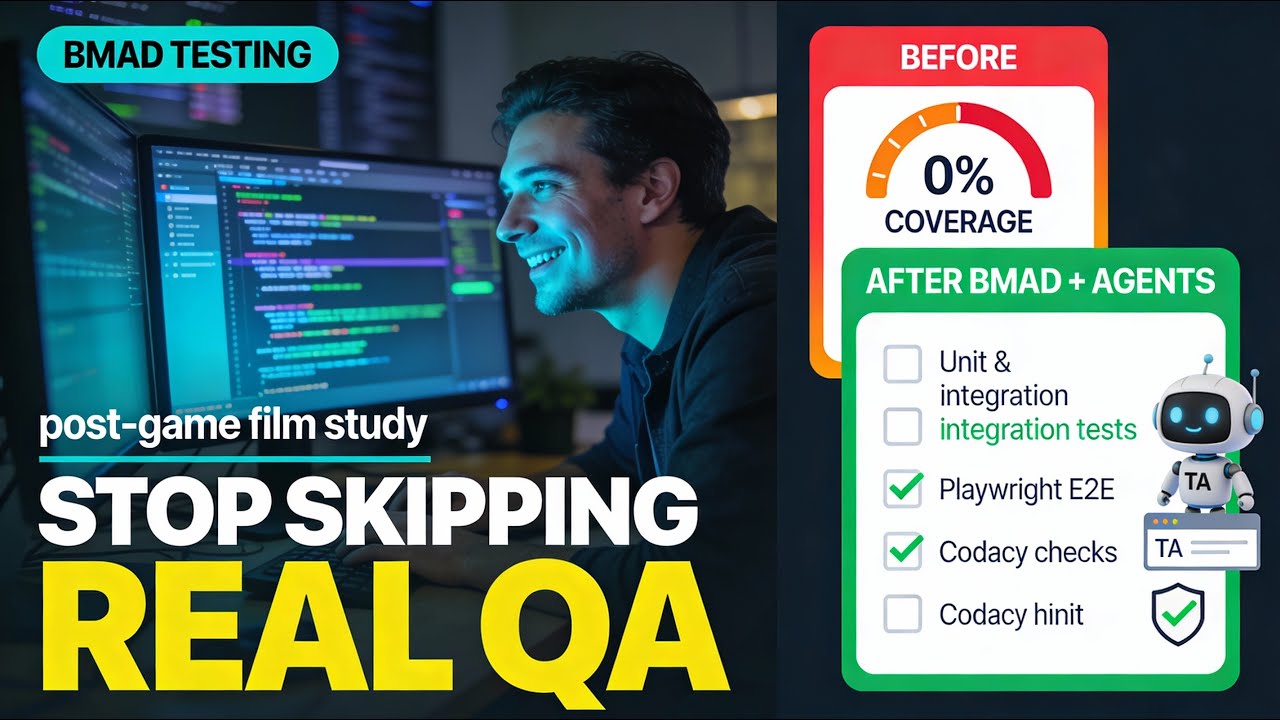

Testing is where a lot of AI-generated code quietly falls apart. In this episode, we bring a Test Engineering Agent into the BMAD mix and show what it really takes to make testing first-class.

What this video covers
- Handing a BMAD-built trivia app over to the Test Engineering Agent to stand up a real testing setup, not just “it compiles, ship it.”
- Catching the basics many of us skip: virtual environment setup, dependency isolation, and wiring in the right Python libraries and tools.
- Seeing the agent configure the testing framework, add missing pieces, and prepare the repo for serious test coverage.

From “let agents build” to “trust but verify”
- Why letting agents generate code is fast, but reviewing and understanding that code is where humans earn their keep.
- How to think about BMAD agents like ambitious first-year interns: powerful and eager, but needing constraints, guardrails, and human oversight.
- Where additional markdown files and conventions (test framework, coverage expectations, tool integration) can guide better testing behavior from day one.

Closing the test coverage gap
- Reviewing the architecture and discovering the uncomfortable truth: zero unit tests, zero integration tests, zero system tests.
- Asking the Test Engineering Agent to work with the Dev agent to design and implement appropriate test coverage.
- Using Codacy as a “third set of eyes” to flag security vulnerabilities, library issues, and quality problems that tests alone might miss.

Running the tests (and learning from the tape)
- Watching the test suite execute in the console and treating it like post-game film: what went well, what surprised us, and what to change next time.
- Turning this brownfield experience into a checklist for future greenfield BMAD projects: what to configure up front so testing isn’t an afterthought.
- Framing all of this as an evolution in how we build with AI agents, from raw speed to more deliberate, risk-aware engineering.
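
To make the “zero unit tests, zero integration tests” gap concrete, here is a minimal sketch of what a first pass at coverage might look like for a small trivia app. Everything in it is hypothetical: `normalize`, `score_answer`, `TriviaRound`, and the 10-point scoring rule are invented for illustration and are not taken from the app in the video. It also uses Python’s standard-library `unittest` as an assumption, not whatever framework the Test Engineering Agent actually configured.

```python
import unittest


# --- Hypothetical trivia-app code under test (not from the video) ---

def normalize(answer: str) -> str:
    """Lower-case and collapse whitespace so 'Paris ' matches 'paris'."""
    return " ".join(answer.lower().split())


def score_answer(correct: str, given: str, points: int = 10) -> int:
    """Award points only when the normalized answers match (hypothetical rule)."""
    return points if normalize(given) == normalize(correct) else 0


class TriviaRound:
    """Hypothetical game loop: takes (prompt, correct_answer) pairs, tracks score."""

    def __init__(self, questions):
        self.questions = questions
        self.score = 0

    def play(self, answers):
        # Pair each question with the player's answer and accumulate points.
        for (_, correct), given in zip(self.questions, answers):
            self.score += score_answer(correct, given)
        return self.score


# --- Tests ---

class UnitTests(unittest.TestCase):
    """Unit tests: exercise one function in isolation."""

    def test_normalize_ignores_case_and_spacing(self):
        self.assertEqual(normalize("  New   York "), "new york")

    def test_wrong_answer_scores_zero(self):
        self.assertEqual(score_answer("Paris", "London"), 0)

    def test_right_answer_scores_points(self):
        self.assertEqual(score_answer("Paris", "paris "), 10)


class IntegrationTests(unittest.TestCase):
    """Integration test: the round and the scoring rule working together."""

    def test_round_accumulates_score(self):
        game = TriviaRound([("Capital of France?", "Paris"), ("2 + 2?", "4")])
        # One correct answer ("paris") and one wrong answer ("5").
        self.assertEqual(game.play(["paris", "5"]), 10)
```

Saved as, say, `test_trivia.py`, the file runs with `python -m unittest test_trivia`. Splitting the cases into two `TestCase` classes mirrors the unit/integration distinction above; system tests would drive the running app end to end and are out of scope for a sketch this small.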

Why this matters now
- How better testing and coverage reduce risk when you’re shipping AI-assisted code with real users and real data.
- Why this moment in software (agents, IDE integration, and evolving guardrails) is as exciting, and as disruptive, as the early days of the web.
- An invitation to use BMAD, testing agents, and tools like Codacy as your own lab for learning how to ship safer AI-powered software.

If you’ve ever wondered how to move from “the agent generated it” to “I trust this in production,” this testing-focused BMAD episode is your behind-the-scenes walkthrough, brought to you by Tim Unscripted.