By Tying Embeddings You Are Assuming the Distributional Hypothesis --- ICML 2024 скачать в хорошем качестве

Повторяем попытку...

Скачать видео с ютуб по ссылке или смотреть без блокировок на сайте: By Tying Embeddings You Are Assuming the Distributional Hypothesis --- ICML 2024 в качестве 4k

У нас вы можете посмотреть бесплатно By Tying Embeddings You Are Assuming the Distributional Hypothesis --- ICML 2024 или скачать в максимальном доступном качестве, видео которое было загружено на ютуб. Для загрузки выберите вариант из формы ниже:

-

Информация по загрузке:

Скачать mp3 с ютуба отдельным файлом. Бесплатный рингтон By Tying Embeddings You Are Assuming the Distributional Hypothesis --- ICML 2024 в формате MP3:

Если кнопки скачивания не

загрузились

НАЖМИТЕ ЗДЕСЬ или обновите страницу

Если возникают проблемы со скачиванием видео, пожалуйста напишите в поддержку по адресу внизу

страницы.

Спасибо за использование сервиса ClipSaver.ru

By Tying Embeddings You Are Assuming the Distributional Hypothesis --- ICML 2024

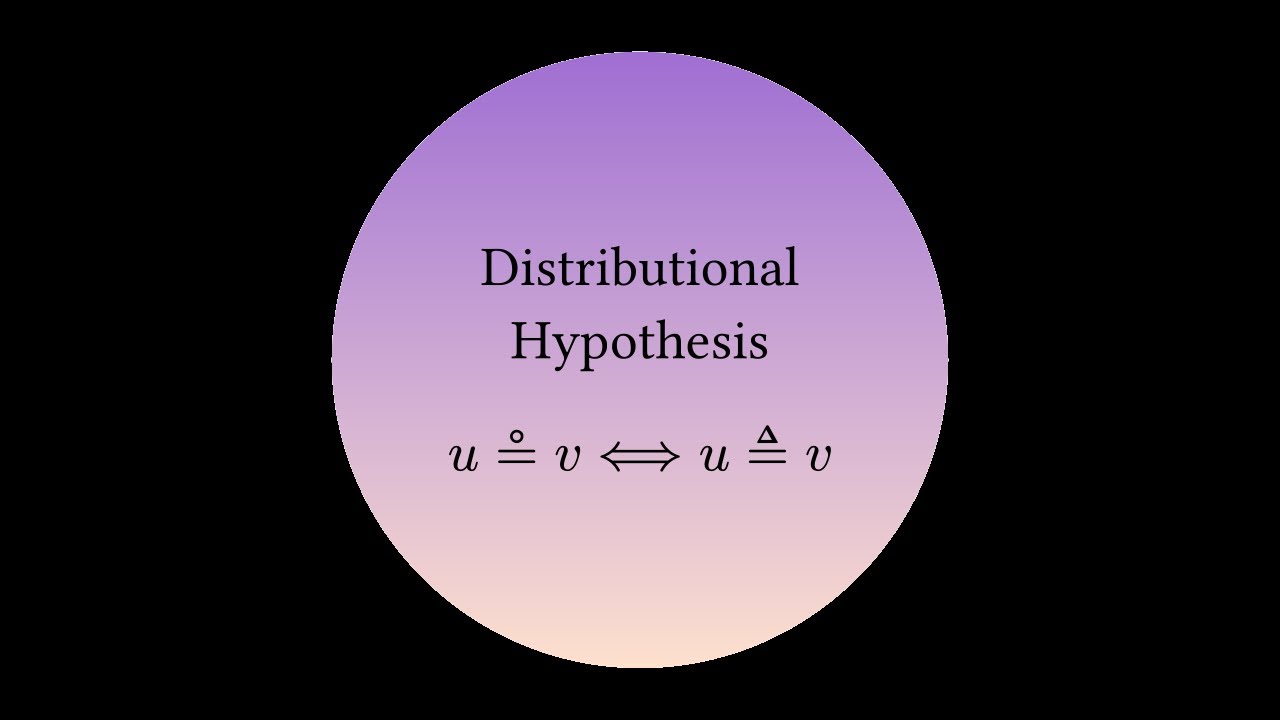

Hello everyone! Welcome to my first video on this channel. I'm excited to discuss our paper that was accepted as a spotlight presentation (top 3.5% = (144+191)/9473) at the ICML 2024 conference. We feel incredibly fortunate for this recognition. In this video, I've aimed to present our research as clearly and intuitively as possible. I hope you find it both informative and engaging. Special thanks to my colleague Luca Favalli for providing the voiceover. You can explore some of his research at https://scholar.google.com/citations?user=.... ========== Contacts & Pages ========== If you're interested in my work, here's how you can connect with me:: X (formerly Twitter): https://x.com/f14bertolotti Email: f14.bertolotti@gmail.com Google Scholar: https://scholar.google.com/citations?user=... Instagram: https://www.instagram.com/f14.bertolotti/ (if you want to see some of my drawings) Website: https://homes.di.unimi.it/bertolotti =========== Paper Details =========== Authors: Francesco Bertolotti and Walter Cazzola Abstract: In this work, we analyze both theoretically and empirically the effect of tied input-output embeddings—a popular technique that reduces the model size while often improving training. Interestingly, we found that this technique is connected to Harris (1954)’s distributional hypothesis—often portrayed by the famous Firth (1957)’s quote “a word is characterized by the company it keeps”. Specifically, our findings indicate that words (or, more broadly, symbols) with similar semantics tend to be encoded in similar input embeddings, while words that appear in similar contexts are encoded in similar output embeddings (thus explaining the semantic space arising in input and output embedding of foundational language models). As a consequence of these findings, the tying of the input and output embeddings is encouraged only when the distributional hypothesis holds for the underlying data. These results also provide insight into the embeddings of foundation language models (which are known to be semantically organized). Further, we complement the theoretical findings with several experiments supporting the claims. Keywords: Embeddings, Language Modeling, Weight Tying, Distributional Hypothesis For more details, please visit: https://openreview.net/forum?id=yyYMAprcAR