FireGNN: Neuro-Symbolic GNN with Trainable Fuzzy Rules | NeurIPS 2025 Workshops | Prajit Sengupta


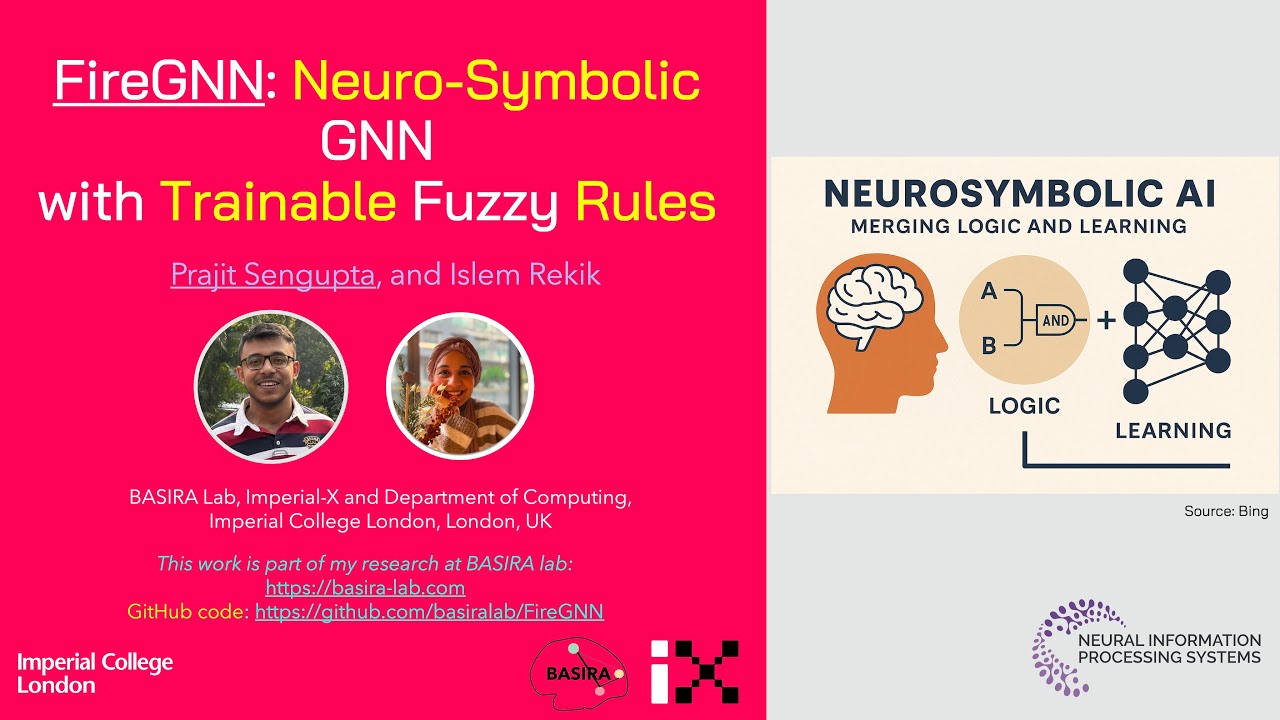


#XAI #GNN #FuzzyLogic #MICCAI2025 #ExplainableAI

👉 arXiv link: [https://arxiv.org/abs/2509.10510](https://arxiv.org/abs/2509.10510)

👉 Code link: [https://github.com/basiralab/FireGNN](https://github.com/basiralab/FireGNN)

🔥 FireGNN: Interpretable Graph Learning with Trainable Fuzzy Rules 🔥

Meet FireGNN, the first graph neural network that embeds trainable fuzzy rules directly into its architecture for medical image classification. It delivers strong predictive performance together with interpretable, rule-based explanations, which are crucial for clinical trust.

Why this matters

GNNs capture relational patterns in medical data, but most act as black boxes, limiting transparency in high-stakes decisions. FireGNN bridges this gap by introducing fuzzy symbolic reasoning within the GNN itself, making its predictions explainable by design.

What's broken today

- Standard GNNs lack intrinsic interpretability.
- Post-hoc explanations are often inconsistent or misleading.
- Clinical models need human-understandable reasoning for real-world adoption.

How FireGNN works (at a glance)

1. Fuzzy rules as logic modules: learnable thresholds and sharpness parameters capture node degree, clustering coefficient, and label agreement.
2. Intrinsic symbolic reasoning: rules are integrated directly into message passing, so decisions are interpretable.
3. Auxiliary self-supervised tasks: tasks such as homophily prediction and similarity entropy serve as benchmarks for topological learning.

Results

- 🔥 Evaluated on five MedMNIST benchmarks and MorphoMNIST
- 🔥 Achieves competitive accuracy together with rule-based interpretability
- 🔥 Demonstrates transparent, topology-aware reasoning

What this enables

A step toward trustworthy, interpretable AI in clinical graph learning, where reasoning and prediction go hand in hand.
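To make the idea of a "fuzzy rule with a learnable threshold and sharpness" concrete, here is a minimal, framework-free sketch. It assumes a sigmoid membership function, mu(x) = sigmoid(sharpness * (x - threshold)), and a product t-norm for combining rules. Both are common choices but are assumptions here, not details confirmed by the description. The names `node_features`, `FuzzyRule`, and `rule_and`, the toy graph, and the hand-written gradient step are all hypothetical illustrations and are not taken from the FireGNN repository. In the actual model these parameters are learned jointly with the GNN.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def node_features(adj, labels, node):
    """Topological features of one node in an undirected graph.

    adj: dict node -> set of neighbours; labels: dict node -> class label
    (hypothetical input format chosen for this sketch).
    """
    nbrs = adj[node]
    degree = len(nbrs)
    # Local clustering coefficient: fraction of neighbour pairs that are linked.
    if degree < 2:
        clustering = 0.0
    else:
        links = sum(1 for u in nbrs for v in nbrs if u < v and v in adj[u])
        clustering = links / (degree * (degree - 1) / 2)
    # Label agreement: fraction of neighbours sharing this node's label.
    agreement = sum(labels[u] == labels[node] for u in nbrs) / degree if degree else 0.0
    return {"degree": float(degree), "clustering": clustering, "agreement": agreement}


class FuzzyRule:
    """Soft predicate 'feature is HIGH': mu(x) = sigmoid(sharpness * (x - threshold)).

    threshold and sharpness are the trainable parameters. Here they are plain
    floats updated by a hand-written gradient step (an assumption for illustration).
    """

    def __init__(self, feature, threshold, sharpness):
        self.feature = feature
        self.threshold = threshold
        self.sharpness = sharpness

    def membership(self, feats):
        return sigmoid(self.sharpness * (feats[self.feature] - self.threshold))

    def sgd_step(self, feats, target, lr=0.1):
        """One gradient-descent step on (mu - target)^2; returns the pre-update loss."""
        x = feats[self.feature]
        mu = self.membership(feats)
        z_grad = 2.0 * (mu - target) * mu * (1.0 - mu)  # dLoss/dz via the sigmoid derivative
        grad_threshold = z_grad * (-self.sharpness)     # dz/dthreshold = -sharpness
        grad_sharpness = z_grad * (x - self.threshold)  # dz/dsharpness = x - threshold
        self.threshold -= lr * grad_threshold
        self.sharpness -= lr * grad_sharpness
        return (mu - target) ** 2


def rule_and(memberships):
    """Product t-norm: a differentiable fuzzy AND."""
    out = 1.0
    for m in memberships:
        out *= m
    return out


# Toy 4-node graph (hypothetical data): node 3 is a leaf with a different label.
adj = {0: {1, 2, 3}, 1: {0, 2}, 2: {0, 1}, 3: {0}}
labels = {0: "A", 1: "A", 2: "A", 3: "B"}
feats = node_features(adj, labels, 0)  # degree 3, clustering 1/3, agreement 2/3

high_degree = FuzzyRule("degree", threshold=2.0, sharpness=4.0)
high_agreement = FuzzyRule("agreement", threshold=0.5, sharpness=10.0)
firing = rule_and([high_degree.membership(feats), high_agreement.membership(feats)])
print(f"rule 'high degree AND high agreement' fires at {firing:.3f} on node 0")

# Train the agreement rule so that it fires weakly on node 0 (target 0.0).
before = high_agreement.membership(feats)
for _ in range(20):
    high_agreement.sgd_step(feats, target=0.0, lr=0.1)
after = high_agreement.membership(feats)
print(f"agreement membership {before:.3f} -> {after:.3f}, threshold {high_agreement.threshold:.2f}")
```

The appeal of this parameterisation is that each rule stays human-readable after training ("degree above roughly 2", "agreement above the learned threshold"), while the sharpness controls how close the soft predicate is to a hard cut-off. In a deep-learning framework, threshold and sharpness would be tensors updated by autograd instead of the manual gradient shown here.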

---

🎓 Highlight: Presented at MICCAI 2025

🔗 Code: [https://github.com/basiralab/FireGNN](https://github.com/basiralab/FireGNN)

🎥 More videos / playlist: @basiralab

If you find this helpful, please like, share, and subscribe!

Keywords: explainable AI, interpretable GNN, fuzzy logic, graph neural networks, medical imaging, symbolic reasoning, topology-aware learning, MedMNIST, MorphoMNIST, XAI, MICCAI

#XAI #ExplainableAI #GNN #FuzzyLogic #InterpretableML #MedicalImaging #GraphNeuralNetworks #MICCAI #MedMNIST #MorphoMNIST #FireGNN
