Technical Insights from Amazon’s AI Shopping Assistant with Sandeep Shukla

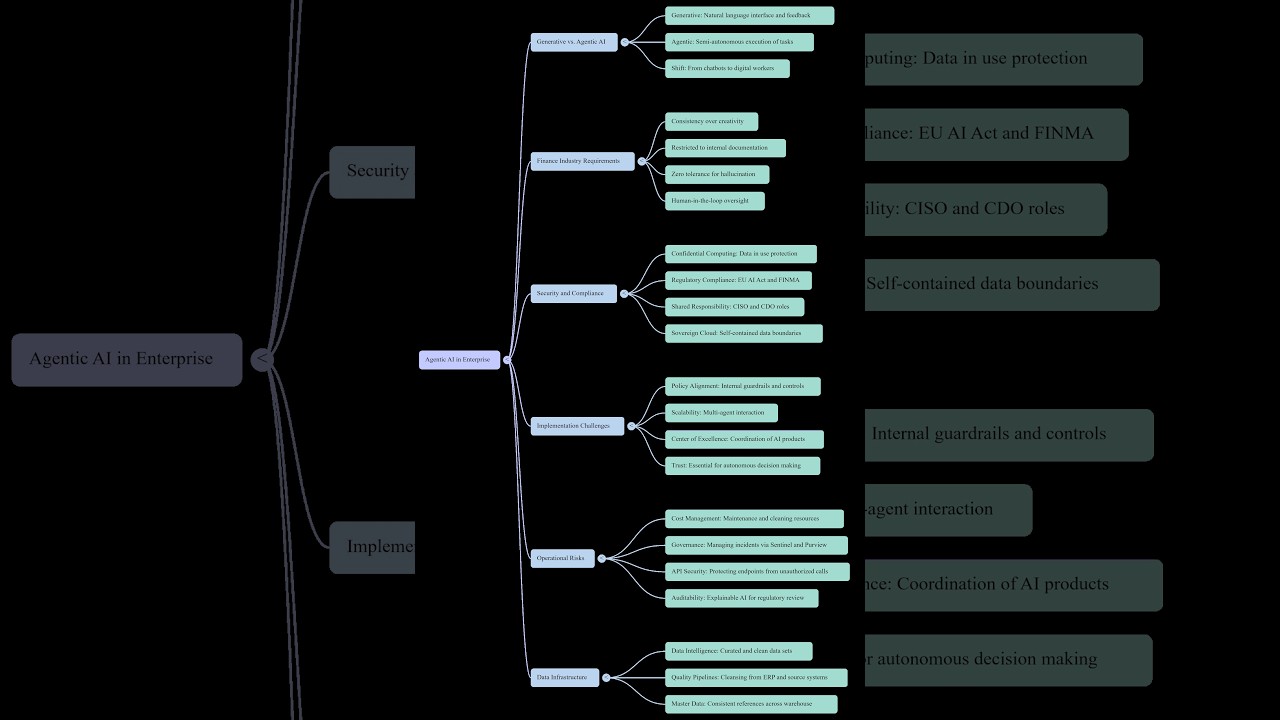

In this episode, I sit down with Sandeep Shukla, a Software Development Manager at Amazon who leads the teams building Rufus, Amazon’s generative AI shopping assistant. We dive deep into the technical realities of moving from simple chatbots to complex, agentic AI systems that handle real-time shopping discovery. Sandeep breaks down the "Context Wall," the challenges of bidirectional streaming within a mobile app's performance boundaries, and why he tells his senior engineers to treat AI agents like interns.

Please note: The views and opinions expressed in this conversation are Sandeep’s own and do not necessarily reflect the official policy or position of his employer.

What we cover:
The "Context Wall": Why managing conversation history and tool-calling is the biggest technical hurdle for agentic workflows.
Latency vs. Experience: Strategies for achieving sub-two-second response times using pre-computation and caching.
The Knowledge Gap: Why senior engineers struggle to delegate to AI and how to fix it by providing organizational context.
The agentcontext.md Framework: A practical hack for improving the quality of AI-generated PRs and speeding up team onboarding.
Discovery over Search: How LLMs are transforming e-commerce by moving beyond keyword searches to nuanced, conversational discovery.

Whether you're an engineering leader or a developer trying to pivot into Generative AI, this conversation provides a roadmap for building production-grade AI products at scale.

Timestamps:
0:00 - Introduction to Rufus and the goal of AI Discovery
1:48 - The "Context Wall": Moving from Chatbots to Agents
4:00 - Improving recommendations: Smart models vs. Context
8:01 - Scaling AI: Managing Latency and bidirectional streaming
11:57 - Infrastructure costs and caching strategies
17:22 - The Skill Gap: Why senior engineers struggle with GenAI
23:46 - The agentcontext.md hack for high-quality code
28:38 - Final thoughts: Staying effective in a fast-moving ecosystem

#GenAI #LLM #AmazonRufus #SoftwareEngineering #AIWorkflows #TechInsights