Powering a Petabyte-Scale Cache: Uber’s Alluxio Implementation

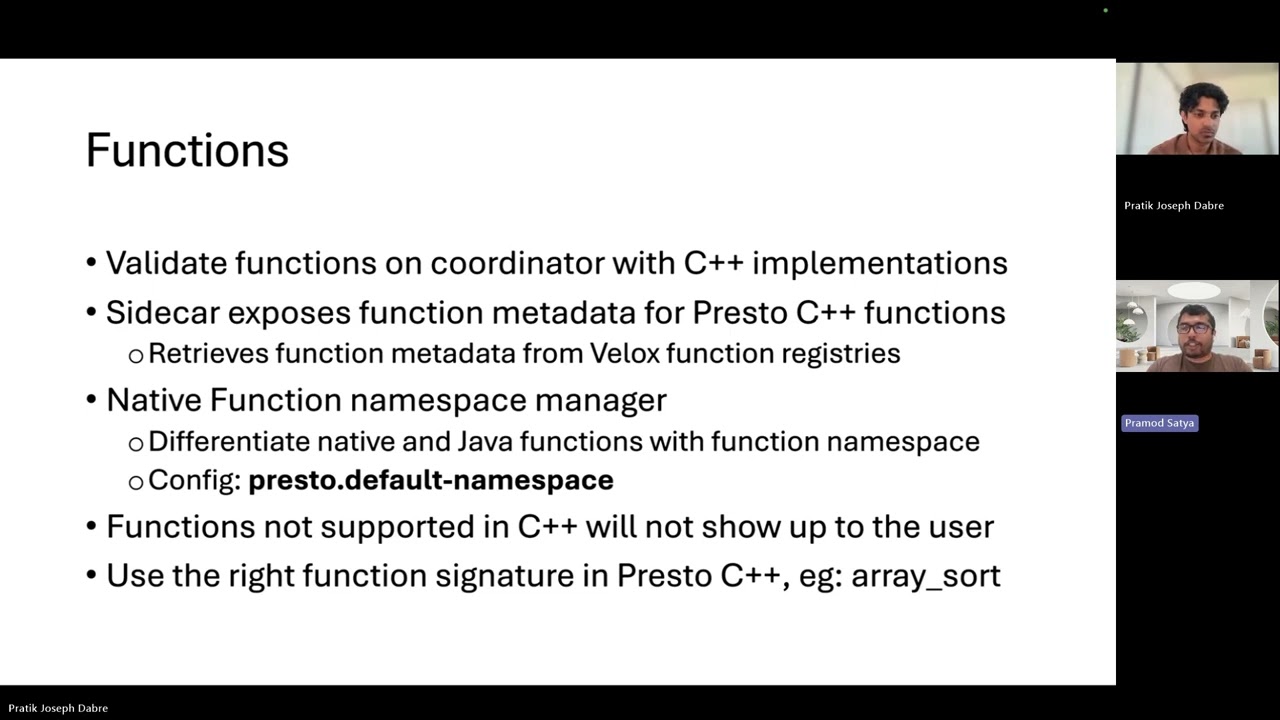

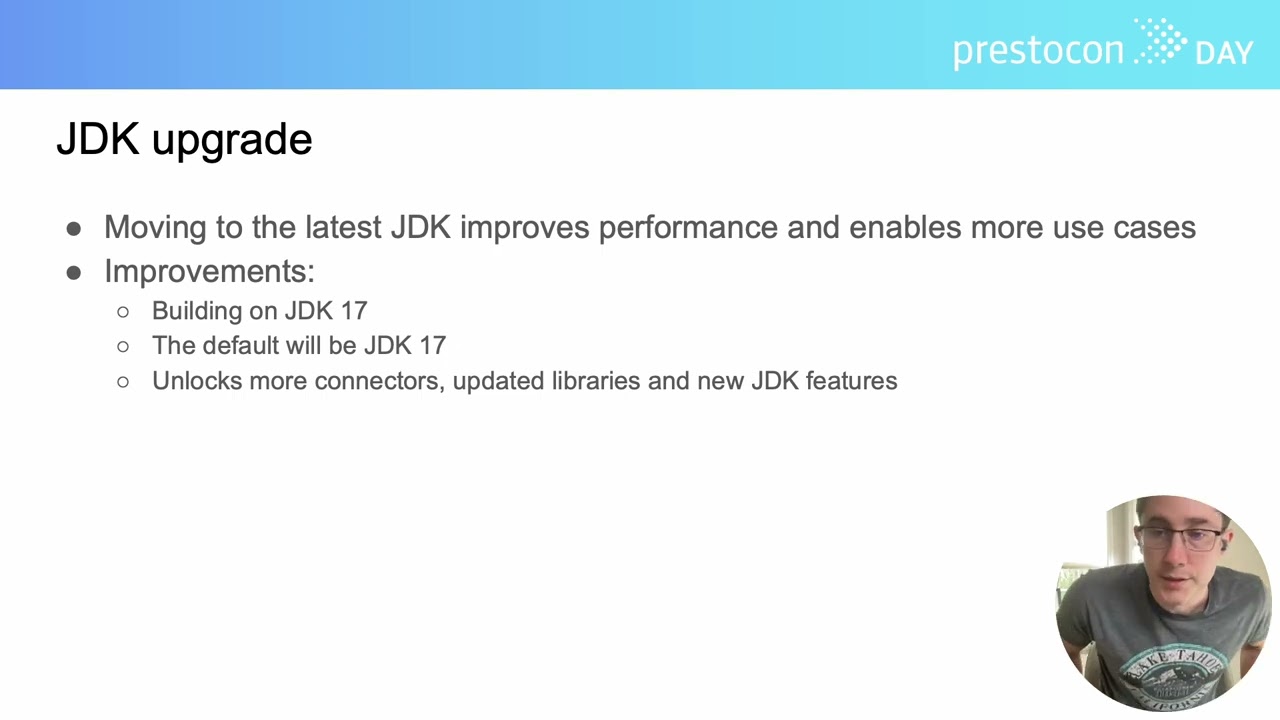

At Uber, Presto is a critical engine for interactive analytics, processing hundreds of thousands of queries and scanning hundreds of petabytes of data daily. To meet the demand for low-latency queries and high reliability, Uber extended its Alluxio deployment with key architectural enhancements for greater scalability and reliability. This customized Alluxio system forms the backbone of our distributed remote caching layer, which manages a cache of 3 to 4 petabytes. This talk will delve into Uber’s strategies for achieving 99.99% cache reliability with this enhanced system, including robust client fallback mechanisms and consistent hashing to keep the cache efficient while the cluster scales. A significant outcome of this implementation is a substantial reduction in egress bandwidth from the underlying storage, which is particularly important for performance and cost efficiency during peak hours. We will share insights into managing these large-scale cache clusters, highlighting our adaptive cache filter, which has been instrumental in achieving cache hit rates above 80% and optimizing resource utilization. Attendees will learn the tangible benefits of this approach, best practices for using Alluxio with Presto in high-throughput environments, and key takeaways for deploying a similar high-performance caching solution.
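The abstract names two techniques without showing them: consistent hashing, so that resizing the cluster remaps only a fraction of cached files, and a client fallback, so that a failed cache read is served from the underlying storage instead. The following is a minimal, generic sketch of both ideas in Python. It is not Uber's implementation and does not use Alluxio's or Presto's APIs; the names `ConsistentHashRing`, `read_with_fallback`, `read_from_cache`, and `read_from_storage` are hypothetical, as are the worker names and the virtual-node count.

```python
import bisect
import hashlib


class ConsistentHashRing:
    """Toy consistent-hash ring with virtual nodes (illustrative, not Alluxio's API)."""

    def __init__(self, workers, vnodes=100):
        self.vnodes = vnodes  # virtual nodes per worker smooth out the key distribution
        self._ring = []       # sorted list of (hash, worker) points on the ring
        for worker in workers:
            self.add(worker)

    @staticmethod
    def _hash(key):
        # Any stable hash works; MD5 is used here for distribution, not security.
        digest = hashlib.md5(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def add(self, worker):
        for i in range(self.vnodes):
            bisect.insort(self._ring, (self._hash(f"{worker}#{i}"), worker))

    def remove(self, worker):
        self._ring = [(h, w) for h, w in self._ring if w != worker]

    def lookup(self, key):
        """Return the worker owning `key`, or None if the ring is empty."""
        if not self._ring:
            return None
        # First ring point at or after the key's hash, wrapping around at the end.
        idx = bisect.bisect_left(self._ring, (self._hash(key),))
        return self._ring[idx % len(self._ring)][1]


def read_with_fallback(path, ring, read_from_cache, read_from_storage):
    """Try the cache worker that owns `path`; on any failure, read from storage.

    `read_from_cache(worker, path)` and `read_from_storage(path)` are
    hypothetical callables supplied by the caller.
    """
    worker = ring.lookup(path)
    if worker is not None:
        try:
            return read_from_cache(worker, path)
        except Exception:
            pass  # worker unreachable or read failed: fall through to storage
    return read_from_storage(path)


if __name__ == "__main__":
    ring = ConsistentHashRing(["worker-a", "worker-b", "worker-c"])
    paths = [f"/warehouse/trips/part-{i}.parquet" for i in range(1000)]
    before = {p: ring.lookup(p) for p in paths}
    ring.add("worker-d")
    moved = sum(1 for p in paths if ring.lookup(p) != before[p])
    print(f"{moved} of {len(paths)} paths changed owner after adding a worker")
```

In this sketch, every path that changes owner after `add` moves to the new worker, and all other paths keep their existing owner, which is the property that lets a cache keep most of its contents warm while the cluster grows or shrinks. The fallback trades latency for availability: a query still succeeds when a cache worker is down, at the cost of a slower read from the underlying storage.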