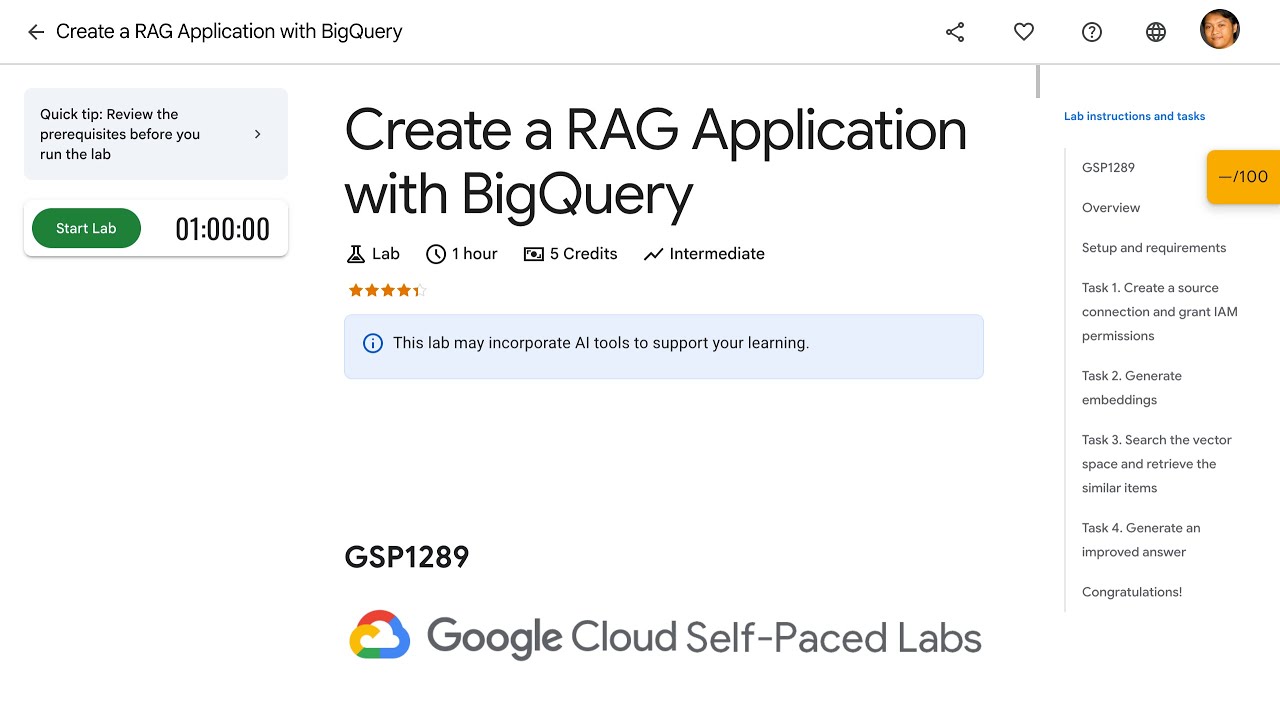

Create a RAG Application with BigQuery GSP1289

Overview

Concerned about AI hallucinations? While AI can be a valuable resource, it sometimes generates inaccurate, outdated, or overly general responses, a phenomenon known as "hallucination." This lab teaches you how to implement a Retrieval Augmented Generation (RAG) pipeline to address this issue. RAG improves large language models (LLMs) like Gemini by grounding their output in contextually relevant information from a specific dataset.

Assume you are helping Coffee-on-Wheels, a pioneering mobile coffee vendor, analyze customer feedback on its services. Without access to the latest data, Gemini's responses might be inaccurate. To solve this problem, you decide to build a RAG pipeline that includes three steps:

1. Generate embeddings: Convert customer feedback text into vector embeddings, which are numerical representations of data that capture semantic meaning.
2. Search vector space: Create an index of these vectors, search for similar items, and retrieve them.
3. Generate improved answers: Augment Gemini with the retrieved information to produce more accurate and relevant responses.

BigQuery allows seamless connection to remote generative AI models on Vertex AI. It also provides various functions for embeddings, vector search, and text generation directly through SQL queries or Python notebooks.

#gcp #googlecloud #qwiklabs #learntoearn
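
To make the three RAG steps concrete, here is a minimal local sketch in plain Python. It is not the lab's BigQuery code: the sample reviews, the word-count "embedding", and the helper names (`embed`, `cosine`, `retrieve`, `build_prompt`) are all assumptions made for illustration. In the lab itself, a remote Vertex AI embedding model and BigQuery's vector search would take the place of the toy functions, and the final prompt would be sent to Gemini rather than printed.

```python
import math
from collections import Counter

# Hypothetical feedback rows; in the lab this data lives in a BigQuery table.
REVIEWS = [
    "The espresso was cold and the wait was long",
    "Friendly barista and great latte art",
    "Truck arrived late to the office park",
]


def embed(text):
    """Toy embedding: a sparse word-count vector.

    Stand-in for a real embedding model, which would return a dense
    vector that captures semantic meaning rather than exact words.
    """
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, index, top_k=2):
    """Return the top_k review texts most similar to the question."""
    query_vector = embed(question)
    ranked = sorted(
        index,
        key=lambda item: cosine(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]


def build_prompt(question, context):
    """Augment the question with retrieved reviews before calling an LLM."""
    bullets = "\n".join(f"- {review}" for review in context)
    return (
        "Answer using only these customer reviews:\n"
        f"{bullets}\n\n"
        f"Question: {question}"
    )


# Step 1: generate embeddings for every review.
index = [(review, embed(review)) for review in REVIEWS]

# Step 2: search the vector space for reviews similar to the question.
question = "Why was the espresso cold"
context = retrieve(question, index)

# Step 3: build the grounded prompt that would be sent to the LLM.
prompt = build_prompt(question, context)
print(prompt)
```

The brute-force `sorted` call compares the question against every review, which is fine for a handful of rows. A vector index, as mentioned in step 2, exists to avoid that full scan on large tables.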