Replicability in Learning скачать в хорошем качестве

Повторяем попытку...

Скачать видео с ютуб по ссылке или смотреть без блокировок на сайте: Replicability in Learning в качестве 4k

У нас вы можете посмотреть бесплатно Replicability in Learning или скачать в максимальном доступном качестве, видео которое было загружено на ютуб. Для загрузки выберите вариант из формы ниже:

-

Информация по загрузке:

Скачать mp3 с ютуба отдельным файлом. Бесплатный рингтон Replicability in Learning в формате MP3:

Если кнопки скачивания не

загрузились

НАЖМИТЕ ЗДЕСЬ или обновите страницу

Если возникают проблемы со скачиванием видео, пожалуйста напишите в поддержку по адресу внизу

страницы.

Спасибо за использование сервиса ClipSaver.ru

Replicability in Learning

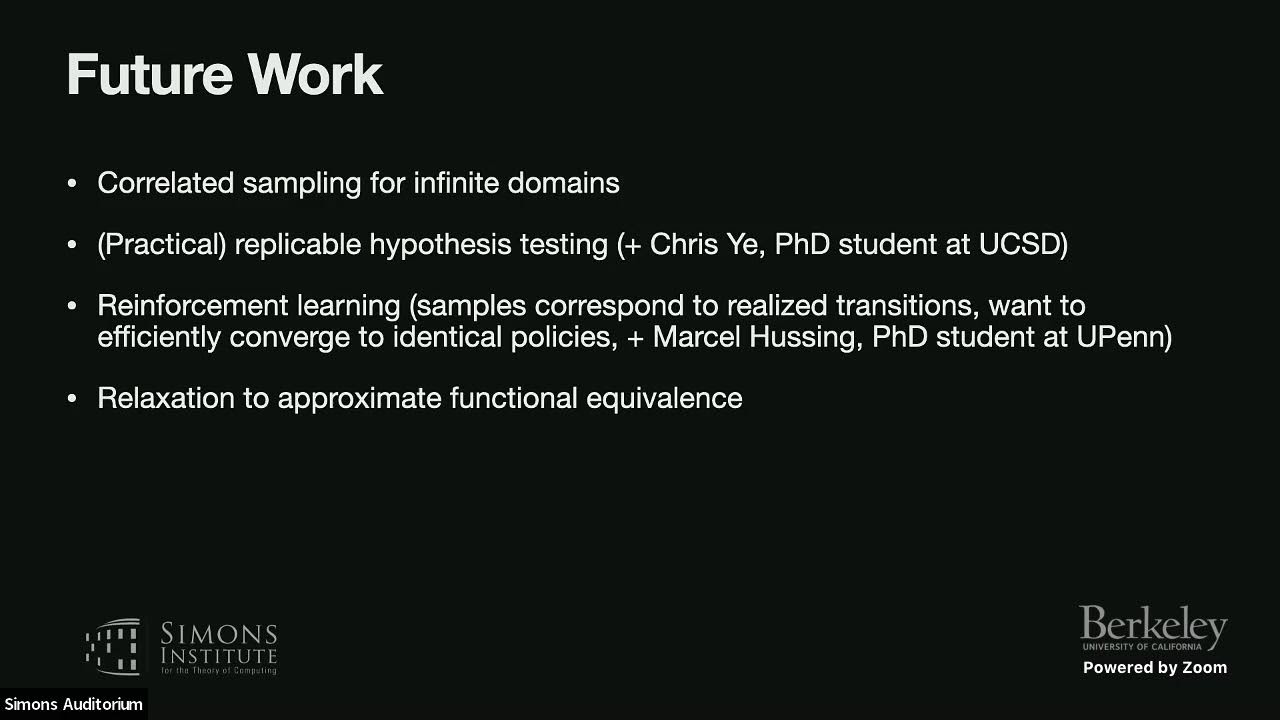

Jessica Sorrell (UCSD) https://simons.berkeley.edu/talks/rep... Lower Bounds, Learning, and Average-Case Complexity Abstract Replicability is vital to ensuring scientific conclusions are reliable, but failures of replicability have been a major issue in nearly all scientific areas of study in recent decades. A key issue underlying the replicability crisis is the explosion of methods for data generation, screening, testing, and analysis, where, crucially, only the combinations producing the most significant results are reported. Such practices (also known as p-hacking, data dredging, and researcher degrees of freedom) can lead to erroneous findings that appear to be significant, but that don’t hold up when other researchers attempt to replicate them. In this talk, we introduce a new notion of replicability for randomized algorithms. This notion ensures that with high probability, an algorithm returns exactly the same output when run with two samples from the same distribution. Despite the exceedingly strong demand of replicability, there are efficient replicable algorithms for several fundamental problems in statistics and learning, including statistical queries, approximate heavy-hitters, medians, and halfspaces. We also discuss connections to other well-studied notions of algorithmic stability, such as differential privacy. This talk is based on work with Mark Bun, Marco Gaboardi, Max Hopkins, Russell Impagliazzo, Rex Lei, Toniann Pitassi, and Satchit Sivakumar.