Build an ADAS System: Lane Detection & Mapping with YOLO | Autonomous Driving AI

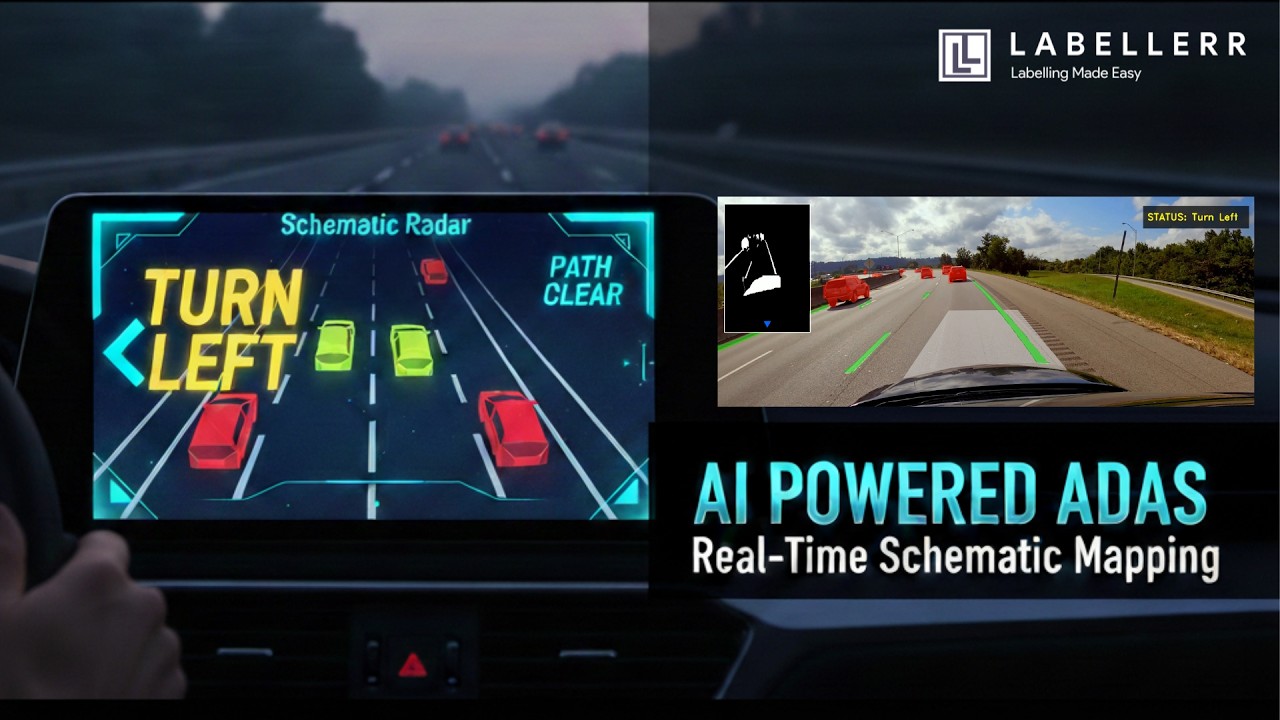

In this video, I showcase my latest computer vision project: Advanced Vision-Based ADAS with Real-Time Schematic Mapping. Advanced Driver-Assistance Systems (ADAS) use AI to monitor a vehicle's surroundings and are becoming central to road safety. My implementation builds on this by using the YOLO11x instance segmentation model, which produces pixel-level masks of vehicles and lane lines for high-fidelity perception.

Key features of this project:

- Real-time 4K inference: processes UHD video at full resolution for maximum accuracy.
- Schematic radar HUD: a custom-coded digital-twin interface that filters out environmental noise.
- Directional steering logic: an 8-point safety perimeter that triggers "Turn Left" or "Turn Right" signals based on lane drift analysis.
- Spatial mapping: uses OpenCV to bridge the gap between pixel masks and actionable driving commands.

This project was developed using Python, OpenCV, and the Ultralytics YOLO11 framework, with model training performed on high-performance cloud GPUs.

Cookbook: https://github.com/Labellerr/Hands-On...
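The directional steering logic described above can be sketched as a point-in-polygon test against an 8-point sensor zone followed by an x-coordinate comparison. This is a minimal sketch, not the author's code: it assumes the lane-line masks have already been turned into pixel-coordinate points (with Ultralytics, `results[0].masks.xy` returns mask outlines in that form), and the `SENSOR_ZONE` coordinates, the `steering_signal` helper, and the rule "a lane line intruding on the left half means steer right" are all hypothetical choices.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]

# Hypothetical 8-point sensor zone (x, y) in pixels for a 3840x2160 frame.
# In practice the zone is marked by hand on a sample frame; these values are placeholders.
SENSOR_ZONE: List[Point] = [
    (1500, 1500), (2340, 1500), (2500, 1700), (2600, 1900),
    (2600, 2100), (1240, 2100), (1240, 1900), (1340, 1700),
]


def point_in_polygon(pt: Point, poly: Sequence[Point]) -> bool:
    """Ray casting: toggle on every polygon edge crossed by a ray going right from pt."""
    x, y = pt
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge spans the ray's y level (so y1 != y2)
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:  # crossing lies to the right of pt
                inside = not inside
    return inside


def steering_signal(lane_points: Sequence[Point], zone: Sequence[Point]) -> Optional[str]:
    """Hypothetical rule: compare the intruding lane points' mean x with the zone's mean vertex x."""
    inside = [p for p in lane_points if point_in_polygon(p, zone)]
    if not inside:
        return None  # no lane line inside the zone: no warning
    center_x = sum(p[0] for p in zone) / len(zone)
    mean_x = sum(p[0] for p in inside) / len(inside)
    # Assumed convention: a lane line entering the left half suggests drift to the left, so steer right.
    return "Turn Right" if mean_x < center_x else "Turn Left"


if __name__ == "__main__":
    print(steering_signal([(1300, 1950), (100, 100)], SENSOR_ZONE))  # Turn Right
```

OpenCV's `cv2.pointPolygonTest` could replace the hand-written ray-casting test. Averaging all intruding points, rather than reacting to a single pixel, makes the signal less sensitive to small mask errors.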
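The noise-filtered schematic radar HUD can be approximated by keeping only a whitelist of classes and rescaling their mask outlines from the 4K frame onto a small canvas. This is a hedged sketch: the `Detection` type, the `to_hud` helper, and the class names in `HUD_CLASSES` are assumptions, and the drawing step (for example `cv2.polylines` on a blank image) is left out so the example runs without OpenCV.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

Point = Tuple[float, float]


@dataclass
class Detection:
    """Hypothetical container for one segmented object."""
    cls_name: str
    polygon: List[Point]  # mask outline in source-frame pixels


# Assumed class names; any other class is treated as environmental noise.
HUD_CLASSES = {"car", "truck", "lane", "border"}


def to_hud(
    dets: Iterable[Detection],
    frame_size: Tuple[int, int],
    hud_size: Tuple[int, int],
) -> List[Detection]:
    """Keep whitelisted detections and map their outlines onto the HUD canvas."""
    fw, fh = frame_size
    hw, hh = hud_size
    hud: List[Detection] = []
    for d in dets:
        if d.cls_name not in HUD_CLASSES:
            continue  # not part of the schematic: treat as noise
        # Multiply before dividing so evenly divisible sizes scale exactly.
        scaled = [(x * hw / fw, y * hh / fh) for x, y in d.polygon]
        hud.append(Detection(d.cls_name, scaled))
    return hud


if __name__ == "__main__":
    frame = [Detection("car", [(3840, 2160), (0, 0)]), Detection("tree", [(10, 10)])]
    print(to_hud(frame, (3840, 2160), (384, 216)))
```

Rendering only these rescaled outlines on an empty canvas is what removes the background; the filtering itself is just a class lookup.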
GitHub: https://github.com/Labellerr

Chapters:

- 0:00 Introduction: AI-Powered ADAS with Real-Time Schematic Mapping
- 0:35 Video Overview: Building a Lane Keeping & Vehicle Detection System
- 1:03 Project Goals: Lane Drift Prevention & Collision Avoidance
- 1:19 Key Features: Multi-Class Segmentation, Sensor Zone & HUD
- 1:52 Technical Stack: YOLO11x for Instance Segmentation
- 2:39 Step 1: Dataset Preparation - Highway Driving Video
- 3:24 Step 2: Extracting 50 Frames for Annotation
- 3:55 Step 3: Annotating on the Labellerr Platform - Cars, Trucks & Lane Lines
- 4:18 Key Technique: Grouping Dash Lines into Single Lane Objects
- 5:38 Step 4: Exporting Annotations & Converting to YOLO Format
- 6:12 Step 5: Training the YOLO11x Model for Segmentation
- 6:36 Step 6: Defining the Sensor Zone for Lane Departure Warning
- 7:14 Marking the Sensor Zone on a Sample Frame
- 7:54 Logic: X-Coordinate Comparison for Left/Right Drift Alerts
- 8:38 Step 7: Running Inference with Lane Departure Logic
- 8:58 Results: Real-Time Lane Drift Detection & Steering Guidance
- 10:36 Step 8: Creating the Schematic Radar HUD (Noise-Filtered View)
- 11:48 Results: Clean HUD Showing Only Lanes, Vehicles & Borders
- 12:52 Conclusion & Additional Resources

Interested in learning more about our services?

- Website: https://www.labellerr.com
- Book a Demo: https://www.labellerr.com/book-a-demo

Find us on social media:

- LinkedIn: / labellerr
- Twitter: https://x.com/Labellerr1

#adashorts #ComputerVision #YOLO11 #AutonomousDriving #ObjectDetection #OpenCV #SelfDrivingCars #ArtificialIntelligence #DeepLearning #PythonProgramming #SmartMobility #MachineLearning #TechShowcase #AIProjects #InstanceSegmentation #RoadSafety #DigitalTwin #FutureOfTransport #NeuralNetworks #PyTorch
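Step 2 extracts 50 frames from the highway video for annotation. One simple way to choose them, assumed here rather than taken from the video, is to space them evenly across the clip; the hypothetical `sample_frame_indices` helper below computes those indices using only the standard library.

```python
from typing import List


def sample_frame_indices(total_frames: int, num_samples: int = 50) -> List[int]:
    """Return up to num_samples distinct frame indices spread evenly over the video."""
    if total_frames <= 0 or num_samples <= 0:
        return []
    num_samples = min(num_samples, total_frames)  # short clips: take every frame
    step = total_frames / num_samples  # >= 1, so indices never repeat
    return [int(i * step) for i in range(num_samples)]


if __name__ == "__main__":
    idx = sample_frame_indices(1000)
    print(len(idx), idx[:3], idx[-1])  # 50 [0, 20, 40] 980
```

With OpenCV, the frame count would come from `cap.get(cv2.CAP_PROP_FRAME_COUNT)` on a `cv2.VideoCapture`, and each selected frame could be read by seeking with `cap.set(cv2.CAP_PROP_POS_FRAMES, i)` before calling `cap.read()`.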
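Step 4 converts the exported annotations to YOLO format. For Ultralytics segmentation models, each object is one line in a `.txt` label file: a class index followed by the polygon's x y pairs, normalized by image width and height. The sketch below formats one such line; the `polygon_to_yolo_seg` name, the six-decimal precision, and the class index in the example are assumptions, and reading the Labellerr export itself is not shown.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def polygon_to_yolo_seg(class_id: int, polygon: Sequence[Point], img_w: int, img_h: int) -> str:
    """Format one polygon as a YOLO segmentation label line: 'cls x1 y1 x2 y2 ...'."""
    if len(polygon) < 3:
        raise ValueError("a segmentation polygon needs at least 3 points")
    coords = []
    for x, y in polygon:
        # Normalize pixel coordinates to [0, 1], clamping points that fall off the image.
        nx = min(max(x / img_w, 0.0), 1.0)
        ny = min(max(y / img_h, 0.0), 1.0)
        coords.append(f"{nx:.6f} {ny:.6f}")
    return f"{class_id} " + " ".join(coords)


if __name__ == "__main__":
    # Hypothetical class index 2 for a lane line on a 3840x2160 frame.
    print(polygon_to_yolo_seg(2, [(0, 0), (1920, 0), (1920, 1080)], 3840, 2160))
    # 2 0.000000 0.000000 0.500000 0.000000 0.500000 0.500000
```

Clamping matters for hand-drawn polygons, whose vertices can land slightly outside the frame; YOLO expects normalized coordinates within [0, 1].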