Doc-to-LoRA: Learning to Instantly Internalize Contexts

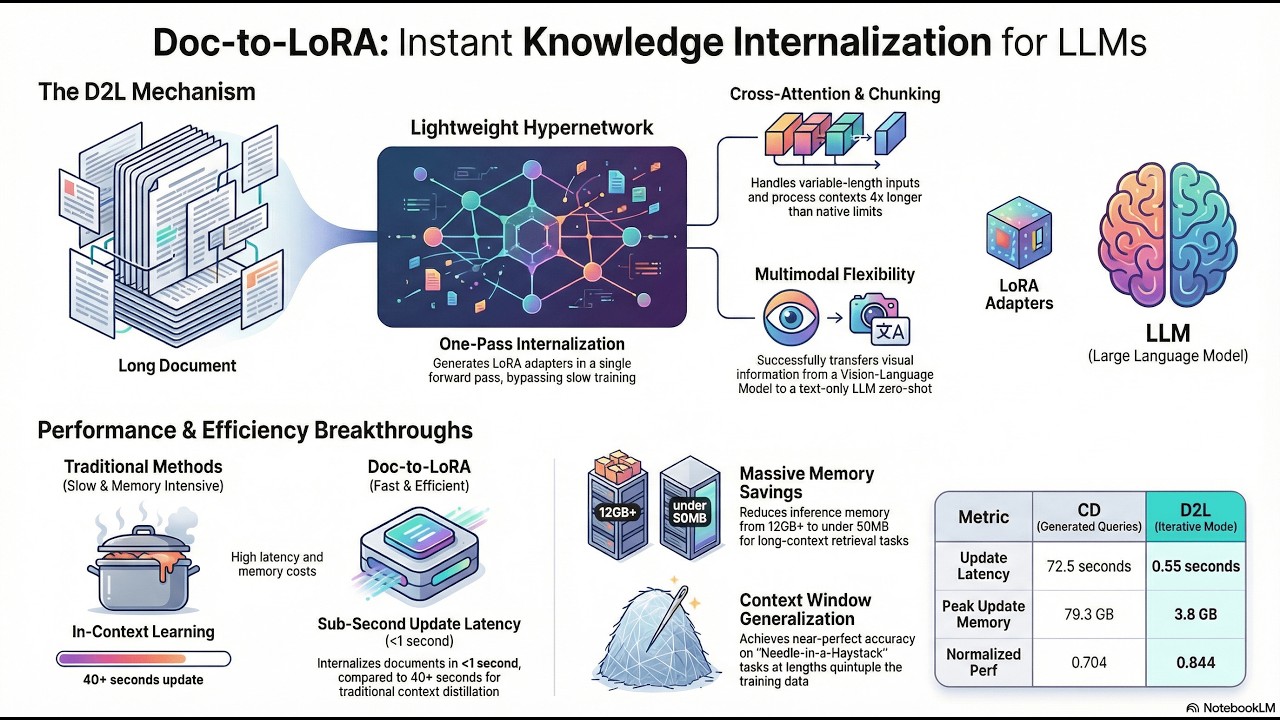

This document introduces Doc-to-LoRA (D2L), a method for efficiently internalizing information from long input sequences into Large Language Models (LLMs). Traditional In-Context Learning (ICL) suffers from quadratic attention costs, which lead to high memory usage and latency. Context Distillation (CD) is slow and memory-intensive, which makes per-prompt distillation impractical.

D2L addresses these limitations with a lightweight hypernetwork that meta-learns to perform approximate CD in a single forward pass. Upon receiving a context, D2L immediately generates a context-specific LoRA adapter for a target LLM, so the model can answer subsequent queries without re-consuming the original long input. This reduces inference latency and KV-cache memory consumption.

D2L uses a Perceiver architecture with a chunking mechanism to handle variable and extremely long inputs. It generalizes to contexts four times longer than the target LLM's native window, even when trained on much shorter sequences. Empirical results show that D2L outperforms standard CD under limited computational resources, with better internalization efficiency and lower update latency and memory usage, which supports rapid LLM adaptation and knowledge updates.

#DocToLoRA #LLM #ContextLearning #Hypernetwork #LoRAAdapters #ContextDistillation #AI #MachineLearning #Efficiency #LongContext

Donations: / luxak

Paper: https://arxiv.org/abs/2602.15902

Subscribe: https://t.me/arxivpaper

Created with NotebookLM
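The context-to-adapter idea described above can be illustrated with a toy sketch. This is not the paper's implementation: `ToyHyperNet`, its hashed bag-of-tokens features, the mean pooling over chunks, and the untrained random projection are all hypothetical stand-ins for D2L's meta-learned Perceiver hypernetwork. Only the final step, adding a scaled low-rank update `(alpha / r) * B @ A` to a frozen weight matrix, is the standard LoRA formulation.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def chunk(tokens, size):
    """Split a token sequence into consecutive chunks of at most `size`."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]


class ToyHyperNet:
    """Hypothetical stand-in for a hypernetwork: context tokens -> LoRA (A, B).

    Assumption: the real D2L hypernetwork is meta-learned; here the mapping
    is a fixed random projection, used only to show the data flow.
    """

    def __init__(self, d_in, d_out, rank, feat_dim=8, chunk_size=4, seed=0):
        rng = random.Random(seed)
        self.d_in, self.d_out, self.rank = d_in, d_out, rank
        self.feat_dim, self.chunk_size = feat_dim, chunk_size
        n_params = rank * d_in + d_out * rank  # entries of A plus entries of B
        self.proj = [[rng.gauss(0.0, 0.1) for _ in range(feat_dim)]
                     for _ in range(n_params)]

    def encode_chunk(self, ids):
        # Assumption: hashed bag-of-tokens features instead of a Perceiver.
        feat = [0.0] * self.feat_dim
        for t in ids:
            feat[t % self.feat_dim] += 1.0 / len(ids)
        return feat

    def __call__(self, token_ids):
        # Encode each chunk independently, then mean-pool across chunks
        # (assumption), so the input length can vary freely.
        feats = [self.encode_chunk(c) for c in chunk(token_ids, self.chunk_size)]
        pooled = [sum(col) / len(feats) for col in zip(*feats)]
        flat = matvec(self.proj, pooled)
        r, di, do = self.rank, self.d_in, self.d_out
        A = [flat[i * di:(i + 1) * di] for i in range(r)]             # r x d_in
        off = r * di
        B = [flat[off + i * r:off + (i + 1) * r] for i in range(do)]  # d_out x r
        return A, B


def lora_forward(W, A, B, x, alpha=1.0):
    """Standard LoRA forward pass: W x + (alpha / r) * B (A x)."""
    r = len(A)
    base = matvec(W, x)
    low_rank = matvec(B, matvec(A, x))
    return [b + (alpha / r) * l for b, l in zip(base, low_rank)]


# One pass over the context yields an adapter; later queries use only the
# adapter and never see the context tokens again.
hyper = ToyHyperNet(d_in=3, d_out=2, rank=2)
context_ids = list(range(10))   # stands in for a long document
A, B = hyper(context_ids)
W = [[1, 0, 0], [0, 1, 0]]      # frozen base weight, d_out x d_in
x = [1, 2, 3]                   # query activation
y = lora_forward(W, A, B, x)
```

The point of the sketch is the cost profile: the context is consumed once to produce `A` and `B`, whose size depends only on the layer shape and the rank, not on the context length.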