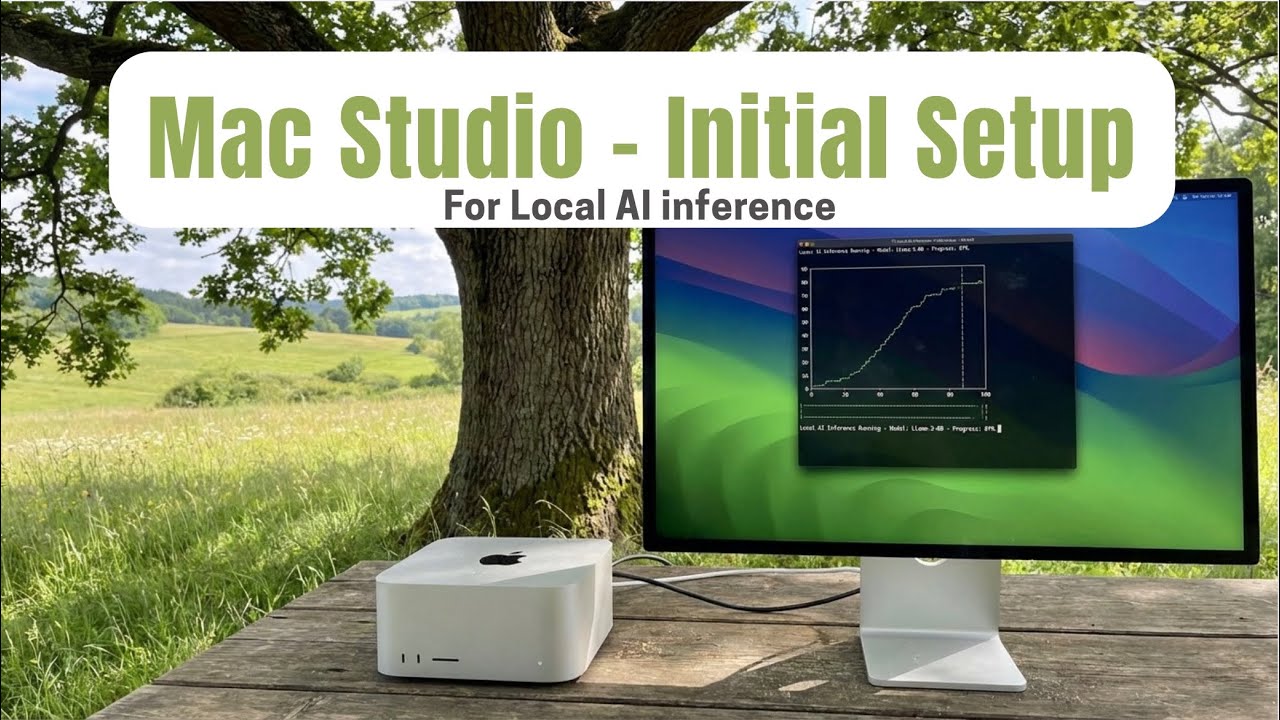

Setting Up My Mac Studio for Local AI (Models, UPS & Gotchas)

I ordered a custom Mac Studio for serious local AI work, and Apple delivered it a week early, before I even had a UPS ready. In this video, I walk through my full setup: power backup planning, the sneaky 16A vs 6A plug issue, the first Ollama models I installed, and real performance numbers. If you're planning to run local LLMs on a Mac Studio, this will save you time (and a bit of pain).

#### ⏱️ Timestamps / Chapters

- 00:00 – Apple delivers the Mac Studio early (before the UPS!)
- 00:28 – Why I needed a serious local AI box
- 00:39 – Ordering a custom Mac Studio online (no discounts, long ETA)
- 01:13 – UPS requirements & using AI to shortlist the right unit
- 01:35 – The 16A vs 6A outlet "gotcha" and the adapter fix
- 01:53 – Initial setup: installing Ollama + 5 local AI models
- 02:11 – gpt-oss 20B/120B, Qwen 32B, Gemma 27B, DeepSeek 70B performance
- 02:29 – Benchmark caveats & what's coming in the next video (network exposure)

#### About this video

This video is the second episode in my Mac Studio AI setup series. I share the practical, non-glam side of getting a custom Mac Studio ready for local LLM workloads: choosing a UPS with the right runtime and auto-shutdown support, dealing with incompatible power plugs, and then installing Ollama plus several large open-source models. I also show actual performance numbers for gpt-oss 20B/120B, Qwen 32B, Gemma 27B, and DeepSeek 70B on my setup.

In the next episode, I'll go deeper into how I expose my Ollama setup over the network (and potentially the internet), including options, trade-offs, and conflicts you should watch out for.

---

- UPS: https://www.amazon.in/dp/B00B2LA7JK?r...
- 6 to 16 Amp plug: https://www.amazon.in/dp/B07F9PZMKL?r...

#MacStudio #LocalAI #LLM #Ollama #AIEngineering #AIDevelopment #HomeLab #MacForDevelopers #AIWorkbench #OpenSourceAI #DeepSeek #Qwen #Gemma #AIBenchmarks #UpsBackup