3DBodyTech2025 - Dynamic Body-Garment Simulation to Characterise Wearable Activity

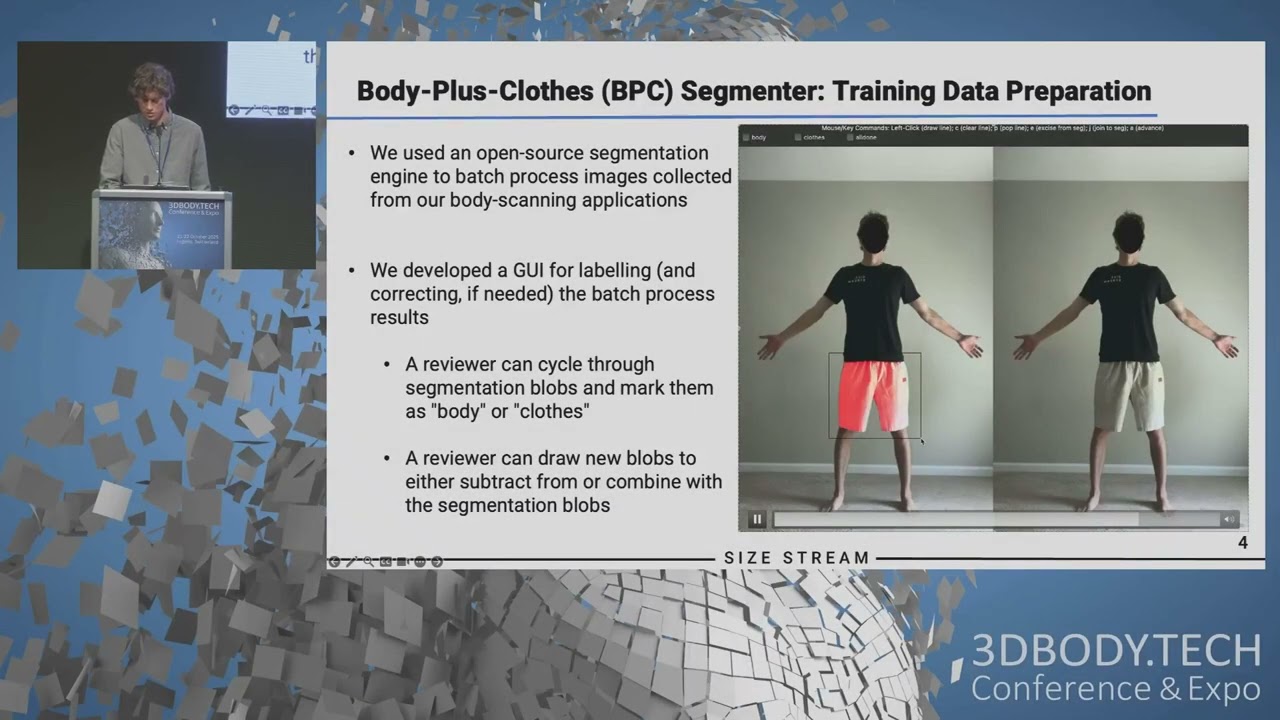

Dynamic Body-Garment Simulation to Characterise Wearable Activity Recognition Performance - 25.40. Extract of the presentation by Lars Ole HAEUSLER, University of Freiburg, at 3DBODY.TECH 2025. Full paper at https://proc.3dbody.tech/abstracts/20... Full video available in the proceedings.

Abstract: We propose an application of 4D modelling of the human body and clothing to estimate the performance of wearable inertial sensors. While inertial sensors, e.g. accelerometers and gyroscopes, can be embedded into garments to capture the activities of daily living (ADLs) of their wearer, clothes may move differently from the human body and have different orientations, potentially reducing human activity recognition (HAR) performance. In practice, it is challenging to estimate all error conditions that garments may introduce to wearable sensors, due to their varying body fit and sensor positioning. Thus, empirical evaluations provide limited insight into HAR performance in uncontrolled conditions. Recent scientific advances in 4D surface modelling may offer a novel simulation approach to estimate HAR performance for cloth-embedded wearable inertial sensors. Approaches so far have primarily used body surface models to simulate body-attached inertial sensors and thus did not account for the additional movement dynamics of garments. For example, a smartphone captures different signal patterns for activities such as walking, sitting, or jumping when placed in a loose-fitting trouser pocket rather than a tight-fitting belt pocket. The goal of this work is to combine 4D garment and body surface models with inertial sensor models in a joint simulation approach that delivers HAR performance estimates ahead of any physical implementation of the wearable system. We use textual ADL descriptions as specifications for a generative human motion model to obtain motion patterns.
Subsequently, we use 3D Skinned Multi-Person Linear (SMPL) models parametrised for different body sizes to represent full volumetric body and garment motion. We place virtual inertial measurement units (vIMUs) at well-known positions on the body and garment models to demonstrate how the effect of garments can be analysed. By simulating vIMUs in selected ADLs, we synthesise acceleration and angular velocity data, which are used to train a HAR model. To evaluate our approach, we generate synthetic inertial sensor data with and without garment simulation for various garment types and body sizes. We then examine the impact on HAR accuracy for specific ADLs in a public dataset, comparing performance between body and garment sensor mounts, as well as the effects of garment type, size, and the performed activity. Our results show that inertial sensor synthesis is clearly affected by clothing, in particular for loose-fitting garments. We detail HAR performance differences between garment and body mounts depending on the ADL, body-garment fit, and vIMU positioning. Our approach may offer an alternative way to train robust HAR models with synthetic sensor data and to deal with clothing-related artefacts.

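The vIMU synthesis step described in the abstract can be illustrated with a minimal sketch, which is not the authors' implementation. It assumes that the simulation exports each vIMU as a per-frame world position and sensor-to-world rotation matrix of a body or garment mesh vertex. It also assumes a z-up world frame with g = 9.81 m/s², and it approximates acceleration and angular velocity by central finite differences. The function names (`synthesise_vimu`, `log_so3`, `rot_x`, `rot_z`, `rms`) and the sway and bounce parameters of the "garment" trajectory are hypothetical illustrations. Sensor noise, bias, and the cloth simulation itself are omitted.

```python
import math

# Assumption: z-up world frame, so gravity points along -z (m/s^2).
GRAVITY = (0.0, 0.0, -9.81)


def rot_x(angle):
    """3x3 rotation matrix about the x-axis (nested lists)."""
    c, s = math.cos(angle), math.sin(angle)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_z(angle):
    """3x3 rotation matrix about the z-axis (nested lists)."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def mat_t_vec(r, v):
    """Compute r^T v, i.e. map a world-frame vector into the sensor frame."""
    return tuple(sum(r[k][i] * v[k] for k in range(3)) for i in range(3))


def mat_t_mat(a, b):
    """Compute a^T b for 3x3 matrices."""
    return [[sum(a[k][i] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def log_so3(r):
    """Rotation vector (unit axis * angle) of rotation matrix r.

    Assumes the angle is well below pi, which holds for the small
    frame-to-frame rotations seen at typical IMU sampling rates.
    """
    cos_theta = max(-1.0, min(1.0, (r[0][0] + r[1][1] + r[2][2] - 1.0) / 2.0))
    theta = math.acos(cos_theta)
    skew = (r[2][1] - r[1][2], r[0][2] - r[2][0], r[1][0] - r[0][1])
    if theta < 1e-8:
        # Small-angle limit: the rotation vector is approx. vee(R - R^T) / 2.
        return tuple(0.5 * s for s in skew)
    scale = theta / (2.0 * math.sin(theta))
    return tuple(scale * s for s in skew)


def synthesise_vimu(positions, rotations, fs):
    """Synthesise accelerometer and gyroscope samples for one virtual IMU.

    positions: per-frame (x, y, z) sensor positions in the world frame [m]
    rotations: per-frame 3x3 sensor-to-world rotation matrices
    fs:        sampling rate [Hz]
    Returns (acc, gyro) for frames 1 .. N-2 (central differences).
    """
    dt = 1.0 / fs
    acc, gyro = [], []
    for t in range(1, len(positions) - 1):
        # World-frame linear acceleration from a second-order central difference.
        a_world = [(positions[t + 1][i] - 2.0 * positions[t][i]
                    + positions[t - 1][i]) / dt ** 2 for i in range(3)]
        # An accelerometer senses specific force (a - g) in its own frame.
        acc.append(mat_t_vec(rotations[t], [a_world[i] - GRAVITY[i] for i in range(3)]))
        # Sensor-frame angular velocity from R_{t-1}^T R_{t+1} over 2 * dt.
        rel = mat_t_mat(rotations[t - 1], rotations[t + 1])
        gyro.append(tuple(w / (2.0 * dt) for w in log_so3(rel)))
    return acc, gyro


def rms(values):
    """Root mean square of a sequence of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))


if __name__ == "__main__":
    fs, n = 100.0, 101
    ts = [k / fs for k in range(n)]
    # Body mount: hypothetical point moving forward at 1.2 m/s, no rotation.
    body_pos = [(1.2 * t, 0.0, 1.0) for t in ts]
    body_rot = [rot_z(0.0)] * n
    # Garment mount: same point plus a hypothetical 2 Hz sway of +/-0.2 rad
    # about x and a 1 cm vertical bounce, standing in for loose fabric.
    sway = [math.sin(2.0 * math.pi * 2.0 * t) for t in ts]
    garm_pos = [(x, y, z + 0.01 * s) for (x, y, z), s in zip(body_pos, sway)]
    garm_rot = [rot_x(0.2 * s) for s in sway]
    for name, pos, rot in (("body", body_pos, body_rot), ("garment", garm_pos, garm_rot)):
        acc, gyro = synthesise_vimu(pos, rot, fs)
        print(name, "gyro-x RMS [rad/s]:", round(rms([g[0] for g in gyro]), 3),
              "acc-z RMS [m/s^2]:", round(rms([a[2] for a in acc]), 3))
```

Running the script contrasts a rigid body mount with a swaying garment mount. The garment vIMU reports angular velocity about x and a fluctuating vertical acceleration that the body mount does not. This illustrates, in a highly simplified form, the kind of clothing-related artefact that synthetic training data would need to capture.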