Text-to-LoRA: Zero-Shot LoRA Generation in a Single Forward Pass скачать в хорошем качестве

Повторяем попытку...

Скачать видео с ютуб по ссылке или смотреть без блокировок на сайте: Text-to-LoRA: Zero-Shot LoRA Generation in a Single Forward Pass в качестве 4k

У нас вы можете посмотреть бесплатно Text-to-LoRA: Zero-Shot LoRA Generation in a Single Forward Pass или скачать в максимальном доступном качестве, видео которое было загружено на ютуб. Для загрузки выберите вариант из формы ниже:

-

Информация по загрузке:

Скачать mp3 с ютуба отдельным файлом. Бесплатный рингтон Text-to-LoRA: Zero-Shot LoRA Generation in a Single Forward Pass в формате MP3:

Если кнопки скачивания не

загрузились

НАЖМИТЕ ЗДЕСЬ или обновите страницу

Если возникают проблемы со скачиванием видео, пожалуйста напишите в поддержку по адресу внизу

страницы.

Спасибо за использование сервиса ClipSaver.ru

Text-to-LoRA: Zero-Shot LoRA Generation in a Single Forward Pass

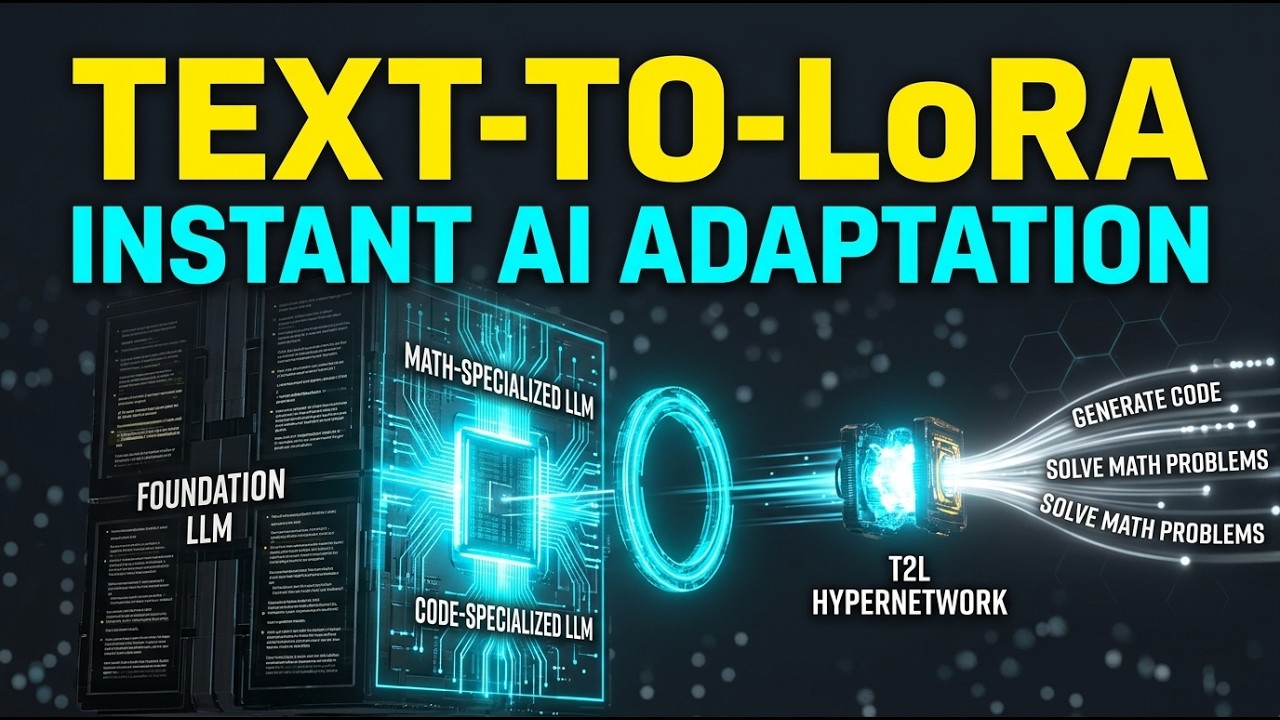

Traditional LLM fine-tuning is resource-heavy. We explore Text-to-LoRA (T2L), a fundamental breakthrough that instantly adapts foundation models using only natural language descriptions—with minimal compute. The Deep Dive While foundation models are incredibly versatile, adapting them to specific tasks typically requires careful dataset curation and expensive, hyperparameter-sensitive fine-tuning processes like Low-Rank Adaptation (LoRA). To solve this bottleneck, researchers have introduced Text-to-LoRA (T2L), a novel hypernetwork architecture capable of generating task-specific LoRA adapters on the fly. Instead of updating weights through lengthy backpropagation, T2L relies solely on a natural language description of the target task to decode new adaptation matrices in a single, inexpensive forward pass. From a compute and architecture perspective, this represents a significant shift. By training T2L via multi-task supervised fine-tuning (SFT) or reconstruction loss across hundreds of datasets, the model learns the underlying adaptation mechanisms shared across different tasks. At inference time, T2L acts as a hypernetwork that projects natural language instructions into the low-rank weight spaces (A and B matrices) of the base transformer. Notably, this drastically reduces adaptation overhead; ad-hoc FLOPs analyses demonstrate that T2L requires substantially less compute than standard few-shot in-context learning, saving immense processing power from the very first query. The real-world implications of this research are substantial. Not only does T2L successfully compress hundreds of individual LoRA instances into a single model, but it also demonstrates an impressive ability to zero-shot generalize to entirely unseen tasks based on intuitive text instructions. This indirect encoding approach provides a critical step toward democratizing the specialization of large language models, allowing researchers and practitioners to mold AI behavior instantly without requiring vast datasets or specialized hardware. Academic Integrity Section Disclaimer: This episode provides a high-level summary and analysis of peer-reviewed research for educational purposes. For complete methodologies, mathematical proofs, and peer-reviewed accuracy, viewers are strongly encouraged to consult the original academic paper linked below. Original Research Paper: https://arxiv.org/pdf/2506.06105 Official Code Repository: https://github.com/SakanaAI/text-to-lora #ScienceResearch #SciPulse #MachineLearning #ArtificialIntelligence #LargeLanguageModels #LoRA #NeuralNetworks #Hypernetworks #ComputeEfficiency #ZeroShotLearning #AIResearch #SakanaAI

![Пожалуй, главное заблуждение об электричестве [Veritasium]](https://imager.clipsaver.ru/6Hv2GLtnf2c/max.jpg)