Demystifying Queries, Keys, and Values in self-attention - Deep Learning (Bibek Chalise)

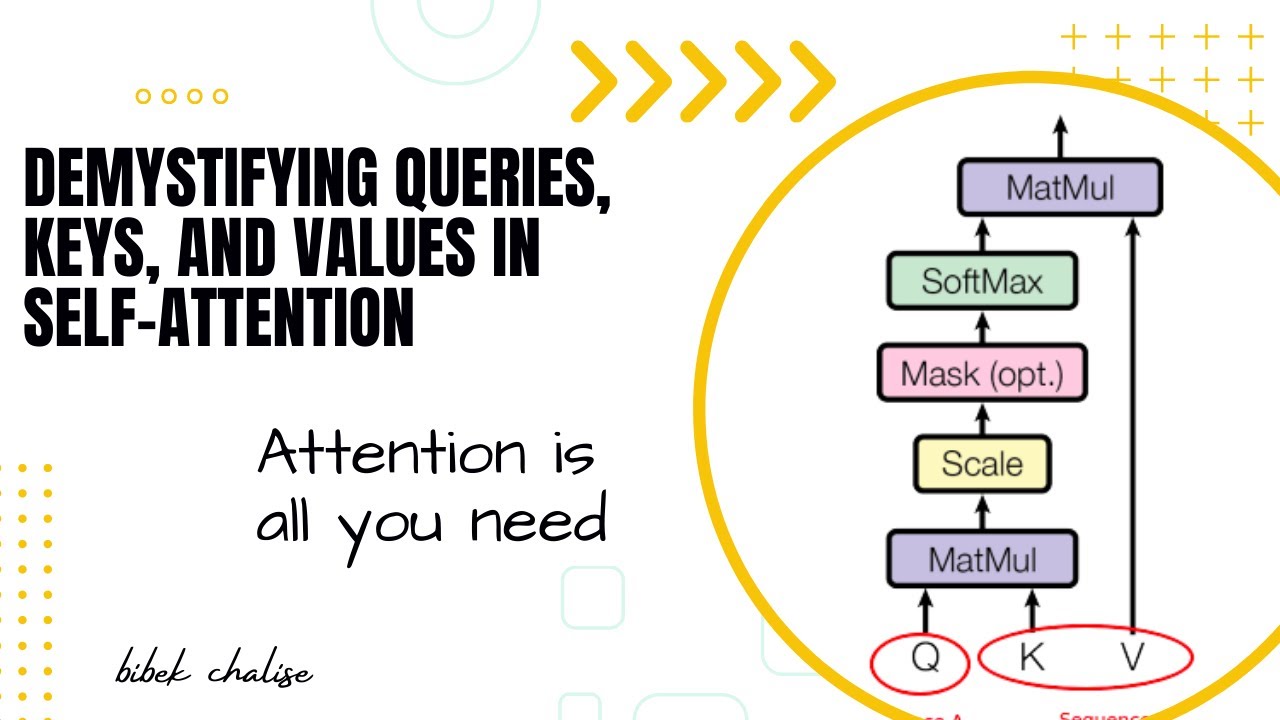

In this video, we look at the inner workings of self-attention, focusing on its three core components: queries, keys, and values. Self-attention has reshaped natural language processing and machine learning, enabling advances in tasks such as machine translation, text summarization, and sentiment analysis.

Each token in the input sequence is transformed into a query, a key, and a value. The query expresses what that token is looking for in the rest of the sequence. The key is what the token exposes for other tokens' queries to be matched against, which forms the basis of the attention computation. The value carries the contextual information that is passed on when the token is attended to.

Through intuitive explanations and illustrative examples, we walk step by step through computing attention weights by comparing queries with keys, and show how those weights control the aggregation of values into contextually informed token representations.

Whether you are a machine learning enthusiast, a researcher, or simply curious about attention mechanisms, this video aims to give you a solid understanding of queries, keys, and values in self-attention.
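The steps described above (compare queries with keys, turn the scores into attention weights, then use the weights to aggregate values) can be sketched in a few lines of plain Python. This is a minimal illustration and not code from the video: the function names, the toy input `x`, and the projection matrices `w_q`, `w_k`, and `w_v` are all made up for the example, and the division by the square root of the key dimension is assumed from the standard scaled dot-product formulation. It covers a single attention head with no masking.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(scores):
    """Numerically stable softmax over one list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Single-head self-attention (hypothetical helper for illustration).

    x:   n_tokens x d_model input embeddings
    w_q: d_model x d_k query projection
    w_k: d_model x d_k key projection
    w_v: d_model x d_v value projection
    Returns (outputs, weights).
    """
    q = matmul(x, w_q)  # one query per token
    k = matmul(x, w_k)  # one key per token
    v = matmul(x, w_v)  # one value per token

    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]  # transpose keys to d_k x n_tokens

    # Compare every query with every key (dot product), then scale.
    # The sqrt(d_k) scaling is an assumption taken from the usual formulation.
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, k_t)]

    # Each row of weights says how much one token attends to every token.
    weights = [softmax(row) for row in scores]

    # Each output is a weighted sum of the value vectors.
    outputs = matmul(weights, v)
    return outputs, weights


# Toy data: three tokens with two-dimensional embeddings (made-up numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_q = [[1.0, 0.0], [0.0, 1.0]]
w_k = [[1.0, 0.0], [0.0, 1.0]]
w_v = [[1.0, 2.0], [3.0, 4.0]]

outputs, weights = self_attention(x, w_q, w_k, w_v)
for token_index, row in enumerate(weights):
    print(token_index, [round(w, 3) for w in row])
```

Each printed row is one token's attention distribution over the whole sequence, so it sums to 1. In a trained model the projection matrices are learned rather than hand-written, and several heads usually run in parallel.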
#deeplearning #transformers #artificialintelligence #machinelearning #ai #datascience #datapreprocessing #technology