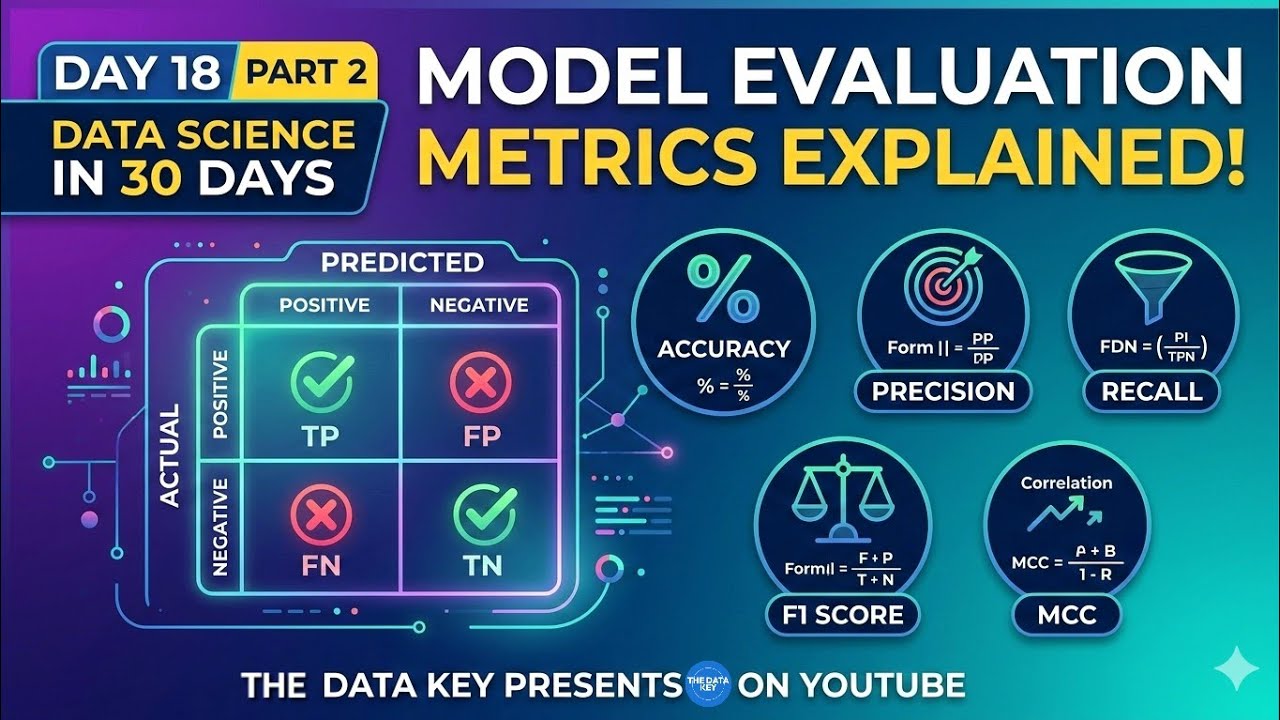

Model Evaluation Metrics Explained | Accuracy, Precision, Recall & F1 Score | Day 18 Part 2

🚀 Welcome to Day 18 – Part 2 of the series “Data Science in 30 Days” on The Data Key.

In this video, we dive deep into Model Evaluation Metrics, one of the most important concepts in Machine Learning and Data Science. After training a machine learning model, it is crucial to evaluate how well the model performs on unseen data. Many beginners rely only on accuracy, but in real-world datasets, especially imbalanced datasets, accuracy alone can be misleading.

----------------------------------------------------------------------------------

In this video, we understand the most important classification evaluation metrics used by data scientists and machine learning engineers:

✔ Confusion Matrix
✔ Accuracy
✔ Precision
✔ Recall (Sensitivity)
✔ F1 Score
✔ Matthews Correlation Coefficient (MCC)

You will learn:

• What each metric actually measures
• How these metrics are calculated
• The intuition behind each metric
• When to use precision vs recall
• Why F1 Score is important for imbalanced datasets
• Why MCC is considered one of the best evaluation metrics

Understanding these metrics is extremely important for Machine Learning projects, Data Science interviews, and real-world model evaluation. This video will help you build a strong conceptual foundation for evaluating classification models.

----------------------------------------------------------------------------------

📚 Learning Resources:

If you want to explore these concepts further, check these resources:

🔸️ Confusion Matrix: https://scikit-learn.org/stable/modul...
🔸️ Precision, Recall and F1 Score:
   🔹️ https://scikit-learn.org/stable/modul...
   🔹️ https://scikit-learn.org/stable/modul...
   🔹️ https://scikit-learn.org/stable/modul...
🔸️ Matthews Correlation Coefficient (MCC): https://scikit-learn.org/stable/modul...
🔸️ Google Machine Learning Crash Course: https://developers.google.com/machine...
🔸️ Detailed Explanation of Precision & Recall: https://towardsdatascience.com/precis...
🔸️ Understanding Evaluation Metrics: https://machinelearningmastery.com/cl...

----------------------------------------------------------------------------------

⏱️ Timestamps:

00:00 Accuracy Illusion
01:46 The Toolkit: 4 Ways to Be Right/Wrong
03:30 The Confusion Matrix
04:08 The Balancing Act: Precision vs. Recall
06:07 The Solution: The F_1 Score
07:40 The Takeaway: Choosing Your Metric
08:34 When Popular Metrics Fail
09:53 A More Truthful Score: The MCC
12:30 Beyond the Hype: Key Takeaways

----------------------------------------------------------------------------------

📌 Series Playlist:

📊 Data Science in 30 Days – Complete Playlist • Data Science in 30 Days | Data Science Ful...

This series is designed to help beginners learn Data Science step-by-step from scratch.

----------------------------------------------------------------------------------

#datascience #datasciencecourse #machinelearning #machinelearningfullcourse #foryou #modelevaluation #f1 #aivideo #precision #thedatakey #confusionmatrix #mcc #metrics #google #newvideo
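
The description's claim that accuracy alone can mislead on imbalanced data can be made concrete with a small sketch. The code below is not taken from the video. It is a minimal illustration that computes the listed metrics from the four confusion-matrix counts using their standard definitions. The function name `classification_metrics`, the made-up counts (10 positives among 1000 samples), and the choice to return 0.0 when a denominator is zero are all assumptions made for this example.

```python
from math import sqrt


def classification_metrics(tp, fp, fn, tn):
    """Compute common binary-classification metrics from confusion-matrix counts.

    tp/fp/fn/tn are the true-positive, false-positive, false-negative and
    true-negative counts. Undefined ratios (zero denominator) are reported
    as 0.0, which is a convention chosen for this sketch.
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    # MCC uses all four cells of the confusion matrix.
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# Hypothetical imbalanced dataset: 10 positives, 990 negatives.
# Model A predicts "negative" for every sample.
always_negative = classification_metrics(tp=0, fp=0, fn=10, tn=990)
# Model B finds 8 of the 10 positives but raises 20 false alarms.
detector = classification_metrics(tp=8, fp=20, fn=2, tn=970)

for name, metrics in [("always negative", always_negative),
                      ("detector", detector)]:
    summary = ", ".join(f"{k}={v:.3f}" for k, v in metrics.items())
    print(f"{name}: {summary}")
```

In this made-up scenario the always-negative model scores higher on accuracy than the detector, yet its recall, F1 and MCC are all zero because it never finds a positive case. That gap is the reason to look beyond accuracy when classes are imbalanced.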