PUM2023W 13 Ensemble Models

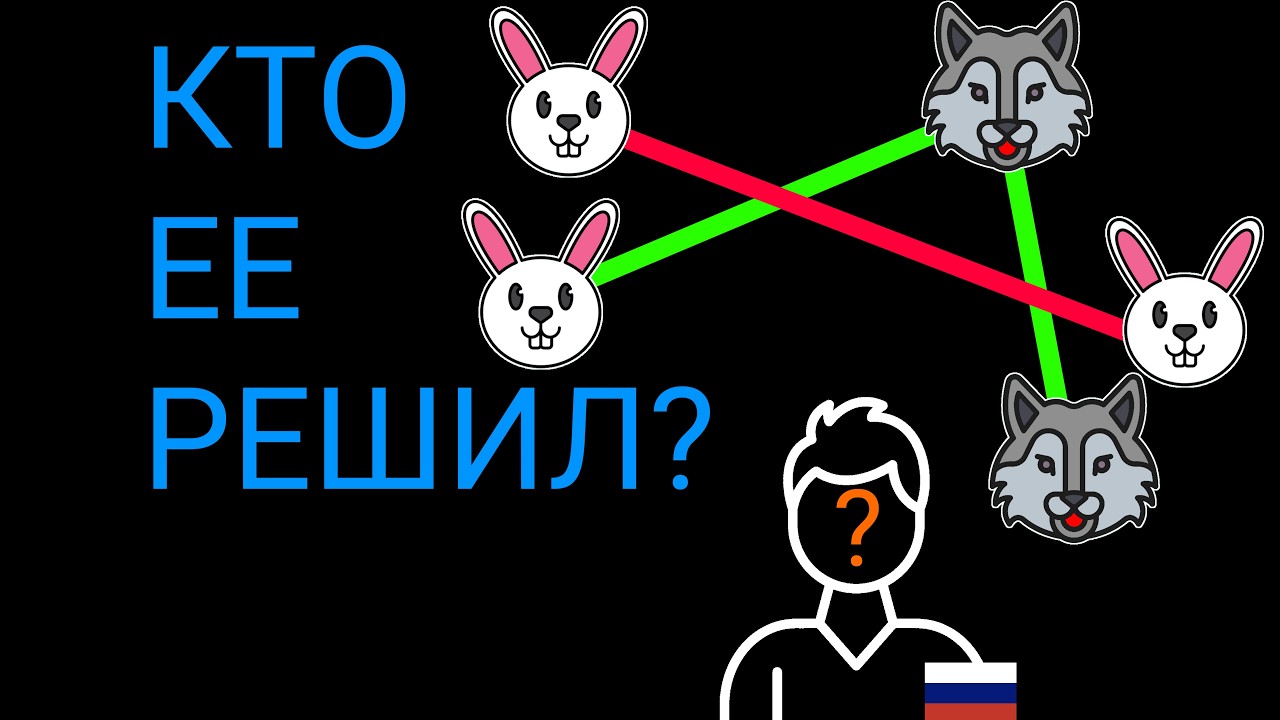

Ensemble Models

Bias, Variance, and Model Complexity

Model quality is measured by comparing predictions with reality on a held-out test dataset. As model complexity increases, error on the training set keeps falling, while error on the test set follows a U-shaped curve: it drops at first, then rises again once the model starts to overfit. We aim for the optimal point between:

- Bias: systematic error from a model that is too simple (underfitting).
- Variance: sensitivity to small fluctuations in the training data (overfitting).

The Wisdom of Crowds

Ensemble learning is based on the idea that the combined answer of many is often more accurate than that of any single individual.

- Galton’s Ox: the average of about 800 guesses was within 0.1% of the actual weight.
- Netflix Prize: the top teams won by combining their models, illustrating that a blend of multiple models can outperform the single best one.

What is Ensemble Learning?

Ensemble learning combines multiple weak learners (base models) to create a strong learner.

- Homogeneous: all base models use the same algorithm (e.g., all Decision Trees).
- Heterogeneous: base models use different algorithms (e.g., a mix of Neural Networks and Logistic Regression).

Core Strategies: Parallel vs. Sequential

- Parallel (Bagging): mainly reduces variance. Models are trained independently of one another, so they can be trained at the same time.
- Sequential (Boosting): mainly reduces bias. Models are trained one after another, each trying to fix the errors left by its predecessors.

Parallel Methods: Bagging

Bagging (Bootstrap Aggregating) uses bootstrapping, that is, sampling the data with replacement, to create multiple simulated datasets.

The Bagging Procedure:

1. Create multiple bootstrap samples.
2. Train a weak learner on each.
3. Aggregate the results:
   - Regression: take the average.
   - Classification: use Hard Voting (majority rule) or Soft Voting (averaging probabilities).

Random Forests

A Random Forest is Bagging applied to deep decision trees. It adds extra randomness by letting each tree consider only a random subset of features. This prevents the trees from being too similar to one another and makes the model more robust, for example to missing data.
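The bagging procedure described above (bootstrap samples, one weak learner per sample, then hard or soft voting) can be sketched in a few lines of plain Python. This is only an illustrative sketch, not the lecture's own code: the toy one-feature dataset, the decision-stump learner, and all function names (`fit_stump`, `bagging_fit`, `hard_vote`, `soft_vote`) are assumptions made for the example.

```python
import random

def fit_stump(points):
    """Fit a one-feature decision stump on (x, y) pairs with y in {0, 1}.

    Returns (threshold, p_left, p_right), where p_left / p_right is the
    fraction of class-1 training points on each side of the threshold.
    """
    xs = sorted({x for x, _ in points})
    # Candidate thresholds: midpoints between consecutive distinct x values.
    candidates = [(a + b) / 2 for a, b in zip(xs, xs[1:])] or [xs[0]]
    best = None
    for t in candidates:
        left = [y for x, y in points if x <= t]
        right = [y for x, y in points if x > t]
        # Errors made if each side predicts its own majority class.
        errors = sum(min(sum(s), len(s) - sum(s)) for s in (left, right))
        if best is None or errors < best[0]:
            p_left = sum(left) / len(left) if left else 0.5
            p_right = sum(right) / len(right) if right else 0.5
            best = (errors, t, p_left, p_right)
    return best[1:]

def predict_proba(stump, x):
    """Estimated probability of class 1 for a single input x."""
    threshold, p_left, p_right = stump
    return p_left if x <= threshold else p_right

def bagging_fit(points, n_models, rng):
    """Train one stump per bootstrap sample (sampling with replacement)."""
    models = []
    for _ in range(n_models):
        sample = [rng.choice(points) for _ in points]
        models.append(fit_stump(sample))
    return models

def hard_vote(models, x):
    """Majority rule over the models' class predictions (ties go to class 1)."""
    votes = sum(predict_proba(m, x) >= 0.5 for m in models)
    return int(2 * votes >= len(models))

def soft_vote(models, x):
    """Average the models' class-1 probabilities, then threshold at 0.5."""
    mean_p = sum(predict_proba(m, x) for m in models) / len(models)
    return int(mean_p >= 0.5)

# Hypothetical toy data: class 0 centred at 0.0, class 1 centred at 3.0.
rng = random.Random(0)
data = [(rng.gauss(0.0, 1.0), 0) for _ in range(50)]
data += [(rng.gauss(3.0, 1.0), 1) for _ in range(50)]

models = bagging_fit(data, n_models=25, rng=rng)
print(hard_vote(models, -1.0), soft_vote(models, 4.0))
```

A Random Forest follows the same pattern with deep trees instead of stumps and with the extra step of restricting each tree to a random subset of features; with only one feature, this toy example cannot show that step.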
Sequential Methods: Boosting

Boosting starts with simple models (such as "stumps", decision trees with a single split) and improves on them iteratively.

AdaBoost (Adaptive Boosting)

AdaBoost increases the "weight" of misclassified points, so the next model in the sequence is forced to focus on the mistakes made by the previous ones.

Gradient Boosting and XGBoost

Instead of changing weights, each new model predicts the errors (residuals) of the ensemble built so far.

- XGBoost (Extreme Gradient Boosting): a highly optimized, fast implementation of this method. It is often among the top-performing algorithms in data science competitions.

Stacking

Stacking uses a meta-model to combine different types of algorithms.

1. Train several different base models (e.g., SVM, KNN, Tree).
2. Use their predictions as input features for a final model.

The meta-model (often a Logistic Regression) learns which base model to trust most for specific types of data.
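To make the residual-fitting idea behind gradient boosting concrete, here is a minimal sketch for regression with squared error, where each round fits a stump to the current residuals and adds a damped copy of it to the ensemble. It is an assumption-laden illustration, not the lecture's code and not how XGBoost is implemented: the sine-curve data, the `learning_rate` value, and every function name are hypothetical, and the stump fitter assumes at least two distinct x values.

```python
import math

def fit_regression_stump(xs, targets):
    """Least-squares stump: one threshold, predicting the mean on each side."""
    distinct = sorted(set(xs))
    candidates = [(a + b) / 2 for a, b in zip(distinct, distinct[1:])]
    best = None
    for t in candidates:
        left = [r for x, r in zip(xs, targets) if x <= t]
        right = [r for x, r in zip(xs, targets) if x > t]
        mean_l = sum(left) / len(left)
        mean_r = sum(right) / len(right)
        sse = sum((r - mean_l) ** 2 for r in left)
        sse += sum((r - mean_r) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, mean_l, mean_r)
    return best[1:]

def stump_predict(stump, x):
    threshold, mean_l, mean_r = stump
    return mean_l if x <= threshold else mean_r

def gradient_boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Start from the mean of ys, then repeatedly fit a stump to the residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        # Residuals of the ensemble built so far.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_regression_stump(xs, residuals)
        stumps.append(stump)
        # Add a damped correction from the new stump to every prediction.
        preds = [p + learning_rate * stump_predict(stump, x)
                 for p, x in zip(preds, xs)]
    return base, stumps, learning_rate

def boosted_predict(model, x):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(stump_predict(s, x) for s in stumps)

def mse(model, xs, ys):
    return sum((y - boosted_predict(model, x)) ** 2
               for x, y in zip(xs, ys)) / len(xs)

# Hypothetical toy data: a noiseless sine curve on [0, 4).
xs = [i / 10 for i in range(40)]
ys = [math.sin(x) for x in xs]

model_few = gradient_boost(xs, ys, n_rounds=5)
model_many = gradient_boost(xs, ys, n_rounds=100)
print(mse(model_few, xs, ys), mse(model_many, xs, ys))
```

With more rounds the training error keeps shrinking, which is the bias reduction described above; on real data the number of rounds and the learning rate would normally be chosen on a validation set to avoid overfitting.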