SLM vs RAG - Choosing Your AI Approach

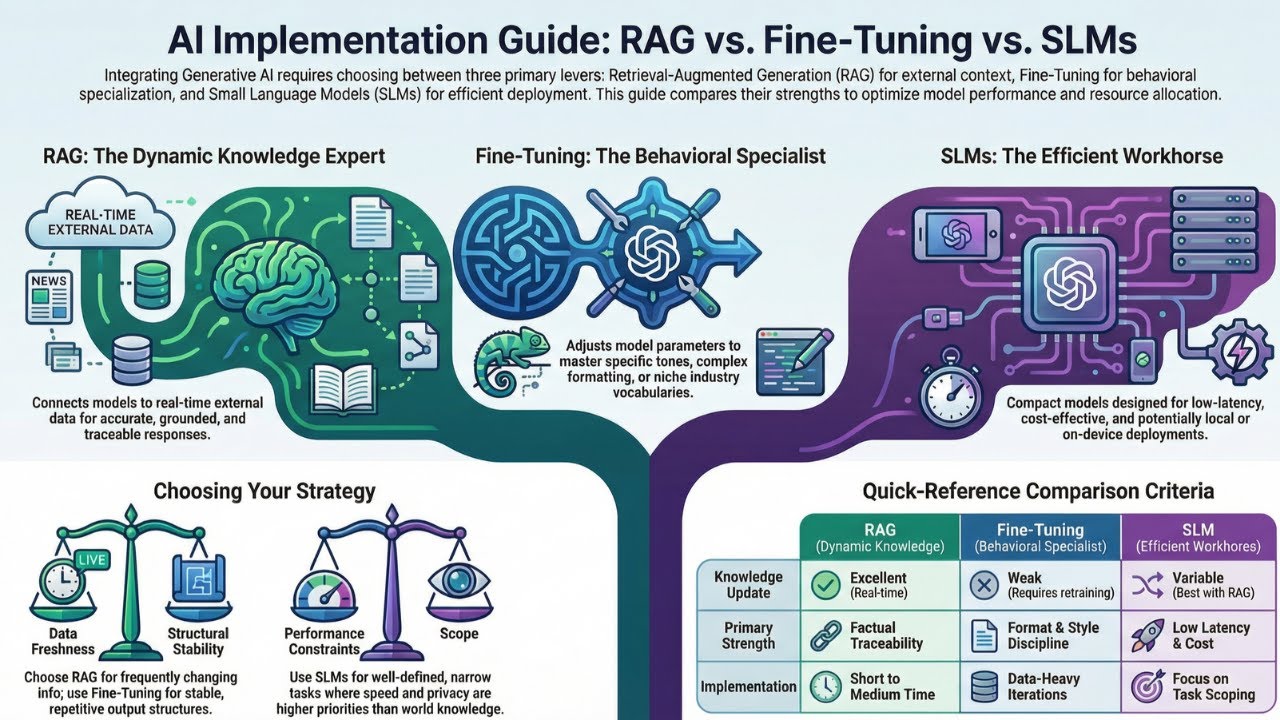

Bigger isn’t always better. In the race for AI efficiency, “Small” is the new “Smart.” 🚀

Are you struggling to choose between Finetuning, RAG, or Small Language Models (SLMs)? Many organizations overspend on massive LLMs when a more surgical approach would yield better results at a fraction of the cost.

I’ve summarized the key strategies for navigating these three critical AI levers:

🔹 1. RAG (Retrieval-Augmented Generation): The "Source of Truth"
Best for information that changes frequently. At query time, RAG retrieves up-to-date content from external sources and passes it to the model as context. Answers can then be traced back to citable sources, which can substantially reduce hallucinations.

🔹 2. Finetuning: The "Specialist"
Best for mastering a specific tone, strict formatting, or repetitive procedures. Finetuning adapts a model to follow a unique output structure by further training it on a task-specific dataset.

🔹 3. SLM (Small Language Models): The "Budget Hero"
Best for high-volume tasks and deployment on edge devices such as mobile phones. SLMs change the economics of AI by offering faster inference and lower serving and maintenance costs than "giant" models.

💡 The Pro Move: SLM + RAG
For high-volume internal pilots, combining an SLM with a RAG pipeline provides contextual accuracy at minimal inference cost.

Which approach is right for your project?
• Choose RAG if your documents change often and you need a "source of truth".
• Choose Finetuning if you need highly structured, stable, and disciplined outputs.
• Choose SLM if latency, cost, and local deployment are your primary constraints.

--------------------------------------------------------------------------------

Credits to NotebookLM for the heavy lifting in synthesizing these insights.

#AI #MachineLearning #LLM #SLM #RAG #GenerativeAI #Finetuning #TechStrategy #DataScience
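To make the RAG "source of truth" idea concrete, here is a minimal sketch of the retrieval step, assuming an in-memory document store and plain bag-of-words cosine similarity. A production pipeline would typically use learned embeddings and a vector database instead. The document IDs, texts, prompt wording, and the helper names `retrieve` and `build_prompt` are all hypothetical. The call to the language model (an SLM or otherwise) is deliberately omitted. The `[doc_id]` tags carried into the prompt are what let the final answer cite its sources.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Return the k (doc_id, score) pairs most similar to the query, best first."""
    q = Counter(tokenize(query))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble a grounded prompt whose context lines carry [doc_id] citation tags."""
    hits = retrieve(query, docs, k)
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the context below and cite sources by their [id].\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\n"
    )


if __name__ == "__main__":
    # Hypothetical internal documents standing in for a real knowledge base.
    sample_docs = {
        "policy-2024": "Remote employees may expense one monitor per year.",
        "travel-faq": "Book flights through the internal travel portal.",
        "it-setup": "New laptops are imaged by the IT team within two days.",
    }
    # The resulting prompt would then be sent to the model of your choice.
    print(build_prompt("How many monitors can remote employees expense?", sample_docs, k=1))
```

Because the knowledge lives in `docs` rather than in the model weights, updating an answer only means editing or adding a document. This is why RAG suits frequently changing information, and why pairing it with a small model can keep inference cheap.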