Compute Optimal Scaling of Skills: Knowledge vs Reasoning | Nicholas Roberts скачать в хорошем качестве

Повторяем попытку...

Скачать видео с ютуб по ссылке или смотреть без блокировок на сайте: Compute Optimal Scaling of Skills: Knowledge vs Reasoning | Nicholas Roberts в качестве 4k

У нас вы можете посмотреть бесплатно Compute Optimal Scaling of Skills: Knowledge vs Reasoning | Nicholas Roberts или скачать в максимальном доступном качестве, видео которое было загружено на ютуб. Для загрузки выберите вариант из формы ниже:

-

Информация по загрузке:

Скачать mp3 с ютуба отдельным файлом. Бесплатный рингтон Compute Optimal Scaling of Skills: Knowledge vs Reasoning | Nicholas Roberts в формате MP3:

Если кнопки скачивания не

загрузились

НАЖМИТЕ ЗДЕСЬ или обновите страницу

Если возникают проблемы со скачиванием видео, пожалуйста напишите в поддержку по адресу внизу

страницы.

Спасибо за использование сервиса ClipSaver.ru

Compute Optimal Scaling of Skills: Knowledge vs Reasoning | Nicholas Roberts

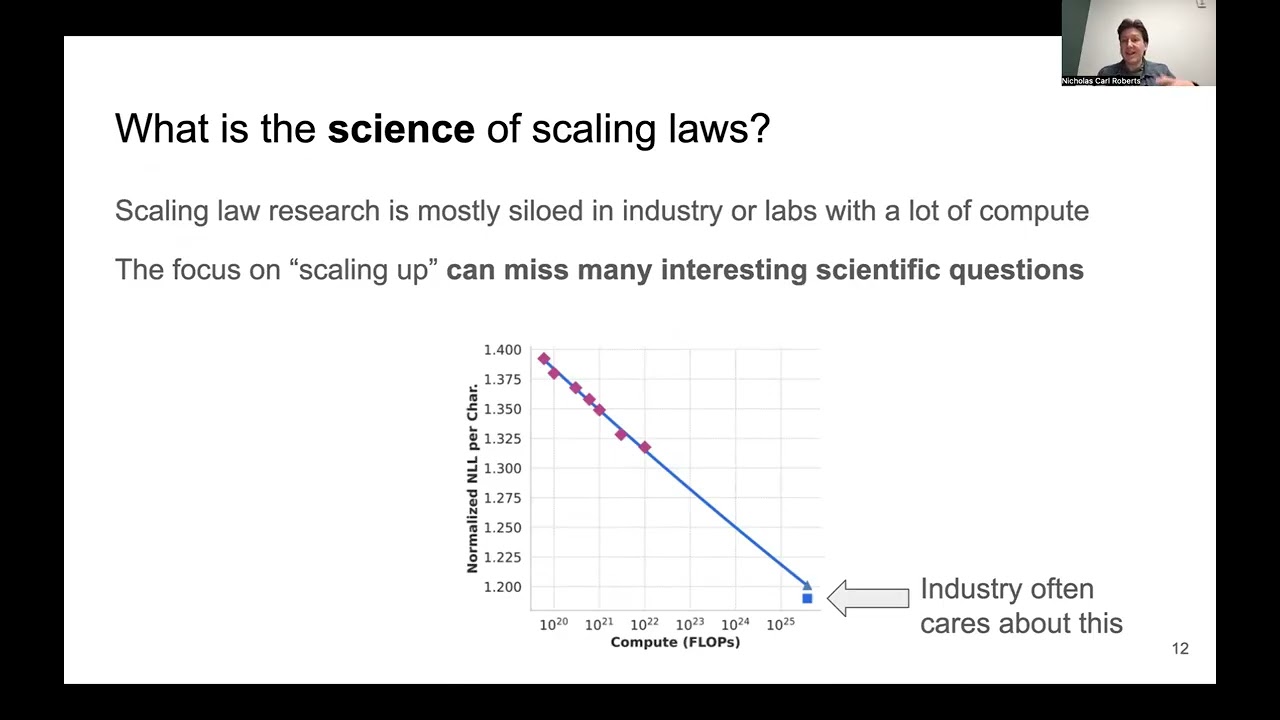

Speaker: Nicholas Roberts (University of Wisconsin–Madison) Abstract: Scaling laws are a critical component of the LLM development pipeline, most famously as a way to forecast training decisions such as 'compute-optimally' trading-off parameter count and dataset size, alongside a more recent growing list of other crucial decisions. In this work, we ask whether compute-optimal scaling behaviour can be skill-dependent. In particular, we examine knowledge and reasoning-based skills such as knowledge-based QA and code generation, and we answer this question in the affirmative: scaling laws are skill-dependent. Next, to understand whether skill-dependent scaling is an artefact of the pretraining datamix, we conduct an extensive ablation of different datamixes and find that, also when correcting for datamix differences, knowledge and code exhibit fundamental differences in scaling behaviour. We conclude with an analysis of how our findings relate to standard compute-optimal scaling using a validation set, and find that a misspecified validation set can impact compute-optimal parameter count by nearly 50%, depending on its skill composition. Bio: Nicholas Roberts is a Ph.D. candidate in Computer Science at University of Wisconsin–Madison, advised by Frederic Sala in the Sprocket Lab, where he works on the science of foundation model scaling, data-efficiency, and adaptation to high-impact scientific domains---all with the ultimate goal of developing powerful scientific research agents. He has completed research internships at Meta’s Llama team (working on scaling laws with Dieuwke Hupkes), Together AI (hybrid language models with Tri Dao), and Microsoft Research (Physics of AGI group with Sébastien Bubeck). He has received an honorable mention for the Jane Street Graduate Research Fellowship (2025) and was named an MLCommons Rising Star (2023). His academic path began at Fresno City College before earning his B.S. at UC San Diego where he worked with Sanjoy Dasgupta and Gary Cottrell and M.S. at Carnegie Mellon University with Ameet Talwalkar and Zack Lipton.