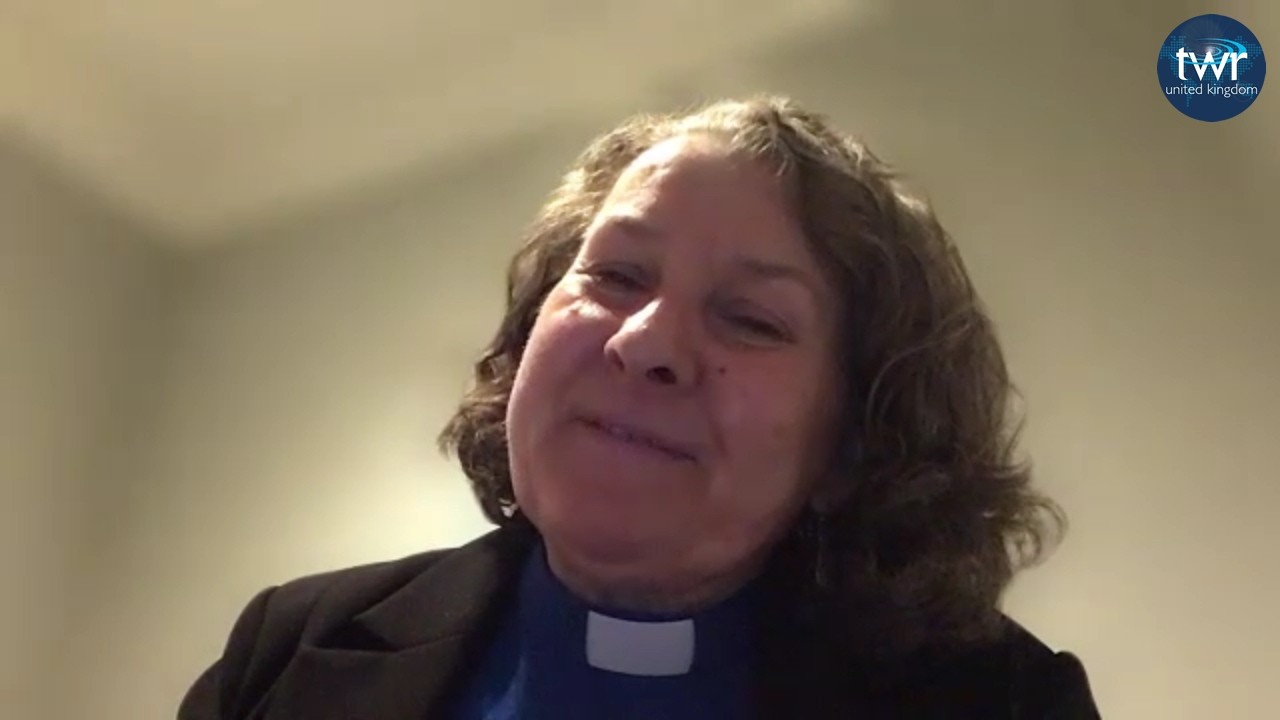

Algorithm arms race: Tim Cairns on Meta and TikTok’s outrage-fuelled engagement

A BBC investigation has uncovered claims that major social media platforms knowingly relaxed safety measures, allowing more harmful material to circulate in users’ feeds. More than a dozen whistleblowers and former insiders told the BBC that leading tech companies repeatedly prioritised engagement and growth over user protection, even when internal research showed their algorithms rewarded outrage.

One former Meta engineer said senior leaders pushed teams to permit more “borderline” content, including misogynistic posts and conspiracy theories, in an effort to compete with TikTok. He claimed staff were told the shift was necessary because the company’s share price had fallen.

At TikTok, an employee granted the BBC rare access to internal complaint dashboards and other evidence. They alleged that staff were instructed to fast-track cases involving politicians while reports of harmful content featuring children waited in the queue. According to the whistleblower, decisions were shaped by a desire to keep influential political figures onside and avoid threats of regulation, rather than by concerns for user safety.