nn leakyrelu pytorch

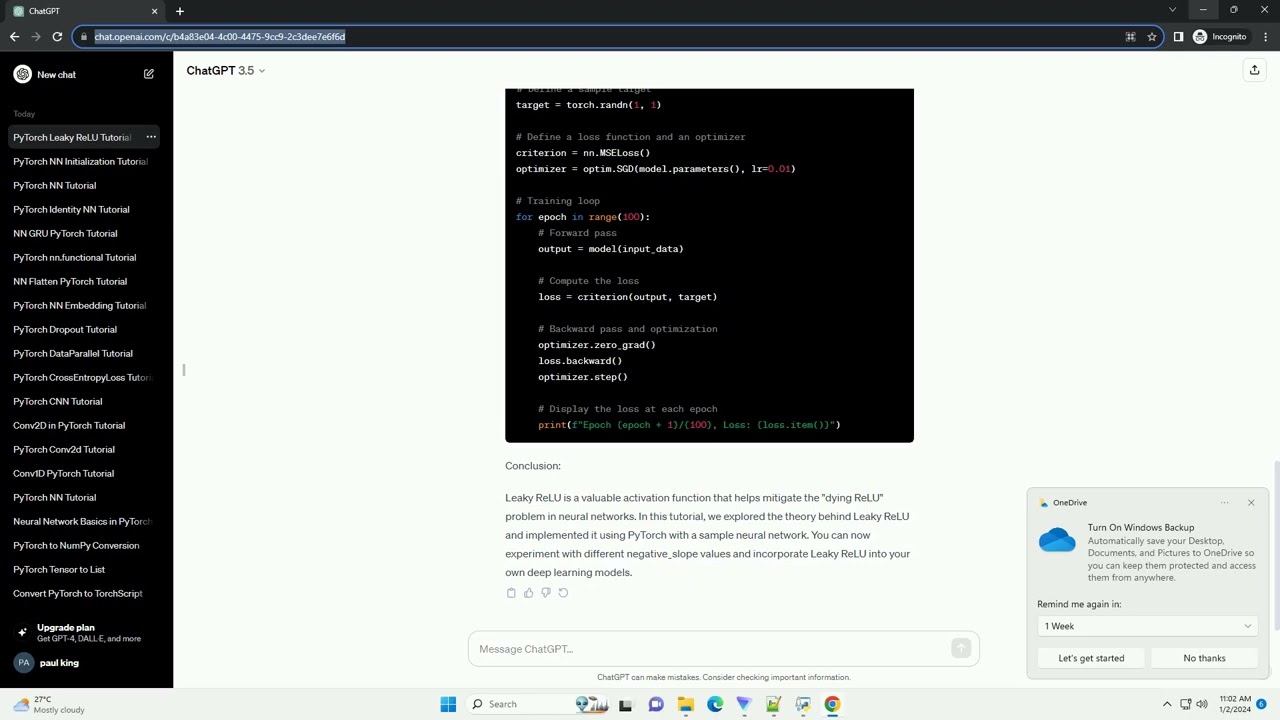

Download this code from https://codegive.com

Title: Understanding and Implementing Leaky ReLU in PyTorch: A Comprehensive Tutorial

Introduction: The Rectified Linear Unit (ReLU) is a popular activation function used in neural networks to introduce non-linearity. However, standard ReLU suffers from a drawback called "dying ReLU": it outputs zero for every negative input, so its gradient there is also zero, and a neuron whose inputs stay negative can become inactive and stop learning. Leaky ReLU addresses this issue by allowing a small, non-zero gradient when the input is negative. In this tutorial, we'll explore Leaky ReLU and implement it using PyTorch, a popular deep learning library.

Prerequisites: Make sure you have PyTorch installed (for example, with pip install torch).

Understanding Leaky ReLU: Leaky ReLU is defined as:

$$
\text{LeakyReLU}(x) =
\begin{cases}
x, & \text{if } x > 0 \\
\text{negative\_slope} \cdot x, & \text{otherwise}
\end{cases}
$$

Here, negative_slope is a small positive value that determines how much the function "leaks" for negative inputs. Typically, a value like 0.01 is used, which is also the default in nn.LeakyReLU.

Implementation in PyTorch: The example for this tutorial creates a simple neural network that uses Leaky ReLU activation through the nn.LeakyReLU module. The negative_slope parameter is set to 0.01, but you can adjust it based on your requirements.

Training the Model: To train the model, you can use standard PyTorch procedures with a suitable loss function and optimizer.

Conclusion: Leaky ReLU is a valuable activation function that helps mitigate the "dying ReLU" problem in neural networks. In this tutorial, we explored the theory behind Leaky ReLU and implemented it using PyTorch with a sample neural network. You can now experiment with different negative_slope values and incorporate Leaky ReLU into your own deep learning models.
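The code for the implementation and training steps described above is not included on this page, so here is a minimal sketch of what they might look like, assuming PyTorch is installed (for example, with `pip install torch`). The `SimpleNet` class, its layer sizes, the synthetic random data, the MSE loss, the SGD optimizer, and the learning rate are all illustrative assumptions and are not taken from the original tutorial; only `nn.LeakyReLU` and `negative_slope=0.01` come from the text.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # make this illustrative run repeatable

NEGATIVE_SLOPE = 0.01  # the value mentioned in the tutorial text


def manual_leaky_relu(x: torch.Tensor, negative_slope: float = NEGATIVE_SLOPE) -> torch.Tensor:
    """Piecewise definition: x where x > 0, negative_slope * x elsewhere."""
    return torch.where(x > 0, x, negative_slope * x)


class SimpleNet(nn.Module):
    """Hypothetical two-layer network; the layer sizes are arbitrary placeholders."""

    def __init__(self, in_features: int = 10, hidden: int = 16, out_features: int = 1):
        super().__init__()
        self.fc1 = nn.Linear(in_features, hidden)
        self.act = nn.LeakyReLU(negative_slope=NEGATIVE_SLOPE)
        self.fc2 = nn.Linear(hidden, out_features)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.fc2(self.act(self.fc1(x)))


# 1. Check that nn.LeakyReLU agrees with the piecewise formula.
sample = torch.tensor([-2.0, -0.5, 0.0, 1.5])
module_out = nn.LeakyReLU(negative_slope=NEGATIVE_SLOPE)(sample)
formula_matches = torch.allclose(module_out, manual_leaky_relu(sample))

# 2. Run a few training steps on synthetic data (assumed: MSE loss, plain SGD).
model = SimpleNet()
criterion = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

inputs = torch.randn(64, 10)   # random stand-in for real features
targets = torch.randn(64, 1)   # random stand-in for real labels

losses = []
for _ in range(20):
    optimizer.zero_grad()                      # clear gradients from the previous step
    loss = criterion(model(inputs), targets)   # forward pass and loss
    loss.backward()                            # backpropagate
    optimizer.step()                           # update the weights
    losses.append(loss.item())

print("module matches formula:", formula_matches)
print("first loss:", losses[0], "last loss:", losses[-1])
```

The same activation is also available in functional form as `torch.nn.functional.leaky_relu`, which can be convenient when you prefer not to register the activation as a submodule. To experiment with the "leak", change `NEGATIVE_SLOPE` and compare the printed losses.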