Hugging Face Transformers Pipeline Tokenizer Models Explained Step by Step

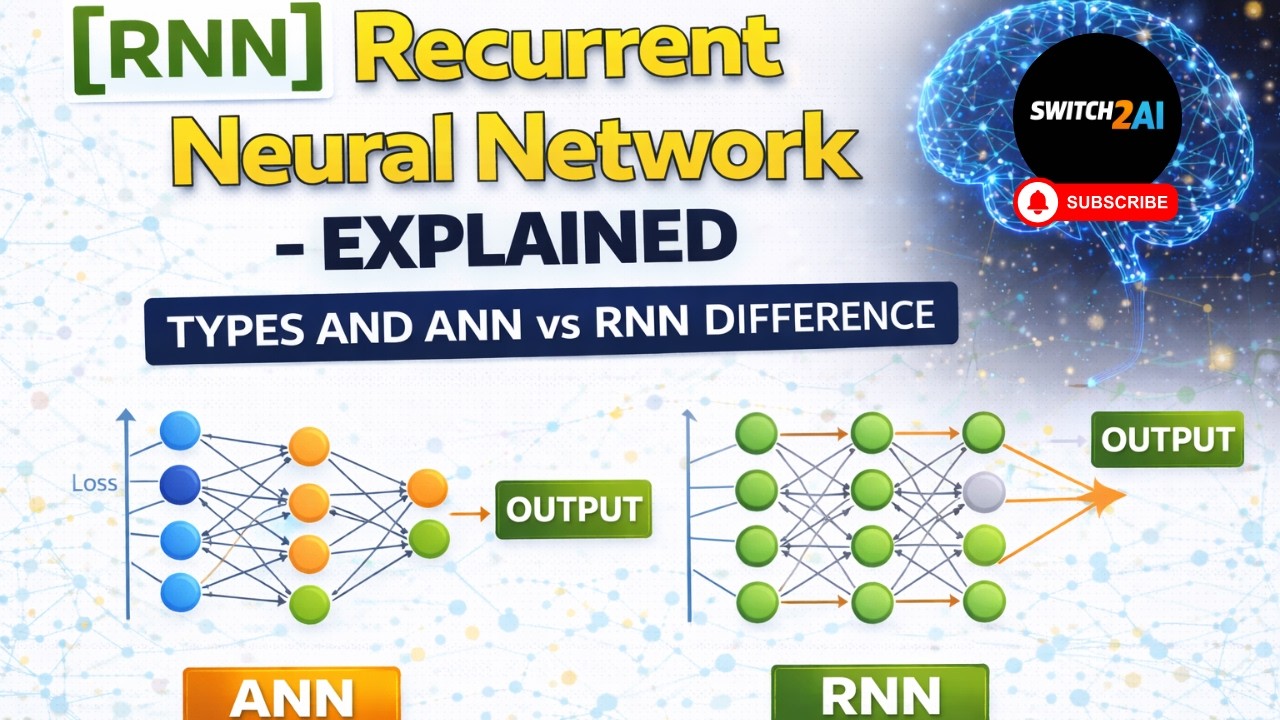

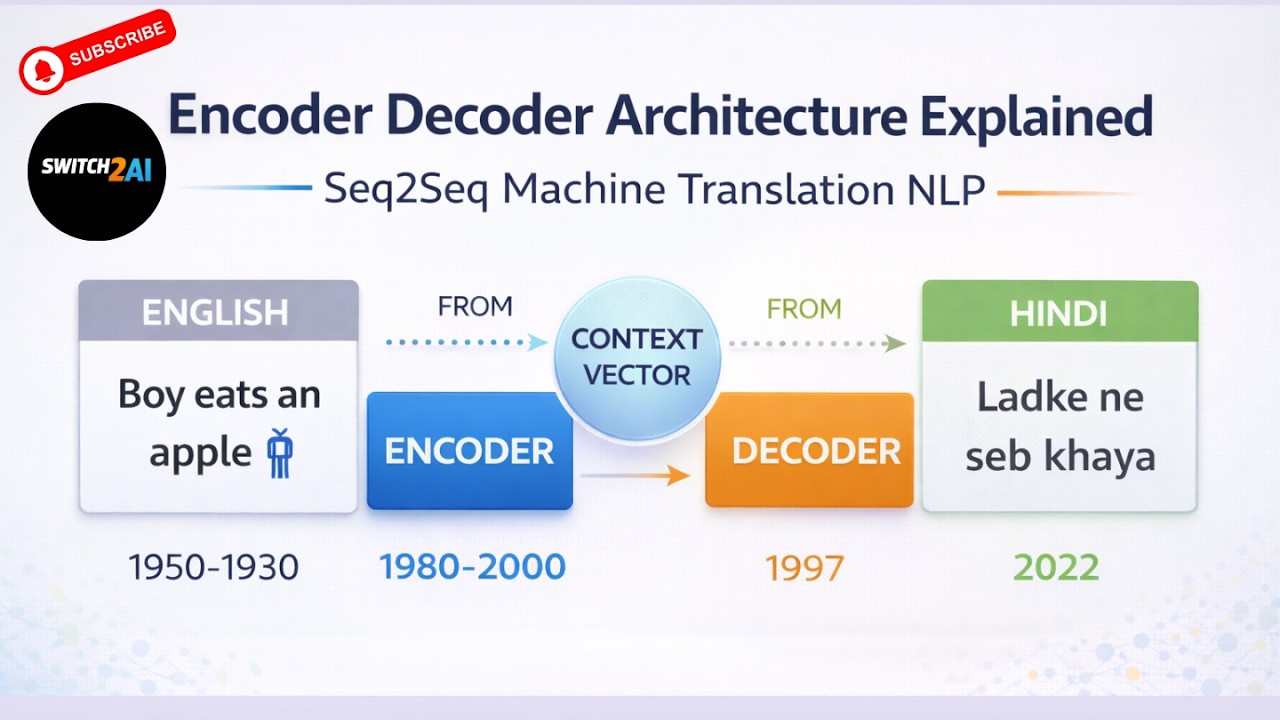

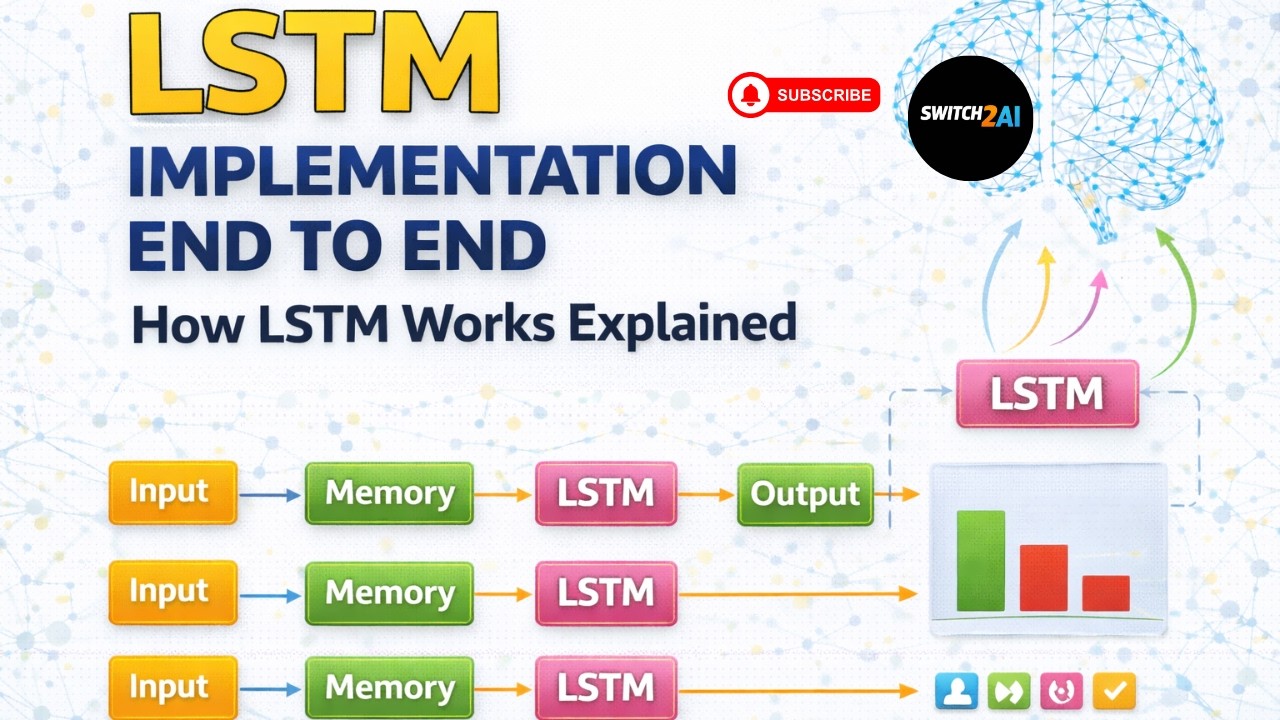

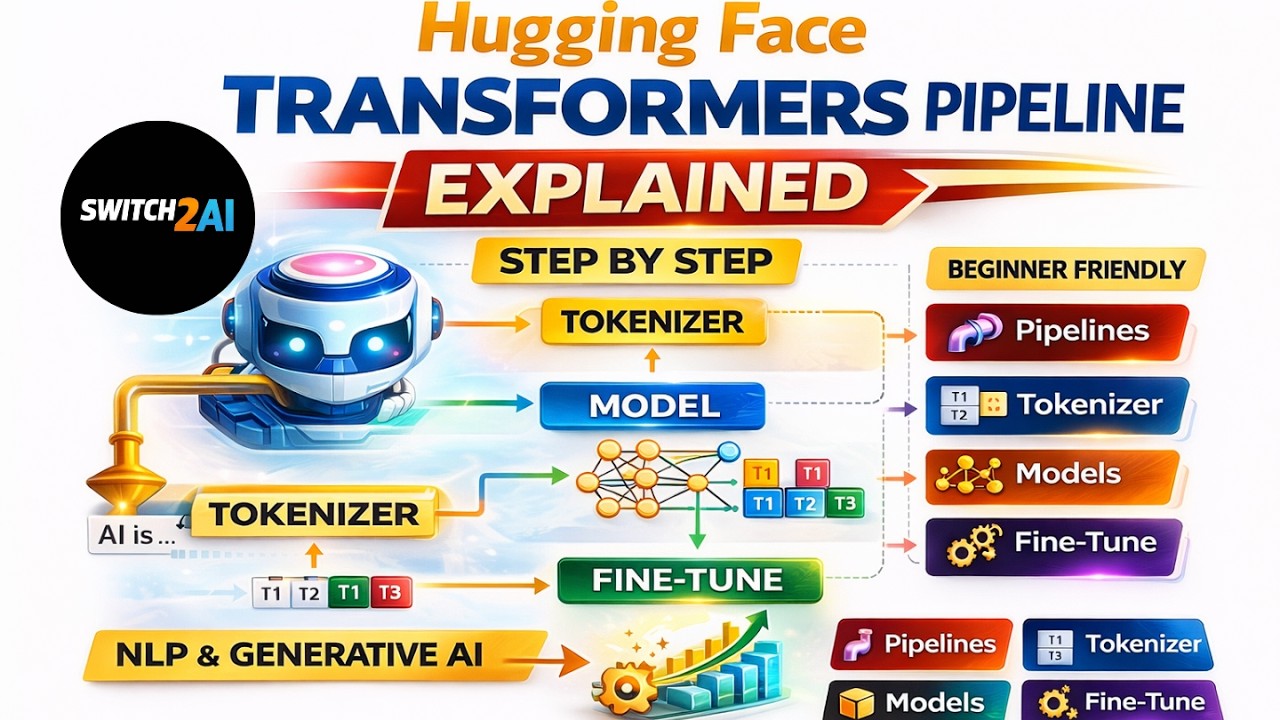

In this video, we explore the Hugging Face ecosystem and learn how to use Transformers, pipelines, tokenizers, and pre-trained models step by step. These are the building blocks for real-world NLP and generative AI applications.

GitHub repo: https://github.com/switch2ai
All the code, scripts, and documents can be downloaded from this repository.

Hugging Face website: https://huggingface.co

Hugging Face Ecosystem
Models: pre-trained models for NLP, computer vision, and audio tasks
Datasets: a large collection of ready-to-use datasets
Spaces: deploy and share ML apps
Inference API: run models without local setup
Libraries: Transformers, Datasets, Gradio, Accelerate, Bitsandbytes, PEFT, TRL

Transformers Library
Install and import
pip install transformers
This library provides easy access to thousands of pre-trained models and utilities.

Pipeline
A pipeline is a high-level API that hides the intermediate steps: preprocessing, tokenization, padding, model inference, and post-processing.
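To make those hidden steps concrete, here is a toy sketch in plain Python that chains the same stages by hand. It is only an illustration: the vocabulary, the per-token weights, and every function name are hypothetical stand-ins, and a real Transformers pipeline runs a trained neural model rather than a word-weight lookup.

```python
# Toy stand-in for the stages a pipeline hides. NOT the Transformers
# implementation: VOCAB, WEIGHTS, and all helper names are hypothetical.

VOCAB = {"[PAD]": 0, "[UNK]": 1, "this": 2, "is": 3, "good": 4, "bad": 5, "product": 6}
ID_TO_TOKEN = {token_id: token for token, token_id in VOCAB.items()}
WEIGHTS = {"good": 1.0, "bad": -1.0}  # hypothetical "model": one weight per token


def preprocess(text):
    """Normalize raw text (here: lowercase and strip whitespace)."""
    return text.lower().strip()


def tokenize(text):
    """Split on whitespace and map each token to a vocabulary id."""
    return [VOCAB.get(token, VOCAB["[UNK]"]) for token in text.split()]


def pad(batch):
    """Right-pad every sequence to the length of the longest one."""
    width = max(len(ids) for ids in batch)
    return [ids + [VOCAB["[PAD]"]] * (width - len(ids)) for ids in batch]


def model(ids):
    """Score a sequence by summing per-token weights (padding scores 0)."""
    return sum(WEIGHTS.get(ID_TO_TOKEN[token_id], 0.0) for token_id in ids)


def postprocess(score):
    """Turn the raw score into a label."""
    return "POSITIVE" if score >= 0 else "NEGATIVE"


def toy_pipeline(texts):
    """Run all stages in order on a batch of strings."""
    batch = pad([tokenize(preprocess(text)) for text in texts])
    return [postprocess(model(ids)) for ids in batch]


print(toy_pipeline(["This is good product", "This is bad product"]))
# prints ['POSITIVE', 'NEGATIVE']
```

With the library itself, a single call to a pipeline object replaces all of these stages.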
Flow: Text → Preprocessing → Tokenization → Padding → Model → Output Label

Example: Sentiment Analysis
You can use a pre-trained model directly through the sentiment analysis pipeline. It classifies text such as:
This is good product
This is bad product
Batch predictions are also supported: pass multiple sentences at once and get a prediction for each.

Text Generation and Summarization
You can generate or summarize long text using models like T5, for example condensing long paragraphs into shorter, meaningful summaries.

Zero Shot Classification
You can classify text into categories without training.
Example input: my credit card is not working
Labels: loan, credit card, services, others
The model picks the most relevant label.

AutoTokenizer
A tokenizer converts text into the format expected by the model.
Input text is converted into input ids: each token is mapped to an id from the tokenizer's vocabulary.
It also generates an attention mask, which tells the model which tokens to attend to and which are only padding.
You can also convert ids back to tokens.
A tokenizer can be saved locally and reused later. If it is not available locally, it is downloaded from Hugging Face.

Important Parameters
padding: makes all sequences in a batch the same length
truncation: limits sequence length
return_tensors: pt for PyTorch, np for NumPy

Model Loading
You can load pre-trained models using AutoModel. Models are downloaded from Hugging Face if they are not present locally. These models are already trained on large datasets and can be used directly for tasks like classification, generation, and embedding.

Why Hugging Face is Important
Easy access to thousands of models
Reduces development time
Supports production-ready pipelines
Strong open source community

Mock Syllabus Covered
Encoder
Decoder
Attention Mechanism
Transformer Architecture
Advanced Attention like MQA and GQA

By the end of this video, you will be able to use Hugging Face pipelines, tokenizers, and models to build real-world AI applications.
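To see what the padding and truncation parameters and the attention mask described above do to a batch, here is a minimal plain-Python sketch. The helper `encode_batch`, the pad id of 0, and the example ids are assumptions made for illustration; a real tokenizer also handles special tokens during truncation, which this toy ignores.

```python
# Toy illustration of padding, truncation, and attention masks.
# Hypothetical helper, not the Transformers tokenizer implementation.

PAD_ID = 0  # assumed padding id for this sketch


def encode_batch(batch_ids, max_length):
    """Truncate each id sequence to max_length, then right-pad to a common width."""
    truncated = [ids[:max_length] for ids in batch_ids]
    width = max(len(ids) for ids in truncated)
    input_ids, attention_mask = [], []
    for ids in truncated:
        n_pad = width - len(ids)
        input_ids.append(ids + [PAD_ID] * n_pad)
        # 1 marks a real token, 0 marks padding the model should ignore
        attention_mask.append([1] * len(ids) + [0] * n_pad)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


encoded = encode_batch([[5, 6, 7, 8, 9], [5, 6, 7]], max_length=4)
print(encoded["input_ids"])       # prints [[5, 6, 7, 8], [5, 6, 7, 0]]
print(encoded["attention_mask"])  # prints [[1, 1, 1, 1], [1, 1, 1, 0]]
```

The first sequence is cut from five ids to four, the second is padded from three to four, and the mask records which positions hold real tokens.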
Channel Name: Switch 2 AI

Hashtags: #HuggingFace #Transformers #Pipeline #AutoTokenizer #NLP #DeepLearning #MachineLearning #LLM #AI #Switch2AI

SEO Tags: huggingface transformers tutorial, huggingface pipeline explained, autotokenizer explained, huggingface models tutorial, zero shot classification huggingface, text generation huggingface, bert tokenizer explained, transformers library tutorial, nlp pipeline explained, deep learning huggingface, llm huggingface tutorial, how to use huggingface transformers, huggingface beginners tutorial, ai model pipeline tutorial, Switch 2 AI, huggingface ecosystem explained