
2. How LLMs Actually Work | Tokenization, Prediction & Post Processing Explained

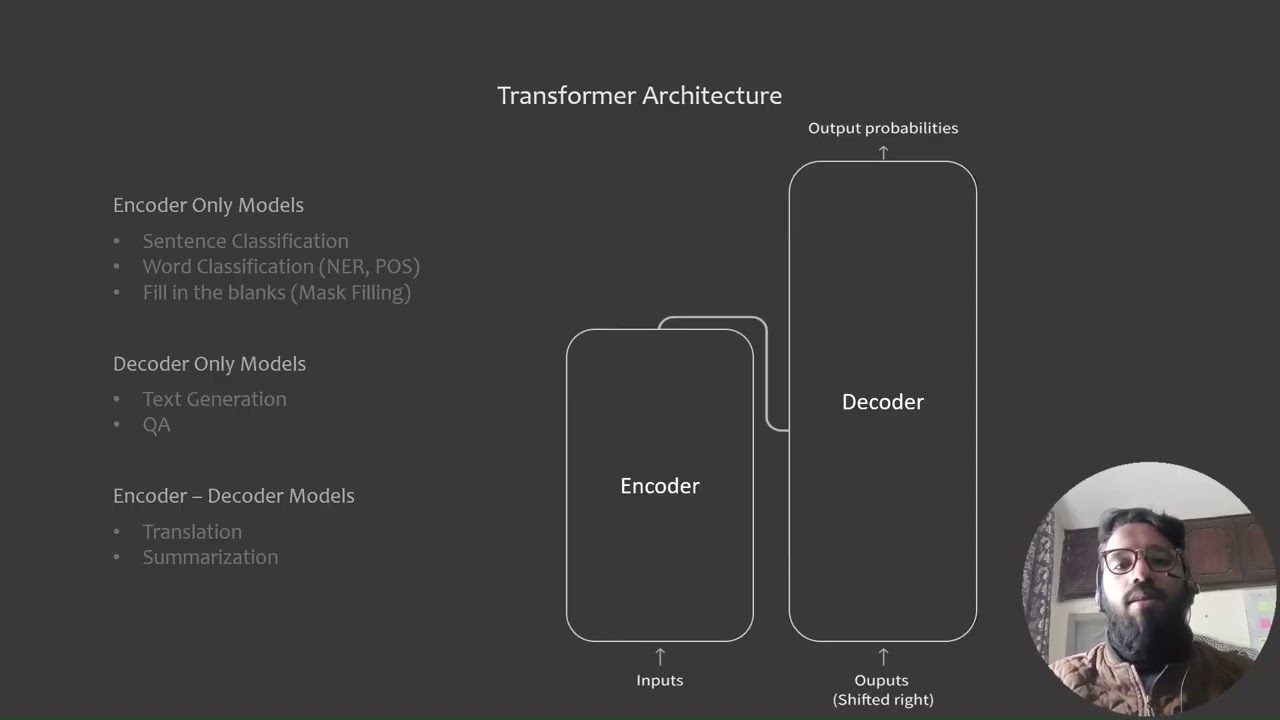

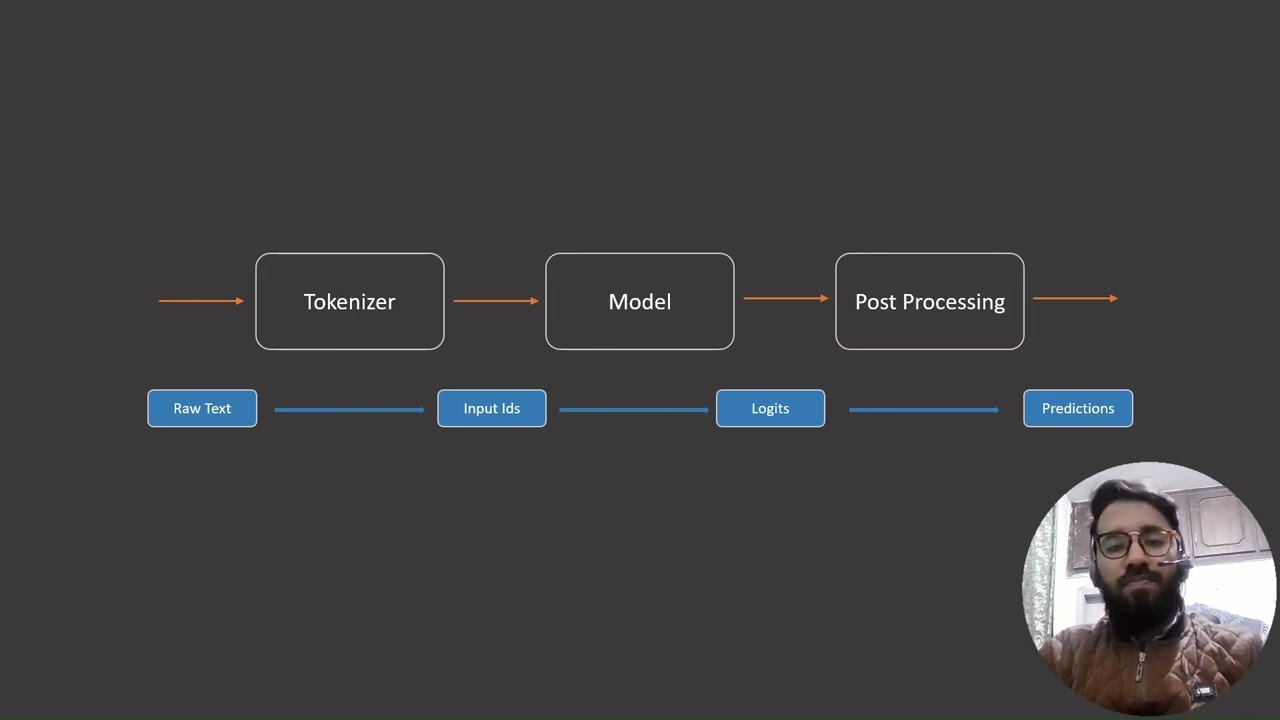

Ever wondered what actually happens when you type something into ChatGPT and it responds? In this video we go through the full working pipeline of an LLM, step by step.

Here's what we cover:

🔤 Tokenization: how text gets broken down before the model even sees it, with a hands-on demo
🧠 Prediction: how the model decides what comes next, with a hands-on demo
✂️ Post Processing: how raw model output gets cleaned and shaped into what you actually read, with a hands-on demo

Every stage has its own Colab walkthrough so you can follow along and see it yourself.

#llms #tokenization #ai #study #course #howto #explained #aiagents

Chapters:
00:00 - 1:13 - Intro
1:14 - 1:54 - Pre Processing: Tokenization
1:55 - 4:03 - Tokenization Code demonstration
4:04 - 5:53 - Inference: Making predictions
5:54 - 7:58 - Inference: Code demonstration
7:59 - 9:04 - Post Processing: Making sense of predictions
9:05 - 10:51 - Post Processing: Code demonstration

This is Part 2 of the LLMs series. Make sure you've caught Part 1 on Transformer Architecture first 👆

🔔 Subscribe to keep up with the rest of the series
👍 Like if this made something click for you
📩 Want to go deeper or need guidance? Open to mentorship & consultations. linkedin.com/in/muhammad-abdullah-5a8493187
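For readers without the Colab notebooks at hand, the three stages can be sketched in a few lines of plain Python. This is not the code from the video: the vocabulary, the whitespace tokenizer, and the `toy_logits` function are all hypothetical stand-ins. A real LLM uses a learned subword tokenizer and a neural network to produce the scores. The sketch only shows how the stages hand data to each other: text to token IDs, IDs to next-token probabilities, and IDs back to readable text.

```python
import math

# Hypothetical toy vocabulary. Real models learn subword vocabularies
# with tens of thousands of entries.
VOCAB = ["<eos>", "the", "cat", "sat", "on", "mat", "."]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}


def tokenize(text):
    """Pre-processing: split on whitespace and map each word to an integer ID."""
    return [TOKEN_TO_ID[word] for word in text.lower().split()]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_logits(token_ids):
    """Stand-in for the model (an assumption, not a real network):
    it simply scores the vocabulary entry after the last token highest."""
    favored = (token_ids[-1] + 1) % len(VOCAB)
    return [5.0 if i == favored else 0.0 for i in range(len(VOCAB))]


def generate(prompt, max_new_tokens=10):
    """Inference: repeatedly predict the next token and append it (greedy decoding)."""
    ids = tokenize(prompt)
    for _ in range(max_new_tokens):
        probs = softmax(toy_logits(ids))
        next_id = max(range(len(probs)), key=probs.__getitem__)
        if VOCAB[next_id] == "<eos>":  # stop at the end-of-sequence token
            break
        ids.append(next_id)
    return ids


def detokenize(ids):
    """Post-processing: map IDs back to text and tidy spacing before punctuation."""
    text = " ".join(VOCAB[i] for i in ids)
    return text.replace(" .", ".")


if __name__ == "__main__":
    output_ids = generate("the cat")
    print(output_ids)
    print(detokenize(output_ids))
```

Greedy decoding (always taking the most probable token) is used here only because it is deterministic. Sampling from the probabilities instead is a common alternative.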
