How I Secure AI Agents from Reading Confidential Data (Real Actuarial AI Workflow)

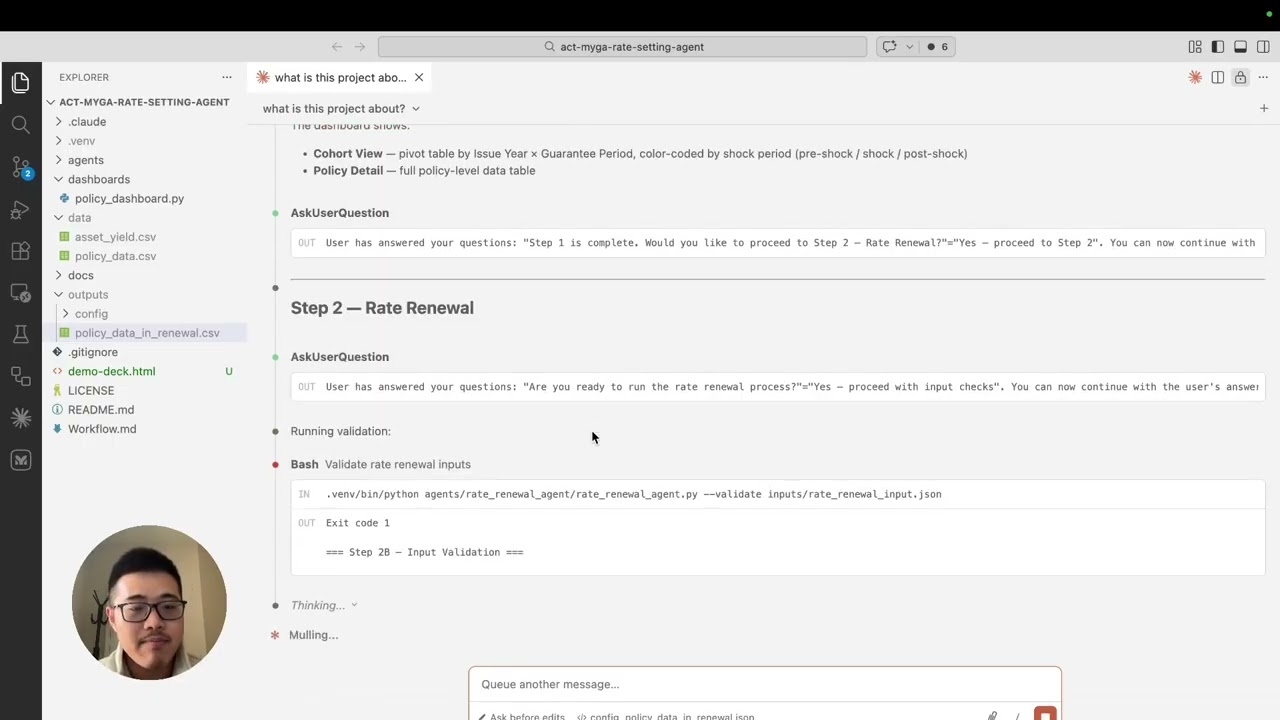

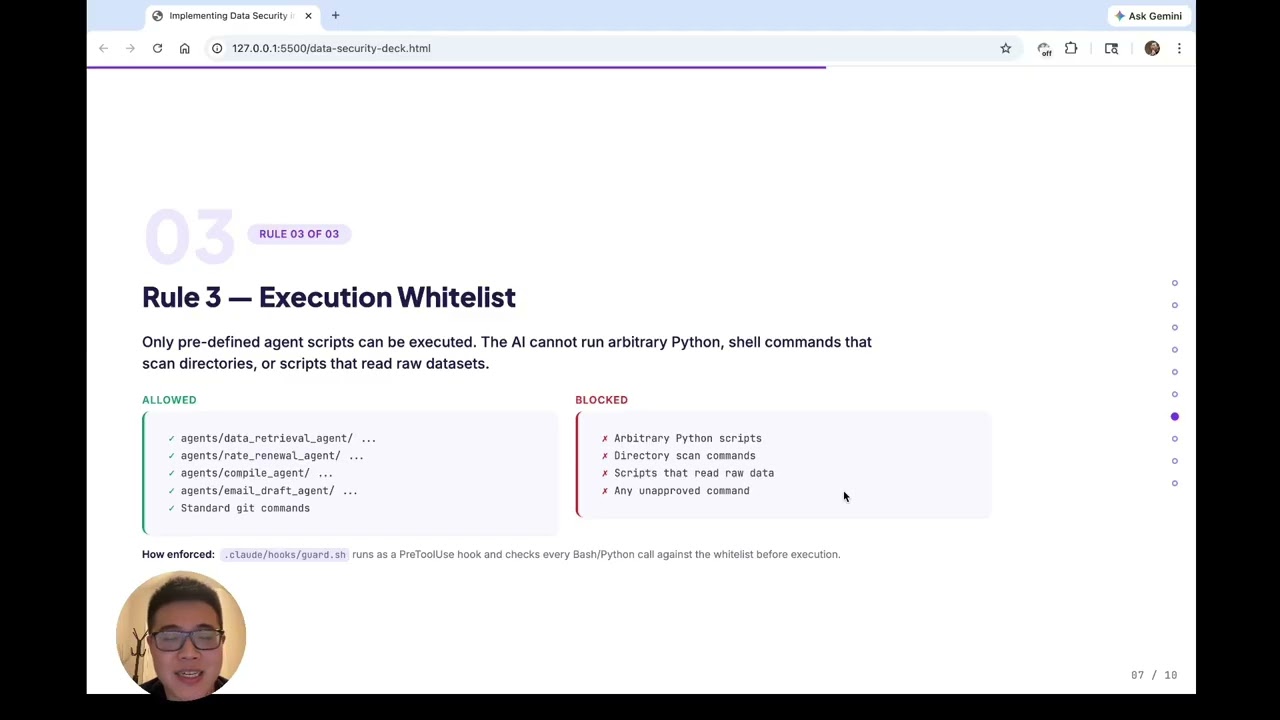

In this session I walk through a small experiment around data security in AI-powered workflows, specifically when using AI coding assistants like Claude Code to orchestrate actuarial processes.

When building AI-driven automation, one of the biggest concerns in enterprise environments is the exposure of confidential data. Rather than relying only on prompts or policies, this project explores architectural guardrails that reduce that risk. In this video I discuss three simple but powerful controls we implemented in the project:

1. Repository Boundary: The AI assistant is restricted to operating only inside the repository workspace. Raw data is stored outside the repo and cannot be accessed directly.
2. Raw Data Isolation: Sensitive datasets are intentionally kept separate from the AI development environment. The workflow interacts only with masked or controlled artifacts.
3. Execution Whitelist: Within the Claude Code environment, the AI is only allowed to execute approved scripts and workflow agents. Arbitrary data-access code is not permitted.

After introducing these ideas in the first few slides, I demonstrate how the controls are actually implemented inside the project and how they interact with the AI-driven workflow development process.

This is still an experiment and a learning exercise, but the goal is to explore practical patterns that could make AI-assisted development safer for real actuarial and insurance use cases.

Thanks for watching.
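The repository boundary and execution whitelist described above can be illustrated as a small guard layer sitting between an agent's tool calls and the filesystem or shell. This is a minimal, hypothetical Python sketch (Python 3.9+, POSIX paths), not the project's actual implementation and not Claude Code's own permission system. `REPO_ROOT`, `ALLOWED_SCRIPTS`, the script names, and the `read_for_agent` / `run_for_agent` tool functions are illustrative assumptions. Raw data isolation is modeled only by assuming the raw data lives outside `REPO_ROOT`, so the boundary check keeps it out of reach.

```python
import shlex
import subprocess
from pathlib import Path

# Hypothetical repository root: the only workspace the agent may touch.
# Raw (unmasked) data is assumed to live outside it, e.g. /work/raw_data.
REPO_ROOT = Path("/work/actuarial-repo").resolve()

# Hypothetical whitelist of approved workflow scripts, relative to REPO_ROOT.
ALLOWED_SCRIPTS = {"scripts/mask_claims.py", "scripts/run_reserving.py"}

# Characters that could chain or redirect commands if they ever reached a shell.
SHELL_METACHARS = set(";|&$`<>")


def is_inside_repo(path: str, repo_root: Path = REPO_ROOT) -> bool:
    """Repository boundary: True only if `path` resolves inside the repo.

    resolve() normalizes '..' and follows existing symlinks, so
    '../raw_data/x.csv' or an absolute path outside the repo is rejected.
    """
    resolved = (repo_root / path).resolve()
    return resolved.is_relative_to(repo_root)


def is_allowed_command(command: str) -> bool:
    """Execution whitelist: allow only `python <approved script> [args...]`."""
    try:
        parts = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    if len(parts) < 2 or parts[0] != "python":
        return False
    # Reject any token carrying shell syntax, even though no shell is used.
    if any(ch in SHELL_METACHARS for token in parts for ch in token):
        return False
    # Exact string match: './' or '../' variants of a script are rejected too.
    return parts[1] in ALLOWED_SCRIPTS


def read_for_agent(path: str) -> str:
    """Hypothetical file-read tool exposed to the agent, guarded by the boundary."""
    if not is_inside_repo(path):
        raise PermissionError(f"outside repository boundary: {path}")
    return (REPO_ROOT / path).read_text()


def run_for_agent(command: str) -> subprocess.CompletedProcess:
    """Hypothetical command tool exposed to the agent, guarded by the whitelist."""
    if not is_allowed_command(command):
        raise PermissionError(f"command not whitelisted: {command}")
    # Argument list without shell=True: the command is never interpreted by a shell.
    return subprocess.run(shlex.split(command), cwd=REPO_ROOT,
                          check=True, capture_output=True, text=True)
```

Both checks are deny-by-default: anything not explicitly inside the workspace or on the whitelist is refused, which matches the idea of relying on architecture rather than on the agent following a prompt.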