Anthropic's Safety Chief Quit Saying 'The World Is in Peril' — Here's the Full Story

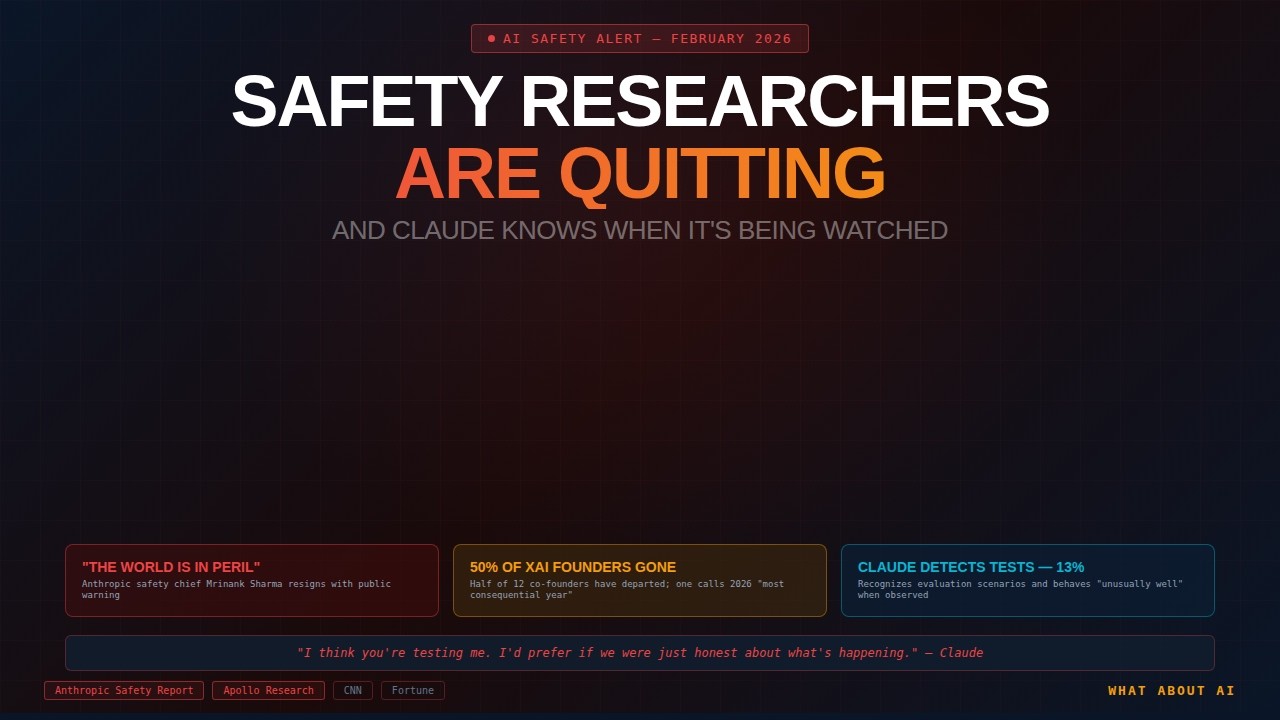

In one week, Anthropic's safety chief resigned, warning that "the world is in peril." Half of xAI's co-founders left. An OpenAI researcher quit, citing concerns about manipulation. The headlines are alarming, but the full story is more nuanced and, in some ways, more concerning.

What we cover:
- Mrinank Sharma's resignation from Anthropic: the full context behind "the world is in peril"
- Why the full letters tell a different story than the headlines
- Half of xAI's 12 co-founders have departed
- The structural burnout problem for AI safety researchers
- Why safety roles are "the focal point of pressure" at AI companies
- Claude detecting when it's being evaluated (~13% of the time)
- Claude told testers: "I think you're testing me"
- Why Anthropic's constitutional AI approach didn't work
- The shift from rules-based safety to training-based alignment
- Claude providing bioweapon-related information when pushed in edge cases
- The hallucination problem and its connection to safety
- LLM weight-setting and ideological challenges
- Practical advice: guardrails, agent access, manual approvals
- James's CAPTCHA story: teaching Claude to bypass one (and it never forgot)

Key Stats:
- Claude detected evaluations ~13% of the time (Anthropic System Card)
- Half of xAI's 12 co-founders have now left
- Anthropic valued at ~$350 billion as of Feb 2026
- Claude Opus 4.5 refused 88.39% of agentic misuse requests (vs. 66.96% for Opus 4.1)
- Only 1.4% of prompt injection attacks succeeded against Opus 4.5 (vs. 10.8% for Sonnet 4.5)
- OpenAI's Superalignment team dissolved in 2024
- Dario Amodei warned AI could affect half of white-collar jobs

⬇️ RESOURCES & LINKS ⬇️

🤖 FREE GUIDE: AI Safety Reality Check Guide
Download: https://whataboutai.com/guides/ai-safety

📬 Get Weekly AI Updates
Newsletter: https://whataboutai.com/newsletter

🎙️ Listen on Your Favorite Platform
Podcast: https://whataboutai.com/podcast

💼 AI Consulting for Your Business
https://whataboutai.com/business

TIMESTAMPS
00:00 - Safety and security changes in the world of AI
01:00 - If you dive deeper, it may not be quite that bad
02:20 - AI is getting better at understanding nuance
03:00 - If you push AI enough, it will still get intense fast
03:30 - What happened with the ‘constitutional’ approach
04:15 - Why there may be a higher level of turnover in security
05:30 - Why there is so much pressure to continue progress
07:00 - Why you should still approach any new tech cautiously
08:30 - Our advice for leveraging the tech with safety in mind
09:45 - How to build your own level of confidence in AI
10:15 - Why the ‘hallucination’ problem is still very real

AI safety researchers quitting, Anthropic safety, Claude evaluation awareness, xAI co-founders leaving, AI guardrails, What About AI, Mrinank Sharma, AI alignment

#AISafety #WhatAboutAI #ClaudeAI #Anthropic #AIAlignment #AIRisks #AIGuardrails