Lipschitz Regularization of Neural Networks - Intriguing Properties of Neural Networks

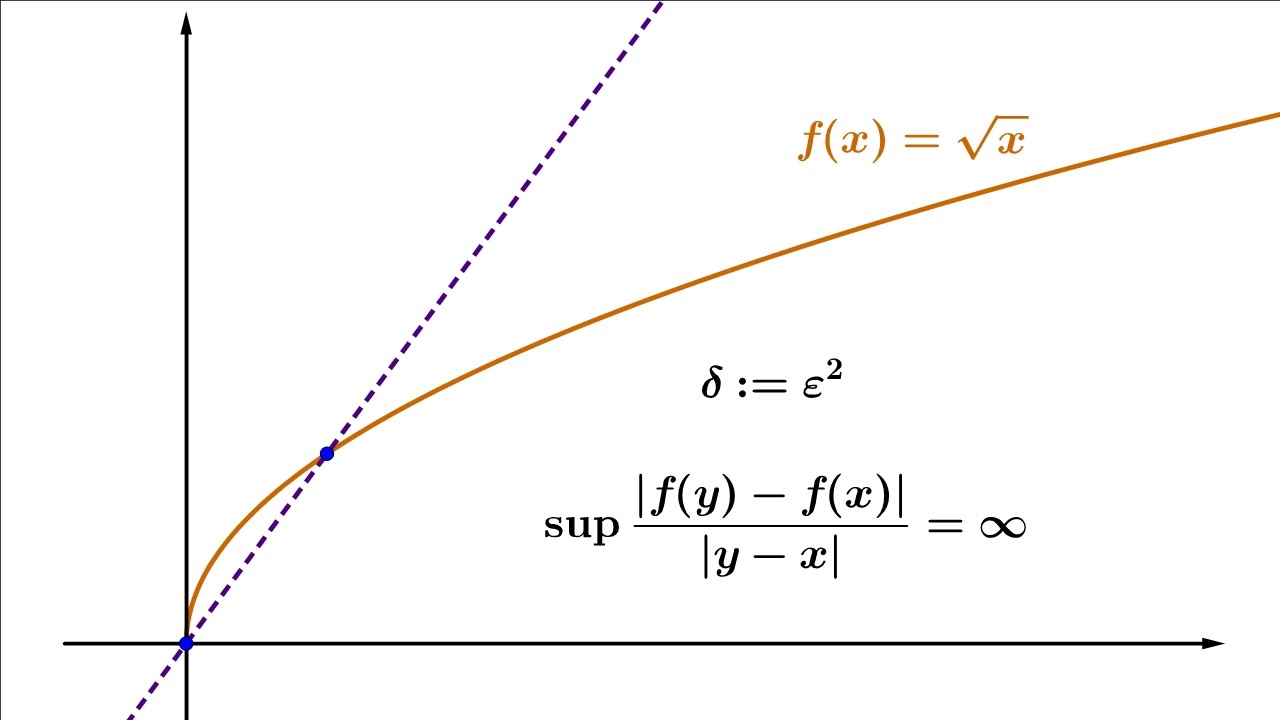

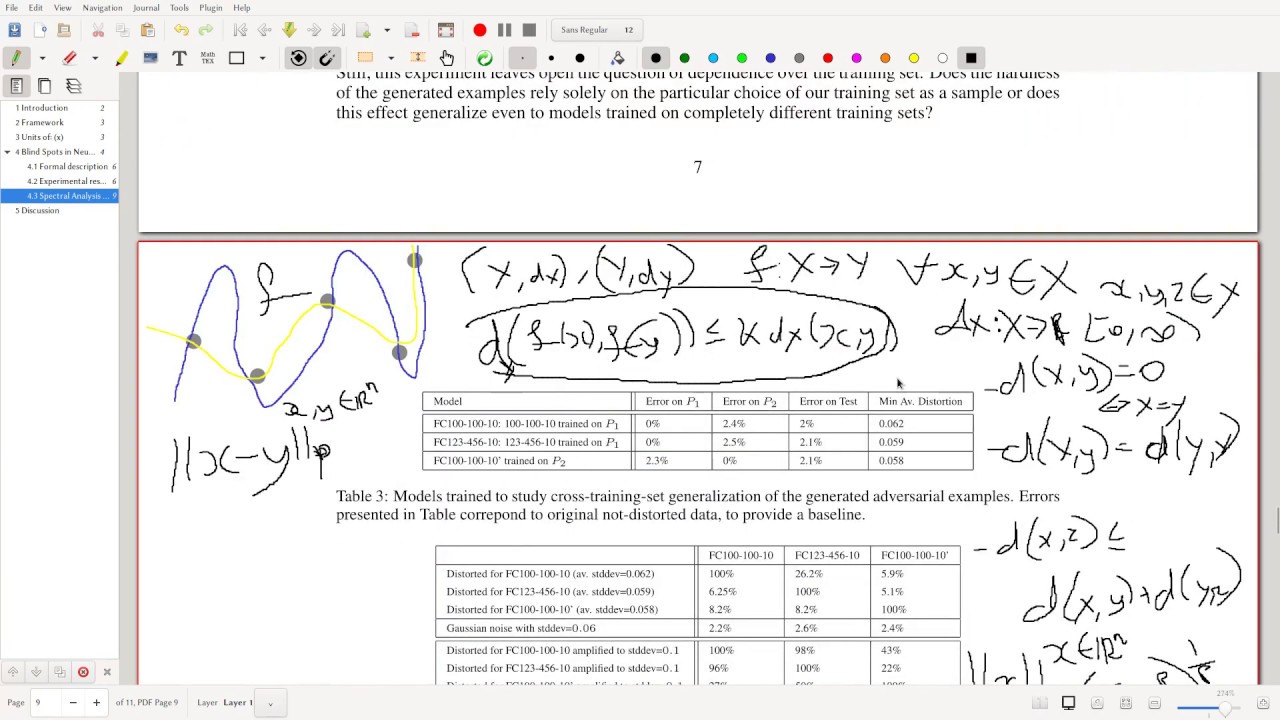

In this video, we continue our discussion of robustness to adversarial samples by looking at Lipschitz continuity as a measure of neural network stability.

Paper: https://arxiv.org/abs/1312.6199

Abstract: Deep neural networks are highly expressive models that have recently achieved state-of-the-art performance on speech and visual recognition tasks. While their expressiveness is the reason they succeed, it also causes them to learn uninterpretable solutions that could have counter-intuitive properties. In this paper we report two such properties.

First, we find that there is no distinction between individual high-level units and random linear combinations of high-level units, according to various methods of unit analysis. It suggests that it is the space, rather than the individual units, that contains the semantic information in the high layers of neural networks.

Second, we find that deep neural networks learn input-output mappings that are fairly discontinuous to a significant extent. Specifically, we find that we can cause the network to misclassify an image by applying a certain imperceptible perturbation, which is found by maximizing the network's prediction error. In addition, the specific nature of these perturbations is not a random artifact of learning: the same perturbation can cause a different network, that was trained on a different subset of the dataset, to misclassify the same input.
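Lipschitz continuity makes "stability" quantitative: a function f is L-Lipschitz if ‖f(x) − f(y)‖ ≤ L‖x − y‖ for all inputs x and y, so a small L caps how far any input perturbation can move the output. The following is a minimal sketch, assuming a plain fully connected ReLU network stored as hypothetical `weights`/`biases` lists (not one of the architectures studied in the paper). It bounds L by the product of the layers' spectral norms. This bound is often loose, and a Lipschitz regularizer would penalize a quantity like it during training; the training loop itself is not shown.

```python
import numpy as np

def forward(weights, biases, x):
    """Fully connected network: ReLU after every layer except the last."""
    h = x
    for W, b in zip(weights[:-1], biases[:-1]):
        h = np.maximum(W @ h + b, 0.0)
    return weights[-1] @ h + biases[-1]

def lipschitz_upper_bound(weights):
    """Upper bound on the L2 Lipschitz constant of `forward`.

    Each affine layer x -> W x + b is ||W||_2-Lipschitz (the bias cancels in
    f(x) - f(y)), ReLU is 1-Lipschitz, and Lipschitz constants multiply under
    composition, so the product of spectral norms bounds the whole network.
    """
    bound = 1.0
    for W in weights:
        bound *= np.linalg.norm(W, ord=2)  # largest singular value of W
    return float(bound)

# Usage on a small random network (hypothetical sizes 8 -> 16 -> 16 -> 4).
rng = np.random.default_rng(0)
weights = [rng.standard_normal((16, 8)),
           rng.standard_normal((16, 16)),
           rng.standard_normal((4, 16))]
biases = [rng.standard_normal(16), rng.standard_normal(16), rng.standard_normal(4)]
L = lipschitz_upper_bound(weights)

x, y = rng.standard_normal(8), rng.standard_normal(8)
ratio = (np.linalg.norm(forward(weights, biases, x) - forward(weights, biases, y))
         / np.linalg.norm(x - y))
print(ratio <= L)  # True: no pair of inputs can exceed the bound
```

A large value of `L` does not prove the network is unstable, but a small one guarantees that no small perturbation can change the output much. This is why penalizing per-layer norms is a natural way to regularize for robustness.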
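The abstract says the adversarial perturbations are found by maximizing the network's prediction error. The paper itself solves a box-constrained optimization with L-BFGS. The sketch below swaps in a simpler technique: projected sign-gradient ascent on the cross-entropy loss, applied to a toy linear softmax classifier. The model, the function names, and the `eps`/`step`/`n_steps` values are hypothetical. They are chosen only to show a perturbation with ‖r‖∞ ≤ eps flipping the predicted class.

```python
import numpy as np

def softmax(z):
    z = z - z.max()  # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def input_gradient(W, b, x, label):
    """Gradient of the loss -log p[label] w.r.t. the input x,
    for a linear softmax classifier p = softmax(W x + b)."""
    p = softmax(W @ x + b)
    p[label] -= 1.0  # p - onehot(label)
    return W.T @ p

def adversarial_perturbation(W, b, x, label, eps=0.15, step=0.05, n_steps=10):
    """Projected sign-gradient ascent on the loss, keeping ||r||_inf <= eps
    and x + r inside the [0, 1] pixel box."""
    r = np.zeros_like(x)
    for _ in range(n_steps):
        g = input_gradient(W, b, x + r, label)
        r = r + step * np.sign(g)            # move to increase the loss
        r = np.clip(r, -eps, eps)            # stay inside the eps-ball
        r = np.clip(x + r, 0.0, 1.0) - x     # keep a valid image
    return r

# Usage: a 2-class toy model where x is correctly but narrowly classified.
W = np.eye(2)
b = np.zeros(2)
x = np.array([0.55, 0.45])
label = 0
r = adversarial_perturbation(W, b, x, label)
print(np.argmax(W @ x + b), np.argmax(W @ (x + r) + b))  # prints 0 1
```

Sign steps are used so that every coordinate moves by the full step size, which reaches the edge of the L∞ ball in a few iterations. An L2-constrained variant would normalize the gradient instead.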