Follow the Gradient: A Tour of Neural Network Theory, by Prof. Nicolas Flammarion

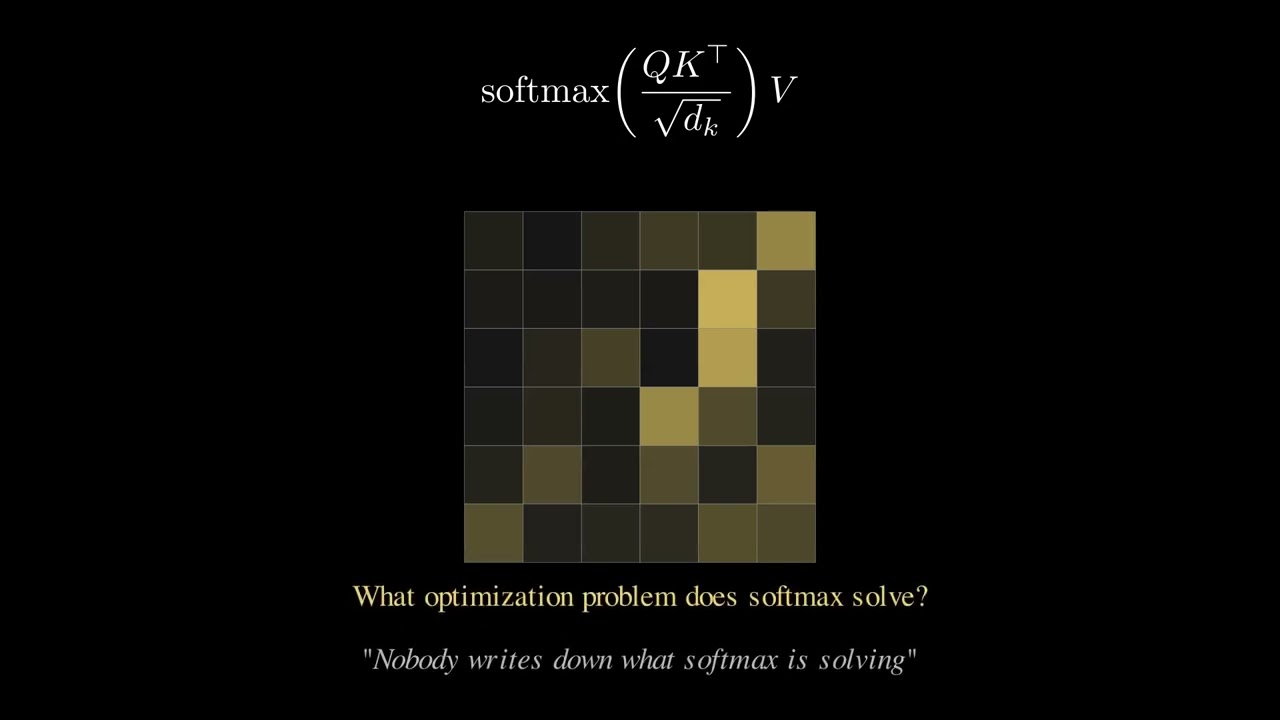

Inaugural Lecture: Follow the Gradient: A Tour of Neural Network Theory

Abstract

With ChatGPT and the latest advances in Large Language Models, artificial intelligence is the talk of the town. However, the theoretical foundations of such large machine learning models remain unclear. In this talk, I discuss recent results that shed light on one of the mysteries behind this success: why gradient methods converge to models that generalize well. We begin our journey by discussing simple linear regression, then move on to explore one-hidden-layer neural networks, and finally investigate similar behavior in deep neural networks.

About the speaker

Nicolas Flammarion is a tenure-track assistant professor in computer science at EPFL. Prior to that, he was a postdoctoral fellow at UC Berkeley, hosted by Michael I. Jordan. He received his PhD in 2017 from Ecole Normale Supérieure in Paris, where he was advised by Alexandre d’Aspremont and Francis Bach. In 2018, he received the Fondation Mathématique Jacques Hadamard prize for the best PhD thesis in the field of optimization, and in 2021, a NeurIPS Outstanding Paper Award. His research focuses primarily on learning problems at the interface of machine learning, statistics, and optimization.
