RNN Architectures Explained — Seq2Seq, Seq2Vec, Encoder Decoder & Deep RNNs

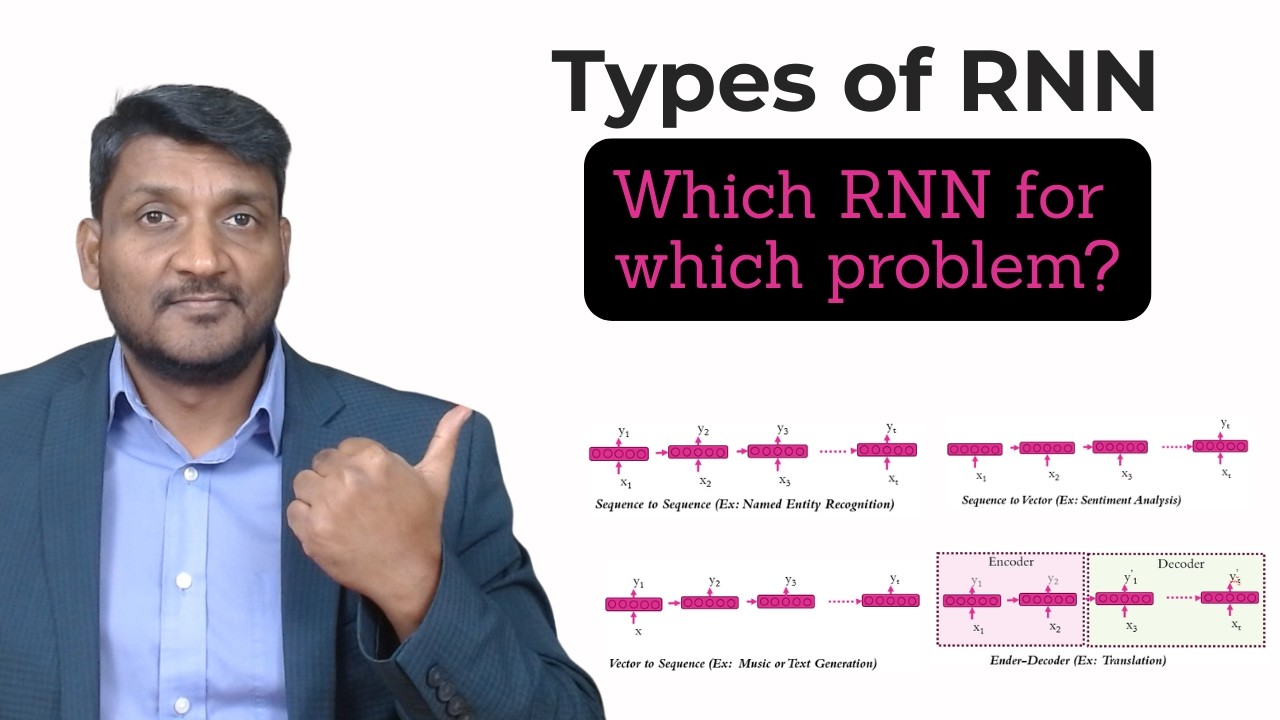

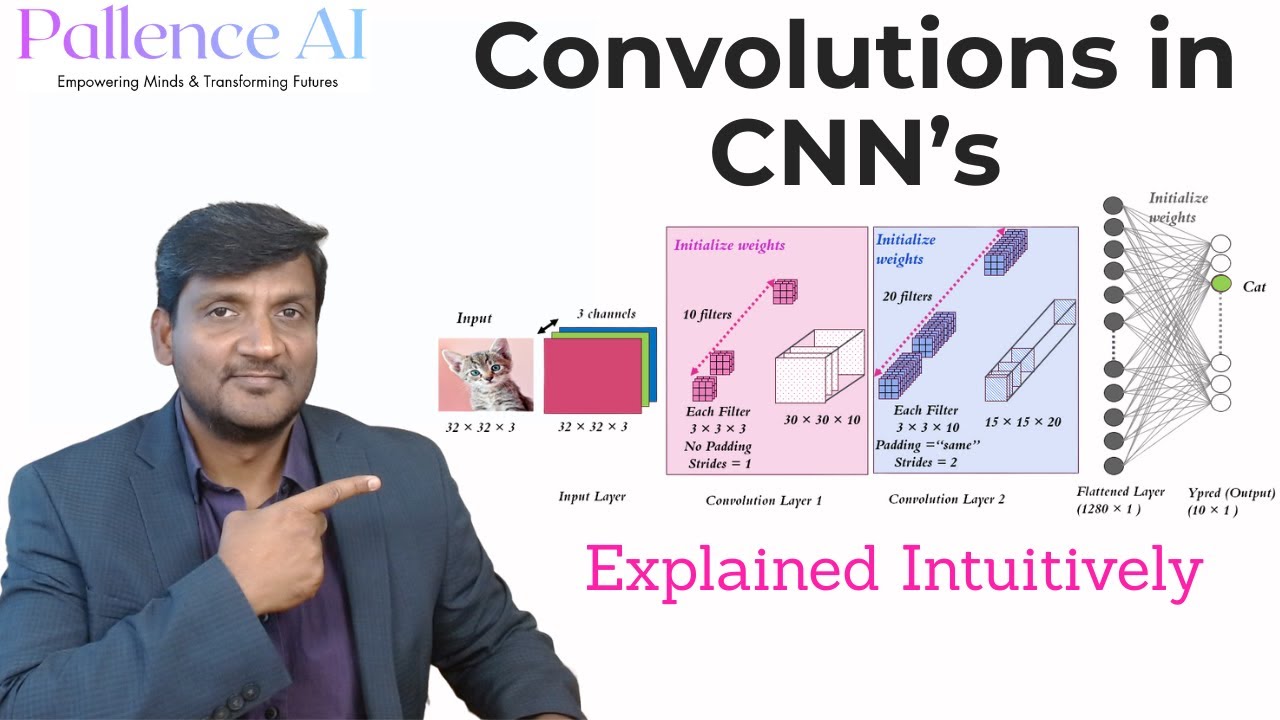

In this video, we explore the different types of Recurrent Neural Network (RNN) architectures and, more importantly, which architecture to use for the problem you're solving. Not all sequence problems are the same: predicting sentiment from a movie review is fundamentally different from translating a sentence from English to Spanish, or from generating text from a single word. Each problem requires a different RNN structure, and understanding those differences is what separates someone who merely knows RNNs exist from someone who can actually apply them.

We cover all the major RNN architecture types with diagrams, so you can see the differences rather than just hear about them:

- Sequence to Sequence: for tasks like Named Entity Recognition, where every token in the input needs a corresponding output.
- Sequence to Vector: for tasks like Sentiment Analysis, where the model reads the entire sequence and predicts a single output at the end.
- Vector to Sequence: for tasks like text and music generation, where a single input generates an entire sequence.
- Encoder Decoder: for tasks like language translation, where one sequence is encoded and a completely new sequence, possibly of a different length, is decoded.
- Deep RNNs: stacking multiple RNN layers for more powerful sequence learning.
- Hybrid CNN + RNN architectures: combining local feature extraction with sequential memory.

By the end of this video you'll have a complete mental map of how RNNs can be structured for different real-world AI problems, and you'll understand why this foundation is essential before moving on to LSTMs and Transformers.

🎓 This video is part of the Deep Learning Mastery playlist, a structured, concept-by-concept journey from AI fundamentals all the way to modern architectures like Transformers and LLMs.
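To make the differences between these structures concrete, here is a minimal pure-Python sketch that wires a single vanilla RNN cell, h_t = tanh(W_x x_t + W_h h_{t-1} + b), into each pattern from the list. It is an illustration under stated assumptions, not the video's implementation: the weights are random and untrained, the sizes are toy values, the decoder starts from an all-zeros vector standing in for a start token, and the hybrid model uses a fixed smoothing kernel in place of a learned CNN filter. All names (`RNNLayer`, `seq_to_vec`, `encoder_decoder`, `cnn_rnn`, and so on) are hypothetical.

```python
import math
import random
from typing import List, Optional

Vector = List[float]
Matrix = List[List[float]]

def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def rand_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small uniform random weights: an arbitrary, untrained init for illustration."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

class RNNLayer:
    """Vanilla RNN layer: h_t = tanh(W_x x_t + W_h h_{t-1} + b)."""

    def __init__(self, input_size: int, hidden_size: int, rng: random.Random):
        self.hidden_size = hidden_size
        self.w_x = rand_matrix(hidden_size, input_size, rng)
        self.w_h = rand_matrix(hidden_size, hidden_size, rng)
        self.b = [0.0] * hidden_size

    def step(self, x: Vector, h: Vector) -> Vector:
        """One time step: mix the current input with the previous hidden state."""
        pre = [a + c + d for a, c, d in
               zip(matvec(self.w_x, x), matvec(self.w_h, h), self.b)]
        return [math.tanh(p) for p in pre]

    def run(self, xs: List[Vector], h0: Optional[Vector] = None) -> List[Vector]:
        """Unroll over a sequence and return the hidden state at every time step."""
        h = h0 if h0 is not None else [0.0] * self.hidden_size
        states = []
        for x in xs:
            h = self.step(x, h)
            states.append(h)
        return states

# Hypothetical toy setup: OUT == IN so generated outputs can be fed back as inputs.
rng = random.Random(0)
IN, HID, OUT = 3, 4, 3
rnn = RNNLayer(IN, HID, rng)        # shared layer (also used as the encoder)
decoder = RNNLayer(OUT, HID, rng)   # separate weights for the decoder
upper = RNNLayer(HID, HID, rng)     # second layer of the deep RNN
w_y = rand_matrix(OUT, HID, rng)    # output projection y_t = W_y h_t
w_dec = rand_matrix(OUT, HID, rng)  # the decoder's own output projection
sample = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]

def seq_to_seq(xs: List[Vector]) -> List[Vector]:
    """Aligned many-to-many (e.g. NER): one output per input token."""
    return [matvec(w_y, h) for h in rnn.run(xs)]

def seq_to_vec(xs: List[Vector]) -> Vector:
    """Many-to-one (e.g. sentiment): read the whole sequence, predict from the last state."""
    return matvec(w_y, rnn.run(xs)[-1])

def vec_to_seq(x: Vector, steps: int) -> List[Vector]:
    """One-to-many (e.g. generation): each output is fed back as the next input."""
    h, inp, outputs = [0.0] * HID, x, []
    for _ in range(steps):
        h = rnn.step(inp, h)
        inp = matvec(w_y, h)
        outputs.append(inp)
    return outputs

def encoder_decoder(src: List[Vector], tgt_len: int) -> List[Vector]:
    """Encode src into its final hidden state, then decode tgt_len steps from it."""
    h = rnn.run(src)[-1]            # fixed-size summary ("context") of the input
    inp, outputs = [0.0] * OUT, []  # all-zeros vector stands in for a start token
    for _ in range(tgt_len):
        h = decoder.step(inp, h)
        inp = matvec(w_dec, h)
        outputs.append(inp)
    return outputs

def deep_rnn(xs: List[Vector]) -> List[Vector]:
    """Stacked RNN: the upper layer consumes the lower layer's hidden-state sequence."""
    return [matvec(w_y, h) for h in upper.run(rnn.run(xs))]

def conv1d(xs: List[Vector], kernel=(0.25, 0.5, 0.25)) -> List[Vector]:
    """Fixed 1D smoothing filter over time ('valid' padding), standing in for a learned CNN."""
    k = len(kernel)
    return [[sum(kernel[j] * xs[t + j][c] for j in range(k)) for c in range(len(xs[0]))]
            for t in range(len(xs) - k + 1)]

def cnn_rnn(xs: List[Vector]) -> Vector:
    """Hybrid: local features from the convolution, then sequential memory from the RNN."""
    return seq_to_vec(conv1d(xs))
```

For the four-step `sample`, `seq_to_seq` returns four 3-element vectors, `seq_to_vec` returns one, and `encoder_decoder(sample, 6)` returns six regardless of the source length; `conv1d` shortens the sequence to two steps before the RNN sees it. Because the weights are untrained, the numbers themselves carry no meaning. In practice you would use a framework layer (for example PyTorch's `torch.nn.RNN`, whose `num_layers` argument stacks layers as in `deep_rnn`) and train it with backpropagation through time.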
👉 Watch the full playlist here: Deep Learning Mastery

📌 Whether you're a university student, a data scientist, or an ML/AI engineer building toward a real understanding of modern AI, this is a concept you don't want to skip.

🔔 Subscribe to Pallence AI for clear, honest, in-depth explanations of AI and Deep Learning, from fundamentals to cutting edge.

⏱️ Timestamps:

- 00:00 Introduction
- 01:22 Different types of RNN
- 04:50 Simple RNN with one hidden unit
- 07:47 Simple RNN with multiple hidden units
- 09:47 Deep RNN
- 10:27 Deep RNN (Sequence to Sequence)
- 10:59 Deep RNN & Hybrid CNN-RNN
- 11:25 Closing & What's Next

#RNN #RecurrentNeuralNetworks #DeepLearning #MachineLearning #SequenceModels #Seq2Seq #EncoderDecoder #NLP #AIEngineering #Transformers #PallenceAI #ArtificialIntelligence #DataScience #NeuralNetworks
