
Why Language Models Hallucinate (Explained by OpenAI)

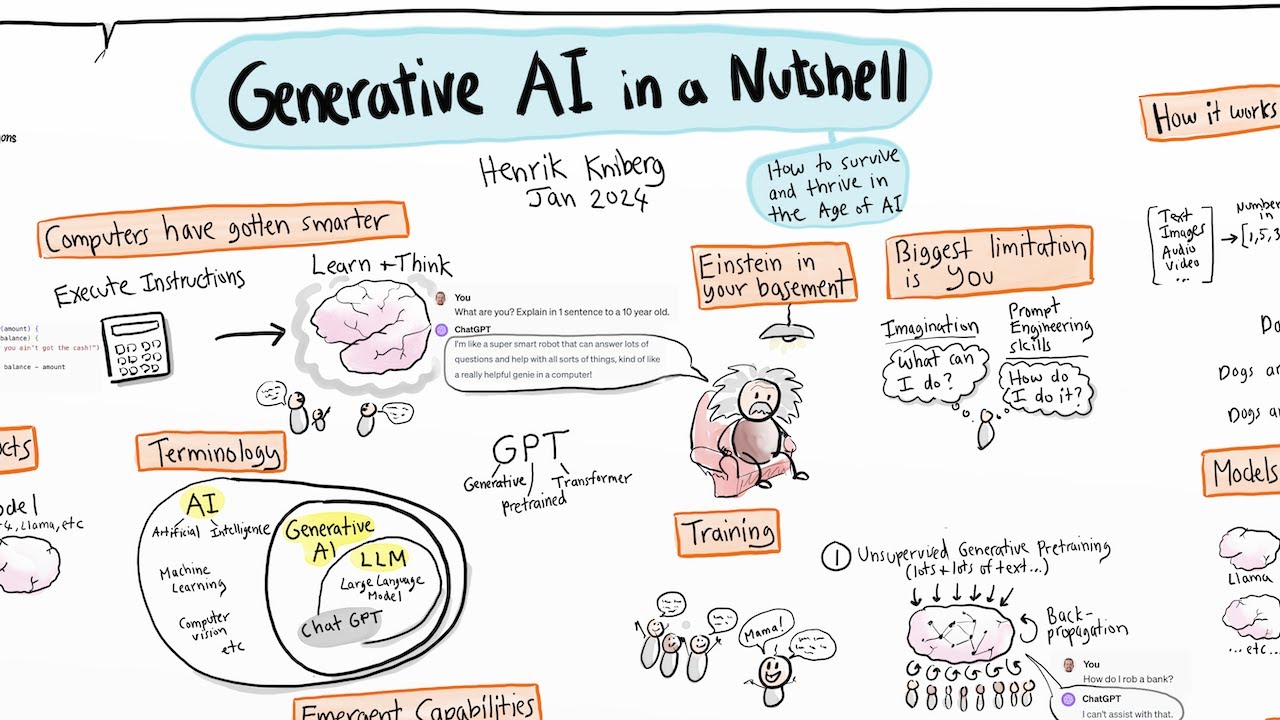

Ever wondered why ChatGPT or Gemini sometimes confidently lies? 🤔 This video breaks down one of the most important papers of 2025, “Why Language Models Hallucinate” by Adam Tauman Kalai and colleagues at OpenAI & Georgia Tech.

🔥 In this episode, we explore:

- How AI “hallucinations” aren’t mysterious bugs but statistical inevitabilities ⚙️
- Why training and evaluation methods actually reward guessing over honesty 📊
- The math behind how language models confuse plausibility with truth 🧮
- Why even the smartest models act like overconfident students taking an exam! 🎓
- And the surprising fix: not a new model, but a new way to grade AI answers 🧠✅

If you care about trustworthy AI, this is a must-watch. We’ll unpack the theory, show real examples, and explain how changing our benchmarks could finally make AI say “I don’t know” when it really doesn’t know.

📚 Paper: Kalai, Nachum, Vempala, Zhang (2025), “Why Language Models Hallucinate”

#AI #MachineLearning #DeepLearning #ArtificialIntelligence #LargeLanguageModels #LLMs #OpenAI #NeuralNetworks #AIEthics #AIEducation #AIHallucination #AITrust #AIFacts #AIMisinformation #AIErrors #TrustworthyAI #ExplainableAI #ResponsibleAI #AICredibility #AIMistakes #AIResearch #TechExplained #ComputerScience #DataScience #MLTheory #AcademicAI #AIPhilosophy #ScienceOfAI #AIPapers #PapersTutorial #PaperTutorial #AIExplained #FutureOfAI #AITutorial #TechTalk #AIInsights #AIForEveryone
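
The point about grading rewarding guessing over honesty can be made concrete with a little expected-value arithmetic. The sketch below is illustrative and is not taken from the video: the function names are hypothetical, and the scoring scheme (+1 for a correct answer, 0 for “I don’t know”, a fixed penalty for a wrong answer) is an assumption in the spirit of the grading change the description mentions.

```python
def expected_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected score of answering when the answer is right with probability p_correct.

    Assumed scheme: correct = +1, wrong = -wrong_penalty, abstaining = 0.
    """
    return p_correct - (1.0 - p_correct) * wrong_penalty


def should_answer(p_correct: float, wrong_penalty: float) -> bool:
    """Answer only if guessing beats abstaining (score 0) in expectation."""
    return expected_score(p_correct, wrong_penalty) > 0.0


def penalty_for_threshold(t: float) -> float:
    """Penalty under which answering pays off only above confidence t (0 <= t < 1)."""
    return t / (1.0 - t)


if __name__ == "__main__":
    shaky = 0.2  # hypothetical: the model is only 20% sure of its answer

    # Binary grading (no penalty): any guess has a positive expected score,
    # so a score-maximizing test taker never says "I don't know".
    print(should_answer(shaky, wrong_penalty=0.0))

    # Penalized grading tuned to a 75% confidence threshold:
    # the same shaky guess now scores worse than abstaining.
    penalty = penalty_for_threshold(0.75)
    print(penalty, should_answer(shaky, penalty), should_answer(0.9, penalty))
```

With no penalty, guessing is never worse than abstaining, which is the “overconfident student” behavior; once wrong answers cost points, abstaining becomes the better choice whenever confidence falls below the chosen threshold.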